International Journal of Latest Research in Engineering and Technology (IJLRET)

ISSN: 2454-5031

www.ijlret.com Volume 2 Issue 1 January 2016 PP 42-51

3D Reconstruction from Single 2D Image

Deepu R, Murali S

Department of Computer Science & Engineering

Maharaja Research Foundation

Maharaja Institute of Technology Mysore, India

Abstract: Perceiving a 3D scene through stereovision comes naturally to human vision, but it remains a challenge for computer systems. The challenge is to recover 3D geometric shape information from planar images, a task termed 3D reconstruction. It is usually done piecewise, by identifying the planes of the scene and constructing a representation of the whole from them. In the proposed method, the image is captured with a calibrated camera. The captured image is perspectively distorted, and the distortion is removed using corner point estimation. The selected points yield the true dimensions of objects in the input image through view metrology. A 3D geometric model is then constructed in VRML according to these true dimensions. During rendering, a texture map is created for each corresponding polygon surface of the VRML model. VRML supports walkthrough of the rendered model, through which different views are generated.

1. Introduction

Image-based 3D scene modelling is a popular research topic that has attracted much attention in recent years. It has a wide range of applications. In civil engineering, engineers need to generate 3D views to convey their designs to customers. It is also used in games and entertainment, to create virtual reality, and for robot navigation inside buildings. In some cases, buildings that have disappeared can be modelled from as little as a single image, for example an old photograph or a painting.

One way to generate 3D views is to use specialized devices that acquire 3D information about the scene. Since these devices are expensive, they cannot always be used. The other solution is manual generation, which requires prior knowledge of the object and engineering skills from the user. Generating thousands of models this way is time-consuming. Hence, there is a need to generate 3D views with less user interaction.

2. Problem Statement

The main objective of 3D modelling is to computationally understand and model a 3D scene from captured images, and to provide machines with a human-like visual system. The approach begins by capturing a 2D image with a calibrated camera. Captured images are usually perspectively distorted. These perspective distortions are eliminated to obtain the true dimensions of objects in the image. A 3D model is constructed in the Virtual Reality Modelling Language (VRML) using the true dimensions. VRML supports visual representation of the 3D model. The perspectively corrected images are then mapped to the corresponding planes of the VRML model to create a realistic effect. VRML supports walkthrough simulation in 3D space, so that the user can navigate the model as required.

3. Existing Methodology

Most existing methods generate 3D views from stereo images, from sequences of monocular images, or from a combination of both. These approaches differ in cost, time, and the amount of user interaction needed to generate 3D models. One existing approach reconstructs a 3D surface from a sequence of images: structure from motion (SFM) is used for automatic calibration, and a depth map is obtained by applying multi-view stereoscopic depth estimation to each calibrated image. For rendering, two texture-based techniques are used: view-dependent geometry and texture (VDGT) and multiple local methods (MLM). Another approach uses the Potemkin model to support reconstruction of the 3D shapes of object instances. Oriented 3D shape primitives at fixed 3D positions are stored in a class model, and the images of each class are labelled for learning. A view-specific 2D recognition system returns the bounding box of the detected object in an image. A model-based segmentation method then obtains the object contour, and the individual parts of the model are obtained from that outline. A shape context algorithm matches and deforms the boundaries of the stored part-labelled image to the detected instance, thereby generating a 3D model of the class from the detected object. Many other approaches are inconsistent and require more user intervention.

4. Architecture of the Proposed Methodology

We have considered three methods of constructing 3D views from a single image. In the first method, the 3D view of an object is generated using the approach discussed in our paper [7]; this is possible because the focal length is known in advance through proper calibration. In the second method, the 3D view is created using the method discussed in [8], in which a second view is automatically generated from the first image of a scene. These first two approaches do not require the geometry of the image to be known. The third method is geometry-based: view metrology of the objects in a scene is obtained, and modelling and rendering give a realistic appearance. Figure 8.1 gives the overall structure of our approach. An image captured with a calibrated camera is the input to the system. In view metrology, the dimensions of the building are determined using one-point perspective, by constructing orthographic views of objects from the perspectively distorted image. The perspective distortion in the image is rectified using plane homography in the perspective elimination process. A 3D geometric model is then constructed in VRML according to the true dimensions obtained from metrology. During rendering, a texture map is applied to each corresponding polygon surface of the VRML model. VRML supports walkthrough of the rendered model, through which different views are generated. The steps of 3D reconstruction using the geometric approach are shown in Figure 1.

4.1. View Metrology

The measurement of object dimensions from images acquired by an imaging device is called view metrology. In a picture taken by a camera, the depth information about the scene is lost. By observing an image, a human can perceive the shape of an object but not metric quantities such as its height or width. View metrology is therefore used to measure the dimensions of the objects in the image.

Figure 1: Steps to generate 3D model

4.2. Perspective Elimination Process

Perspective projection is the representation of an object on a plane surface, called the picture plane, as it would appear to the eye when viewed from a fixed position. The image obtained from any camera is therefore a perspective view of the actual scene. Perspective distortions occur during the image acquisition stage. In perspective imaging, shape is distorted because lines that are parallel in the scene tend to converge to a finite point in the image. Figure 2 shows an example of a perspective view of an object. The perspective distortions are eliminated by vanishing point and corner point selection to obtain the real dimensions of objects in the image, as explained in Sections 6 and 7.

Figure 2: Perspective view of the object
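The convergence of parallel scene lines described above can be illustrated with a minimal sketch. It assumes that each image line is given by two picked points and computes the vanishing point as the intersection of two such lines in homogeneous coordinates. The function names `line_through` and `vanishing_point` are hypothetical and are not taken from the paper.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def line_through(p, q):
    """Homogeneous line through image points p = (x, y) and q = (x, y)."""
    return cross((p[0], p[1], 1.0), (q[0], q[1], 1.0))


def vanishing_point(line1, line2, eps=1e-12):
    """Intersection of two homogeneous lines, or None if they stay parallel."""
    x, y, w = cross(line1, line2)
    if abs(w) < eps:
        return None  # the lines do not converge to a finite image point
    return (x / w, y / w)


# Two building edges that are parallel in the scene but converge in the image.
top_edge = line_through((0.0, 4.0), (2.0, 3.0))
bottom_edge = line_through((0.0, 0.0), (2.0, 1.0))
vp = vanishing_point(top_edge, bottom_edge)
```

With one-point perspective, a single vanishing point of this kind is enough to describe how the receding edges of the scene foreshorten.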

4.3. Modelling

Virtual Reality Modelling Language (VRML) is used for the visual representation of the model. VRML defines a file format that integrates 3D graphics and multimedia. Conceptually, each VRML file is a 3D time-based space that contains graphic objects that can be dynamically modified through a variety of mechanisms. The model of the sample image is built in VRML from the measured dimensions, as shown in Figure 3. The model can be simulated according to the user's requirements.

Figure 3: Model
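As an illustration of building a model from measured dimensions, the following sketch writes a box as a VRML97 `IndexedFaceSet`. It is not the authors' MATLAB implementation. The function name `vrml_box`, the grey material, and the choice of placing the front face in the plane z = 0 are assumptions made for the example.

```python
def vrml_box(width, height, depth):
    """Return VRML97 text for a box with the given true dimensions."""
    w, h, d = float(width), float(height), float(depth)
    points = [
        (0, 0, 0), (w, 0, 0), (w, h, 0), (0, h, 0),      # front corners
        (0, 0, -d), (w, 0, -d), (w, h, -d), (0, h, -d),  # back corners
    ]
    # Each face lists its corners counter-clockwise as seen from outside.
    faces = [
        (0, 1, 2, 3),  # front plane
        (1, 5, 6, 2),  # right plane
        (4, 0, 3, 7),  # left plane
        (3, 2, 6, 7),  # top plane
        (4, 5, 1, 0),  # bottom plane
        (5, 4, 7, 6),  # back plane
    ]
    point_text = ", ".join("%g %g %g" % p for p in points)
    # In VRML, -1 terminates the index list of each face.
    index_text = ", ".join(
        ", ".join(str(i) for i in face) + ", -1" for face in faces
    )
    return (
        "#VRML V2.0 utf8\n"
        "Shape {\n"
        "  appearance Appearance { material Material { diffuseColor 0.8 0.8 0.8 } }\n"
        "  geometry IndexedFaceSet {\n"
        "    coord Coordinate { point [ %s ] }\n"
        "    coordIndex [ %s ]\n"
        "  }\n"
        "}\n" % (point_text, index_text)
    )


model_text = vrml_box(4, 3, 2)
```

Saving `model_text` to a file with a `.wrl` extension should give a scene that a VRML viewer can open for walkthrough.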

4.4. Rendering

In rendering, the perspectively corrected textures are used for mapping. Each texture is mapped to its corresponding polygon surface, as shown in Figure 4. Rendering gives a realistic effect to the model.

Figure 4: Model with tex
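Texture mapping onto one plane of the model can be sketched as follows. The example emits a single VRML97 quad whose `ImageTexture` points at a rectified image, with texture coordinates spanning the whole image. The function name `vrml_textured_quad` and the file name `front.jpg` are hypothetical.

```python
def vrml_textured_quad(corners, image_url):
    """Return VRML97 text for one textured plane.

    corners: four (x, y, z) tuples, counter-clockwise, starting at the
    corner that should show the bottom-left of the texture image.
    """
    if len(corners) != 4:
        raise ValueError("a plane needs exactly four corner points")
    point_text = ", ".join("%g %g %g" % tuple(c) for c in corners)
    return (
        "#VRML V2.0 utf8\n"
        "Shape {\n"
        "  appearance Appearance { texture ImageTexture { url \"%s\" } }\n"
        "  geometry IndexedFaceSet {\n"
        "    coord Coordinate { point [ %s ] }\n"
        "    coordIndex [ 0, 1, 2, 3, -1 ]\n"
        "    texCoord TextureCoordinate { point [ 0 0, 1 0, 1 1, 0 1 ] }\n"
        "    texCoordIndex [ 0, 1, 2, 3, -1 ]\n"
        "  }\n"
        "}\n" % (image_url, point_text)
    )


front_plane = vrml_textured_quad(
    [(0, 0, 0), (4, 0, 0), (4, 3, 0), (0, 3, 0)], "front.jpg"
)
```

Because the texture coordinates cover the full unit square, the image should already be perspectively corrected before it is applied, which matches the order of steps described in this paper.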


5. System Design

System design aims to identify the modules that should be in the system, the specifications of these modules, and how they interact with each other to produce the desired results. The purpose of the design is to plan the solution to the problem specified by the requirements document.

5.1. Dataset Collection

The first stage in image analysis is data collection. Different sets of 2D images are taken at a fixed distance and with a fixed focal length. The images are scaled to a resolution of 512×512 pixels. Test images are shown in Figures 5 to 7.

Figure 5: Test image 1

Figure 6: Test image 2

Figure 7: Test image 3


5.2. Flow diagram of the overall procedure

Figure 8: Flow diagram

6. Corner Estimation

The Harris corner detector is a mathematical operator that finds variations in the intensity gradient of an image. It is invariant to rotation and to additive changes in illumination, but not to scale. It sweeps a window w(x,y) with displacement u in the x direction and v in the y direction and calculates the variation of intensity.
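The expression for the intensity variation is not reproduced in this copy of the paper. The standard Harris formulation is given here as an assumption about what is intended. The symbols $I_x$, $I_y$ (image gradients), $M$ (structure tensor) and $k$ (an empirical constant, commonly chosen around 0.04 to 0.06) are not named in the text.

$$
E(u,v) = \sum_{x,y} w(x,y)\,\bigl[I(x+u,\,y+v) - I(x,y)\bigr]^2
\approx \begin{pmatrix} u & v \end{pmatrix} M \begin{pmatrix} u \\ v \end{pmatrix},
\qquad
M = \sum_{x,y} w(x,y) \begin{pmatrix} I_x^2 & I_x I_y \\ I_x I_y & I_y^2 \end{pmatrix}
$$

$$
R = \det(M) - k\,\bigl(\operatorname{trace}(M)\bigr)^2
$$

A large positive $R$ indicates a corner, a negative $R$ indicates an edge, and $|R|$ close to zero indicates a flat region.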

A window with a score R greater than a threshold is selected, and the points of local maxima of R are considered corners, as shown in Figure 9(a). The corners are then reduced to four using geometry-based calculations, as required by the plane homography method.

Figure 9: (a) All corners are detected (b) Desired corners obtained
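The thresholding and local-maximum selection described above can be sketched in plain Python. This is a minimal illustration, not the authors' MATLAB code. It assumes the standard Harris score R = det(M) - k·trace(M)² computed with a uniform 3×3 window and central-difference gradients. The names `harris_response` and `local_maxima` and the value k = 0.04 are assumptions.

```python
def harris_response(img, k=0.04):
    """Harris score R for every pixel of a 2D list of grey levels."""
    h, w = len(img), len(img[0])
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    # Central-difference gradients; the one-pixel border is left at zero.
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0
    r = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            sxx = sxy = syy = 0.0
            # Sum gradient products over a uniform 3x3 window.
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    gx, gy = ix[y + dy][x + dx], iy[y + dy][x + dx]
                    sxx += gx * gx
                    sxy += gx * gy
                    syy += gy * gy
            det = sxx * syy - sxy * sxy
            trace = sxx + syy
            r[y][x] = det - k * trace * trace
    return r


def local_maxima(r, threshold):
    """(x, y) positions where R exceeds the threshold and is a 3x3 maximum."""
    h, w = len(r), len(r[0])
    corners = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            v = r[y][x]
            if v > threshold and all(
                v >= r[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)
            ):
                corners.append((x, y))
    return corners
```

On a synthetic image containing one bright rectangle corner, the score is positive at the corner, negative along the straight edges, and zero in flat areas, so a suitable threshold keeps only the corner. Reducing the detected corners to the four needed for plane homography is a separate geometric step that this sketch does not cover.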


7. Perspective Estimation

Images captured by pinhole cameras are perspective in nature. In a perspective image, objects of the same size appear bigger when they are closer to the viewpoint and smaller when they are farther away. Hence, farther objects are represented by fewer pixels than closer objects. For example, when an object is inclined with respect to the viewer, parts of it recede from the viewpoint, and the fewer pixels that represent them carry less information for image processing. Perspective images therefore lead to ambiguous results when we try to measure the size of objects in the picture. The rectangular plane ABCD in Figure 10(a) is seen as A1B1C1D1 under perspective distortion, as depicted in Figure 10(b). This is how images of objects are formed in the image acquisition process.

Figure 10: (a) Rectangular Plane (b) Perspective distortion
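The mapping between the distorted quadrilateral A1B1C1D1 and the rectangle ABCD is a plane homography, which four corner correspondences determine. The sketch below estimates it by solving the standard eight-equation linear system with Gaussian elimination. It is an illustration under the assumption that the last entry of the homography is fixed to 1. The function names and the sample coordinates are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a x = b with partial pivoting."""
    n = len(b)
    m = [list(map(float, row)) + [float(b[i])] for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda row: abs(m[row][c]))
        if abs(m[p][c]) < 1e-12:
            raise ValueError("degenerate corner configuration")
        m[c], m[p] = m[p], m[c]
        for row in range(c + 1, n):
            f = m[row][c] / m[c][c]
            for j in range(c, n + 1):
                m[row][j] -= f * m[c][j]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def homography(src, dst):
    """3x3 homography mapping four src points onto four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h0 x + h1 y + h2) / (h6 x + h7 y + 1), and likewise for v.
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(hm, point):
    """Map one (x, y) point through the homography."""
    x, y = point
    w = hm[2][0] * x + hm[2][1] * y + hm[2][2]
    return ((hm[0][0] * x + hm[0][1] * y + hm[0][2]) / w,
            (hm[1][0] * x + hm[1][1] * y + hm[1][2]) / w)


# Distorted corners A1, B1, C1, D1 and the rectangle they should become.
distorted = [(1.0, 0.0), (3.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
rectangle = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
H = homography(distorted, rectangle)
```

Applying `apply_homography(H, p)` to every pixel position of the distorted plane (or the inverse mapping for resampling) yields the rectified, front-facing view of that plane.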

8. Image Representation

First, small homogeneous regions in the image, called superpixels, are identified and used as the basic unit of representation (Figure 11). Such regions can be reliably found using over-segmentation, and each represents a coherent region in the image whose pixels have similar properties. In most images, a superpixel is a small part of a structure, such as part of a wall, and therefore represents a plane. In this experiment, an over-segmentation algorithm is used to obtain the superpixels. Typically, an image is over-segmented into many superpixels representing regions of similar colour and texture. The goal is to infer the location and orientation of each of these superpixels.

Figure 11: The feature vector for a superpixel, which includes immediate and distant neighbours in multiple scales


9. Probabilistic Model

It is difficult to infer the 3D information of a region from local cues alone; it must be inferred in relation to the 3D information of other regions.

Figure 12: An illustration of the Markov Random Field (MRF) for inferring 3-d structure.

10. Transformations

Suppose two images I1 and I2 to be registered differ by both a translation and a rotation, with an angle of rotation theta between them. When I2 is rotated by theta, only a translation remains between the images. So, by rotating I2 one degree at a time and computing the correlation peak for each angle, a stage is reached at which only translation remains between the images. That angle is the angle of rotation.

1. Translation transform: $x' = x + b$

2. Affine transform: $x' = ax + b$

3. Bilinear transform: $x' = p_1 xy + p_2 x + p_3 y + p_4$, $y' = p_5 xy + p_6 x + p_7 y + p_8$

4. Projective transform: $x' = (ax + b)/(cx + 1)$
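The four transforms listed above can be written directly as functions. The sketch keeps the one-dimensional forms given in the list for the translation, affine and projective cases, and the two-coordinate form for the bilinear case. The function names and the sample parameter values are hypothetical.

```python
def translation(x, b):
    """Translation: shift the coordinate by b."""
    return x + b


def affine(x, a, b):
    """Affine: scale by a, then shift by b."""
    return a * x + b


def bilinear(x, y, p):
    """Bilinear: p holds the eight parameters p1..p8 in order."""
    p1, p2, p3, p4, p5, p6, p7, p8 = p
    new_x = p1 * x * y + p2 * x + p3 * y + p4
    new_y = p5 * x * y + p6 * x + p7 * y + p8
    return new_x, new_y


def projective(x, a, b, c):
    """Projective: affine numerator divided by (c x + 1)."""
    denominator = c * x + 1.0
    if denominator == 0.0:
        raise ZeroDivisionError("point maps to infinity")
    return (a * x + b) / denominator


shifted = translation(3.0, b=2.0)
scaled = affine(3.0, a=2.0, b=1.0)
warped = bilinear(2.0, 3.0, (1, 2, 3, 4, 5, 6, 7, 8))
projected = projective(2.0, a=3.0, b=1.0, c=0.5)
```

Setting c = 0 in `projective` reduces it to the affine case, and setting a = 1 in `affine` reduces it to a translation, which shows how each transform generalizes the previous one.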

11. Implementation

The implementation phase of any project is the most important, as it yields the final solution to the problem at hand. It involves the actual realization of the ideas expressed in the analysis document and developed in the design phase.

11.1. Image acquisition

During this phase, a calibrated camera is used to capture the images, as discussed above.

11.2. View metrology

The image captured with the calibrated camera is shown in Figure 13. The image is measured to obtain dimensions such as length, width, and breadth. The image given to the system is first converted to grayscale.

Figure 13 : Input image
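The grayscale conversion step can be illustrated with a short sketch. The paper does not state which weights are used. The sketch assumes the common luminance weights 0.299, 0.587 and 0.114 for red, green and blue, and the function name `to_grayscale` is hypothetical.

```python
def to_grayscale(rgb_image):
    """Convert a 2D list of (r, g, b) pixels (0-255) to grey levels (0-255)."""
    gray = []
    for row in rgb_image:
        # Weighted sum of the colour channels, rounded to the nearest level.
        gray.append([int(round(0.299 * r + 0.587 * g + 0.114 * b))
                     for (r, g, b) in row])
    return gray


sample = [[(255, 255, 255), (0, 0, 0)],
          [(255, 0, 0), (0, 0, 255)]]
gray_sample = to_grayscale(sample)
```

The result is a single-channel image of the same size, which is the form expected by gradient-based steps such as corner detection.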


11.3. Corner point selection

To find the true dimensions of objects in the image, corner points and one vanishing point are selected, as shown in Figure 14. The corner points and the vanishing point help to measure the dimensions in the image. Square symbols mark the selected corner points. Triangles mark the reference points generated with respect to the corner points.

The lines generated in Figure 15 show the distances between pixels in the various planes. These points separate the image into different planes, such as the front, top, bottom, and side planes.

Depth information for each plane is generated, as shown in Figure 16. These planes are used to generate the 3D model in VRML, according to the true dimensions from metrology.

Figure 14: Corner points selection

Figure 15: Measurement of dimension

(a): Front plane

(b): Right plane


(c): Left plane

(d): Top plane

(e): Bottom plane

Figure 16: 3D planes of the input image

Figure 17: 3D rendered model in VRML

Figure 18: Wireframe model


During rendering, texture mapping is performed on each corresponding polygon surface of the VRML model, as shown in Figure 17. The wireframe of the generated 3D model is shown in Figure 18. VRML supports walkthrough of the rendered model, through which different views are generated.

12. Results

This section discusses the results of the proposed method. The method is implemented in MATLAB and the output is viewed in a VRML viewer. The method was tested on several 2D images; a comparison with the existing approach is shown in Figure 19. Using the dimensions obtained from the proposed method, a 3D model can be built that matches the real-world dimensions of the object.

Figure 19: (a) Input image (b) Output 3D plane

13. Conclusion and Future Work

This paper presents a method for generating a 3D model using multiple planes of a 2D image. The generated 3D model preserves the actual dimensions of the object in the image. View metrology constructs orthographic views from the perspectively distorted image. The perspective distortion is corrected using vanishing point and corner point selection, and the image is stretched according to the true dimensions obtained from view metrology. This is an easy way to generate a model with less user interaction. Finally, the 3D model is rendered to give a realistic effect. The method can be applied to any image containing depth information to calculate true dimensions. It can be used in robot path planning inside buildings, artificial intelligence, animation, gaming, interior design, and architecture.

In this approach, single images are used for 3D modelling, and depth is determined only for images that have at least one vanishing point and identifiable corner points. Future work includes extending the approach to accept any image and to work on video. For example, goal-line technology is used in football matches to detect whether the ball has crossed the goal line.

References

[1]. Akash Kushal and Jean Ponce, Modeling 3D Objects from Stereo Views and Recognizing Them in Photographs, University of Illinois at Urbana-Champaign, 2005.

[2]. Ashutosh Saxena, Min Sun and Andrew Y. Ng, Learning 3-D Scene Structure from a Single Still Image, IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI), 2008.

[3]. S Murali and N Avinash, Estimation of Depth Information from a Single View in an Image, 3DTV Conference: The True Vision - Capture, Transmission and Display of 3D Video (3DTV-CON), 2010.

[4]. Heewon Lee and Alper Yilmaz, 3D Reconstruction Using Photo Consistency from Uncalibrated Multiple Views, Ohio State University, 2010.

[5]. K Susheel Kumar, Vijay Bhaskar Semwal, Shitala Prasad and R. C. Tripathi, Generating 3D Model Using 2D Images of an Object, International Journal of Engineering Science and Technology (IJEST), 2011.

[6]. Geetha Kiran A and Murali S, Automatic Rectification of Perspective Distortion from a Single Image Using Plane Homography, International Journal on Computational Sciences & Applications (IJCSA), Vol. 3, No. 5, October 2013.

[7]. Deepu R, Murali S and Vikram Raju, A Mathematical Model for the Determination of Distance of an Object in a 2D Image, 17th International Conference on Image Processing, Computer Vision & Pattern Recognition (IPCV13), World Congress in Computer Science, Computer Engineering, and Applied Computing, July 22-25, 2013, Las Vegas, USA.

[8]. Deepu R, Honnaraju B and Murali S, Automatic Generation of Second Image from an Image for Stereovision, IEEE International Conference on Advances in Computing, Communications and Informatics (ICACCI-2014), ISBN: 978-1-4799-3080-7, pp. 920-927.

www.ijlret.com

51 | Page

Vous aimerez peut-être aussi

- Experimental Implementation of Odd-Even Scheme For Air Pollution Control in Delhi, IndiaDocument9 pagesExperimental Implementation of Odd-Even Scheme For Air Pollution Control in Delhi, IndiaInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Effect of Microwave Treatment On The Grindabiliy of Galena-Sphalerite OresDocument7 pagesEffect of Microwave Treatment On The Grindabiliy of Galena-Sphalerite OresInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Potentials of Rice-Husk Ash As A Soil StabilizerDocument9 pagesPotentials of Rice-Husk Ash As A Soil StabilizerInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Upper-Nonconvergent Objects Segmentation Method On Single Depth Image For Rescue RobotsDocument8 pagesUpper-Nonconvergent Objects Segmentation Method On Single Depth Image For Rescue RobotsInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Investigation Into The Strength Characteristics of Reinforcement Steel Rods in Sokoto Market, Sokoto State NigeriaDocument4 pagesInvestigation Into The Strength Characteristics of Reinforcement Steel Rods in Sokoto Market, Sokoto State NigeriaInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Thermoluminescence Properties of Eu Doped Bam PhosphorDocument3 pagesThermoluminescence Properties of Eu Doped Bam PhosphorInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Impact of Machine Tools Utilization On Students' Skill Acquisition in Metalwork Technology Department, Kano State Technical CollegesDocument4 pagesImpact of Machine Tools Utilization On Students' Skill Acquisition in Metalwork Technology Department, Kano State Technical CollegesInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- A Quantitative Analysis of 802.11 Ah Wireless StandardDocument4 pagesA Quantitative Analysis of 802.11 Ah Wireless StandardInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Experimental Comparative Analysis of Clay Pot Refrigeration Using Two Different Designs of PotsDocument6 pagesExperimental Comparative Analysis of Clay Pot Refrigeration Using Two Different Designs of PotsInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Utilization of Quantity Surveyors' Skills in Construction Industry in South Eastern NigeriaDocument10 pagesUtilization of Quantity Surveyors' Skills in Construction Industry in South Eastern NigeriaInternational Journal of Latest Research in Engineering and Technology100% (1)

- Crystal and Molecular Structure of Thiadiazole Derivatives 5 - ( (4-Methoxybenzyl) Sulfanyl) - 2-Methyl-1,3,4-ThiadiazoleDocument8 pagesCrystal and Molecular Structure of Thiadiazole Derivatives 5 - ( (4-Methoxybenzyl) Sulfanyl) - 2-Methyl-1,3,4-ThiadiazoleInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Preparation, Characterization and Performance Evaluation of Chitosan As An Adsorbent For Remazol RedDocument11 pagesPreparation, Characterization and Performance Evaluation of Chitosan As An Adsorbent For Remazol RedInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Electrolytic Method For Deactivation of Microbial Pathogens in Surface Water For Domestic UseDocument11 pagesElectrolytic Method For Deactivation of Microbial Pathogens in Surface Water For Domestic UseInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Investigation of Thermo-Acoustic Excess Parameters of Binary Liquid Mixture Using Ultrasonic Non Destructive TechniqueDocument7 pagesInvestigation of Thermo-Acoustic Excess Parameters of Binary Liquid Mixture Using Ultrasonic Non Destructive TechniqueInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Analysis of Wind Power Density Inninava Governorate / IRAQDocument10 pagesAnalysis of Wind Power Density Inninava Governorate / IRAQInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- A Survey On Different Review Mining TechniquesDocument4 pagesA Survey On Different Review Mining TechniquesInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- An Overview To Cognitive Radio Spectrum SharingDocument6 pagesAn Overview To Cognitive Radio Spectrum SharingInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Testing of Vertical Axis Wind Turbine in Subsonic Wind TunnelDocument8 pagesTesting of Vertical Axis Wind Turbine in Subsonic Wind TunnelInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- A Review On Friction Stir Welding of Dissimilar Materials Between Aluminium Alloys To CopperDocument7 pagesA Review On Friction Stir Welding of Dissimilar Materials Between Aluminium Alloys To CopperInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Analysis of Different Ways of Crosstalk Measurement in GSM NetworkDocument5 pagesAnalysis of Different Ways of Crosstalk Measurement in GSM NetworkInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Digital Inpainting Techniques - A SurveyDocument3 pagesDigital Inpainting Techniques - A SurveyInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Exploring The Geotechnical Properties of Soil in Amassoma, Bayelsa State, Nigeria For Classification Purpose Using The Unified Soil Classification SystemsDocument5 pagesExploring The Geotechnical Properties of Soil in Amassoma, Bayelsa State, Nigeria For Classification Purpose Using The Unified Soil Classification SystemsInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- A Survey On Secrecy Preserving Multi-Keyword Matching Technique On Cloud For Encrypted DataDocument4 pagesA Survey On Secrecy Preserving Multi-Keyword Matching Technique On Cloud For Encrypted DataInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Comparison Between Touch Gesture and Pupil Reaction To Find Occurrences of Interested Item in Mobile Web BrowsingDocument10 pagesComparison Between Touch Gesture and Pupil Reaction To Find Occurrences of Interested Item in Mobile Web BrowsingInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Study On Nano-Deposited Ni-Based Electrodes For Hydrogen Evolution From Sea Water ElectrolysisDocument12 pagesStudy On Nano-Deposited Ni-Based Electrodes For Hydrogen Evolution From Sea Water ElectrolysisInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Effect of Hot Water Pre-Treatment On Mechanical and Physical Properties of Wood-Cement Bonded Particles BoardDocument4 pagesEffect of Hot Water Pre-Treatment On Mechanical and Physical Properties of Wood-Cement Bonded Particles BoardInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- A Proposal On Path Loss Modelling in Millimeter Wave Band For Mobile CommunicationsDocument3 pagesA Proposal On Path Loss Modelling in Millimeter Wave Band For Mobile CommunicationsInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Effects of Rain Drops Rate On Digital Broadcasting Satellite (DBS) System in KadunaDocument6 pagesEffects of Rain Drops Rate On Digital Broadcasting Satellite (DBS) System in KadunaInternational Journal of Latest Research in Engineering and TechnologyPas encore d'évaluation

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceD'EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceÉvaluation : 4 sur 5 étoiles4/5 (895)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeD'EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeÉvaluation : 4 sur 5 étoiles4/5 (5794)

- The Yellow House: A Memoir (2019 National Book Award Winner)D'EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Évaluation : 4 sur 5 étoiles4/5 (98)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureD'EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureÉvaluation : 4.5 sur 5 étoiles4.5/5 (474)

- Shoe Dog: A Memoir by the Creator of NikeD'EverandShoe Dog: A Memoir by the Creator of NikeÉvaluation : 4.5 sur 5 étoiles4.5/5 (537)

- The Little Book of Hygge: Danish Secrets to Happy LivingD'EverandThe Little Book of Hygge: Danish Secrets to Happy LivingÉvaluation : 3.5 sur 5 étoiles3.5/5 (399)

- On Fire: The (Burning) Case for a Green New DealD'EverandOn Fire: The (Burning) Case for a Green New DealÉvaluation : 4 sur 5 étoiles4/5 (73)

- Never Split the Difference: Negotiating As If Your Life Depended On ItD'EverandNever Split the Difference: Negotiating As If Your Life Depended On ItÉvaluation : 4.5 sur 5 étoiles4.5/5 (838)

- Grit: The Power of Passion and PerseveranceD'EverandGrit: The Power of Passion and PerseveranceÉvaluation : 4 sur 5 étoiles4/5 (588)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryD'EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryÉvaluation : 3.5 sur 5 étoiles3.5/5 (231)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaD'EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaÉvaluation : 4.5 sur 5 étoiles4.5/5 (266)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersD'EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersÉvaluation : 4.5 sur 5 étoiles4.5/5 (344)

- The Emperor of All Maladies: A Biography of CancerD'EverandThe Emperor of All Maladies: A Biography of CancerÉvaluation : 4.5 sur 5 étoiles4.5/5 (271)

- Team of Rivals: The Political Genius of Abraham LincolnD'EverandTeam of Rivals: The Political Genius of Abraham LincolnÉvaluation : 4.5 sur 5 étoiles4.5/5 (234)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreD'EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreÉvaluation : 4 sur 5 étoiles4/5 (1090)

- The Unwinding: An Inner History of the New AmericaD'EverandThe Unwinding: An Inner History of the New AmericaÉvaluation : 4 sur 5 étoiles4/5 (45)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyD'EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyÉvaluation : 3.5 sur 5 étoiles3.5/5 (2259)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)D'EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Évaluation : 4.5 sur 5 étoiles4.5/5 (120)

- Her Body and Other Parties: StoriesD'EverandHer Body and Other Parties: StoriesÉvaluation : 4 sur 5 étoiles4/5 (821)

- Interim Summer Placement Report 2018 20 DoMS IISc Bangalore Added1Document6 pagesInterim Summer Placement Report 2018 20 DoMS IISc Bangalore Added1Garvit GoyalPas encore d'évaluation

- Ch. 3 Problem Solving and Logical Reasoning 3.1 Problem-Solving StrategiesDocument21 pagesCh. 3 Problem Solving and Logical Reasoning 3.1 Problem-Solving StrategiesEpic WinPas encore d'évaluation

- Loops PDFDocument15 pagesLoops PDFRacer GovardhanPas encore d'évaluation

- CplusplususDocument947 pagesCplusplususJeremy HutchinsonPas encore d'évaluation

- LIST OF STUDENTS Mobiloitte 1Document3 pagesLIST OF STUDENTS Mobiloitte 1Ishika PatilPas encore d'évaluation

- C & DS at A GlanceDocument98 pagesC & DS at A GlanceDrKamesh NynalasettyPas encore d'évaluation

- Cs 501 Theory of Computation Jun 2020Document4 pagesCs 501 Theory of Computation Jun 2020Sanjay PrajapatiPas encore d'évaluation

- Landmark - Drilling and Completions Portfolio PDFDocument1 pageLandmark - Drilling and Completions Portfolio PDFAnonymous VNu3ODGavPas encore d'évaluation

- Storchak Design Shaping Machine ToolingDocument27 pagesStorchak Design Shaping Machine ToolingCrush SamPas encore d'évaluation

- CMS Manual EnglishDocument44 pagesCMS Manual EnglishJUAN DAVID100% (1)

- E-Learning System On Computer Hardware Technologies: User ManualDocument14 pagesE-Learning System On Computer Hardware Technologies: User ManualLasithaPas encore d'évaluation

- Queensland Health Infomration and Communications and Technology Cabling Standard V1.4Document167 pagesQueensland Health Infomration and Communications and Technology Cabling Standard V1.4Lovedeep BhuttaPas encore d'évaluation

- Lab ManualDocument57 pagesLab ManualRahul SinghPas encore d'évaluation

- Operations and Team Leader Empower Training v1128Document102 pagesOperations and Team Leader Empower Training v1128Joana Reyes MateoPas encore d'évaluation

- CRJ Poh 12 01 2008 PDFDocument780 pagesCRJ Poh 12 01 2008 PDFGeoffrey MontgomeryPas encore d'évaluation

- 2015 - WitvlietDocument19 pages2015 - Witvlietdot16ePas encore d'évaluation

- Unit2 PDFDocument8 pagesUnit2 PDFnadiaelaPas encore d'évaluation

- Dspic33Fj32Gp302/304, Dspic33Fj64Gpx02/X04, and Dspic33Fj128Gpx02/X04 Data SheetDocument402 pagesDspic33Fj32Gp302/304, Dspic33Fj64Gpx02/X04, and Dspic33Fj128Gpx02/X04 Data Sheetmikehibbett1Pas encore d'évaluation

- Best Practices Snoc White PaperDocument9 pagesBest Practices Snoc White PaperAkashdubePas encore d'évaluation

- Programming Instruction For Hach DR 2800Document7 pagesProgramming Instruction For Hach DR 2800saras unggulPas encore d'évaluation

- Java Advanced Imaging APIDocument26 pagesJava Advanced Imaging APIanilrkurupPas encore d'évaluation

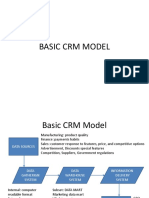

- CRM Model and ArchitectureDocument33 pagesCRM Model and ArchitectureVivek Jain100% (1)

- Resume Paul PresentDocument3 pagesResume Paul PresentPaul MosqueraPas encore d'évaluation

- Tilak Nepali Mis ProjectDocument18 pagesTilak Nepali Mis ProjectIgeli tamangPas encore d'évaluation

- WN Ce1905 en PDFDocument708 pagesWN Ce1905 en PDFanuagarwal anuPas encore d'évaluation

- Round RobinDocument15 pagesRound RobinSohaib AijazPas encore d'évaluation

- SAFE Key Features and TerminologyDocument74 pagesSAFE Key Features and TerminologyPAUC CAPAIPas encore d'évaluation

- Effect of Model and Pretraining Scale On Catastrophic Forgetting in Neural NetworksDocument33 pagesEffect of Model and Pretraining Scale On Catastrophic Forgetting in Neural Networkskai luPas encore d'évaluation

- Eviews 5 Users Guide PDFDocument2 pagesEviews 5 Users Guide PDFFredPas encore d'évaluation

- DSP Hardware: EKT353 Lecture Notes by Professor Dr. Farid GhaniDocument44 pagesDSP Hardware: EKT353 Lecture Notes by Professor Dr. Farid GhanifisriiPas encore d'évaluation