THE EFFECTIVE WAY OF PROCESSOR PERFORMANCE ENHANCEMENT BY PROPER BRANCH HANDLING

Jisha P Abraham1 and Dr. Sheena Mathew2

1 M A College of Engineering, Kothamangalam, Kerala, India
jishaanil@gmail.com

2 Cochin University of Science and Technology, Cochin-22, Kerala, India

ABSTRACT

Processor performance depends heavily on a steady supply of the correct instructions at the right time. Instruction prefetching is one of the mechanisms proposed to reduce instruction cache misses, which in turn increases the supply of instructions to the processor. Technology trends indicate that the gap between processor speed and memory data transfer speed is likely to widen in the future. Prefetching can significantly increase effective memory bandwidth, but unsuccessful prefetches pollute the primary cache. Prefetching can be done in either software or hardware. In software prefetching, the compiler inserts prefetch code into the program; because the actual memory capacity is not known to the compiler, this can lead to some harmful prefetches. Hardware prefetching, instead of inserting prefetch code, uses extra hardware that is exercised during execution. The most significant source of lost performance is the processor waiting for the next instruction to become available. Existing prefetching methods stress only the fetching of instructions for execution, not the overall performance of the processor. This paper is an attempt to study branch handling in a uniprocessing environment, where proper cache memory management is enabled inside the memory management unit whenever a branch is identified.

KEYWORDS

Uniprocessing, Prefetch, Branch handling, Processor performance.

1. INTRODUCTION

The performance of superscalar processors and high-speed sequential machines is degraded by instruction cache misses. Instruction prefetch algorithms attempt to reduce this degradation by bringing instructions into the instruction cache before they are needed by the CPU fetch unit. Technology trends indicate that the gap between processor speed and memory data transfer speed is likely to widen in the future. A traditional solution to this problem is to introduce multi-level cache hierarchies. Prefetching reduces memory access latency by fetching lines into the cache before a demand reference is made. Each missing cache line should be prefetched so that it arrives in the cache just before its next reference, so each prefetching technique must be able to predict the prefetch addresses. This leads to the following issues. First, addresses that cannot be predicted accurately limit the effectiveness of speculative prefetching. Second, even with accurate predictions and prefetches issued early enough to cover the nominal latency, the full memory latency may not be hidden because of additional delays caused by limited memory bandwidth. Third, prefetched lines, whether correctly predicted or not, that are issued too early pollute the cache by replacing desirable lines.

There are two main techniques to attack memory latency: (a) the first set of techniques attempts to reduce memory latency and (b) the second set attempts to hide it. Prefetching can be done in either software or hardware. Avoiding useless prefetches saves memory bandwidth and improves prefetch performance. Our study of branch prediction leads to the following conclusions. Demand fetching is not an effective mechanism for filling the cache memory with the required instructions. Conventional hardware prefetchers are not useful over the time intervals in which performance loss is most dire. Bulk transfer of the private cache is surprisingly ineffective, and in many cases is worse than doing nothing. The only overhead in the proposed approach is the overhead incurred while identifying the branch instruction.

Jan Zizka (Eds): CCSIT, SIPP, AISC, PDCTA - 2013, pp. 451-457, 2013. CS & IT-CSCP 2013. DOI: 10.5121/csit.2013.3651

Computer Science & Information Technology (CS & IT)

2. BACKGROUND AND RELATED WORK

Sair et al. [14] survey several prefetchers and introduce a method for classifying memory access behaviors in hardware: the branch access stream is matched against behavior-specific tables in parallel. We use a similar technique to list the branches in the programming environment.

The study by Mahmut and Yuanrui [15] shows that the timing and scheduling of prefetch instructions is a critical issue in software data prefetching, and that prefetch instructions must be issued in a timely manner to be useful. If a prefetch is issued too early, the prefetched data may be replaced from the cache before its use, or it may cause the replacement of other useful data from the higher levels of the memory hierarchy. If the prefetch is issued too late, the requested data may not arrive before the actual memory reference is made, thereby introducing processor stall cycles. We make use of this concept when pre-empting the currently running sequence from the processor.

I.K. Chen [6]: next-line prefetching tries to prefetch sequential cache lines before they are needed by the CPU's fetch unit. If the next line is not resident in the cache, it is prefetched when an instruction located some distance into the current line is accessed. This specified distance is measured from the end of the cache line and is called the fetch-ahead distance. Next-line prefetching predicts that execution will fall through any conditional branches in the current line and continue along the sequential path. When a branch is taken, the next-line guess is incorrect, except in the case of short branches, and the correct execution path is not prefetched. The performance of the scheme depends on the choice of fetch-ahead distance. The number of wasted processor cycles can be calculated using this fetch-ahead distance.

Runahead execution [16] prefetches by speculatively executing the application while ignoring dependences on long-latency misses. This seems well suited to our need to prefetch the branch instruction where branching is identified.
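The next-line scheme described above can be illustrated with a small simulation. This is a minimal sketch, not the cited authors' implementation: the function name, the 32-byte line size, the 8-byte fetch-ahead distance, and the unbounded cache are all assumptions chosen for illustration.

```python
def next_line_prefetches(fetch_addresses, line_size=32, fetch_ahead=8):
    """Return the cache-line numbers a next-line prefetcher would request.

    A prefetch of line n+1 is triggered when a fetch lands within
    `fetch_ahead` bytes of the end of line n and line n+1 is not resident.
    The cache is modelled as unbounded (an assumption made for brevity).
    """
    resident = set()    # line numbers currently held in the cache
    prefetched = []     # prefetch requests, in issue order
    for addr in fetch_addresses:
        line = addr // line_size
        resident.add(line)  # the demand fetch brings the current line in
        distance_to_end = line_size - (addr % line_size)
        if distance_to_end <= fetch_ahead and (line + 1) not in resident:
            prefetched.append(line + 1)
            resident.add(line + 1)
    return prefetched


# Sequential execution over two lines triggers one prefetch per line.
sequential = list(range(0, 64, 4))
# A taken branch early in the line jumps away before the trigger point,
# so the sequential guess is never issued and the target is not prefetched.
taken_branch = [0, 4, 8, 128, 132]
```

In this sketch the sequential stream causes the following line to be requested each time execution nears the end of a line, while the stream with a taken branch issues no prefetch at all, which is the weakness of the scheme noted above.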

3. BASELINE ARCHITECTURE

On a single-processor system, if the processor executes only one instruction at a time, then multiprogramming in itself does not involve true parallelism. Of course, if the single main processor in the system happens to be pipelined, then that feature leads to pseudo-parallelism. Pseudo-parallelism and true parallelism share some technical issues in common, related to the need for synchronization between running programs. Figure 1 is a somewhat detailed view of four programs, labeled P1, P2, P3, and P4, running in multiprogramming mode. In the top part of the figure, all four programs seem to be running in parallel. With time, each program makes progress in terms of both the instructions executed and the input/output performed. Because there is a single processor, there is no parallelism in this system in terms of actual program execution. A running program is such a crucial entity in a computer system that it is given a name of its own and is referred to as a process. The behavior of a running program can be described in terms of the time it spends in its compute phase and its I/O phase.

4. MOTIVATION

Parallelism in a uniprocessor environment can be achieved with pipelining. Today, pipelining is the key technique used to make fast CPUs. The time required to move an instruction one step down the pipeline is a processor cycle. The length of the processor cycle is determined by the time required for the slowest pipe stage. If the stages are perfectly balanced, then the time per instruction on the pipelined processor is equal to

Time per instruction on unpipelined machine / Number of pipe stages

Pipelining yields a reduction in the average execution time per instruction. It is an implementation technique that exploits parallelism among the instructions in a sequential instruction stream. A RISC processor basically consists of five pipeline stages. Figure 2 deals with the various stages associated with it. Pipeline overhead arises from the combination of pipeline register delay and clock skew. The pipeline registers add setup time plus propagation delay to the clock cycle. The major hurdles of pipelining are structural, data, and control hazards. We concentrate mainly on control hazards, which arise from the pipelining of branches and other instructions that change the PC. The structure of a standard program [2] shows that around 56% of instructions are conditional branches, 10% are unconditional branches, and 8% follow the call-return pattern. Hence the design of the pipeline structure must take care of the non-sequential execution of the program. Control hazards can cause a greater performance loss for the MIPS pipeline than data hazards do. When a branch (conditional or unconditional) is executed, it may or may not change the PC to something other than its current value. The simplest scheme to handle branches is to freeze or flush the pipeline, holding or deleting any instructions after the branch until the branch destination is known. This destroys the parallelism achieved in the system.
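The relation between pipeline depth and branch stalls can be made concrete with a short calculation. This is a sketch under stated assumptions: the function names are hypothetical, the ideal CPI is taken as 1, and the one-cycle penalty per control-transfer instruction is an assumed value, not a figure from the paper. Only the instruction mix (56%, 10%, 8%) comes from the text above.

```python
def pipelined_time_per_instruction(unpipelined_time, stages):
    """Ideal time per instruction with perfectly balanced stages."""
    return unpipelined_time / stages


def effective_cpi(branch_fraction, penalty_cycles, base_cpi=1.0):
    """Cycles per instruction once branch stall cycles are added."""
    return base_cpi + branch_fraction * penalty_cycles


def pipeline_speedup(stages, branch_fraction, penalty_cycles):
    """Speedup over the unpipelined machine: depth / (1 + stall cycles)."""
    return stages / effective_cpi(branch_fraction, penalty_cycles)


# Fraction of instructions that change the PC, from the mix quoted above.
control_fraction = 0.56 + 0.10 + 0.08
# Assumed: five stages and a one-cycle stall per control-transfer instruction.
cpi = effective_cpi(control_fraction, penalty_cycles=1)
speedup = pipeline_speedup(5, control_fraction, penalty_cycles=1)
```

With these assumed numbers the CPI rises from 1 to about 1.74 and the five-stage speedup falls from 5 to roughly 2.87, which shows why freezing or flushing on every branch gives up much of the benefit of pipelining.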
If branching can be predicted early to some extent, we can overcome this delay: the required instruction can be brought into the cache early. Prefetching can be done in either software or hardware. In software prefetching, the compiler inserts prefetch code into the program; because the actual memory capacity is not known to the compiler, this can lead to some harmful prefetches. Hardware prefetching, instead of inserting prefetch code, uses extra hardware that is exercised during execution. The most significant source of lost performance is the process waiting for the next instruction to become available. In both cases, some instructions have to be flushed from the pipe. By combining software prefetching with branch handling, we can overcome this problem. Whenever a branch is encountered, the system performs a context switch, and the pipe is filled with instructions from the next process.


Fig. 1. The pipeline can be thought of as a series of data paths shifted in time

5. SYSTEM DESIGN

5.1 User Modeling Process

The user model life cycle is defined by a sequence of actions performed by an adaptive web-based system during the user's interaction. The process is depicted in Fig. 3. We separate the user modelling process into three distinct stages, as defined below.

1. Data collection, when an adaptive system collects various user-related data such as forms and questionnaires filled in by the user, logs of user activity within the system, feedback given by the user on a particular domain item, and information about the user's context (device, browser, bandwidth, etc.). All this information represents observable facts and is stored in an evidence layer of the user model. The facts can also be supplied from external sources; for instance, we can acquire information about a user's relationships with other users from an external social networking application.

2. User model inference, when an adaptive system processes the data from the evidence layer into higher-level user characteristics such as interests, preferences, or personal traits. It is important to mention that these are only estimates of the real user characteristics.

3. Adaptation and personalization, which is the actual use of a user model (both the evidence layer and the higher-level characteristics layer) to provide a user with a personalized experience when using an adaptive system. The success rate of the supplied adaptation effect gives us feedback about the accuracy of the underlying user model.

Fig. 2. User Model Life Cycle

The process has a cyclic character, as an adaptive system continuously acquires new data about the user (influenced by the adaptation already performed and thus by a previous version of the user model) and continuously refines the user model to better reflect reality and thus to serve as a better base for personalization. An adaptive system must handle specific problems related to each step of the process. In data collection, we must find a balance between user privacy and the amount and nature of the data required to deliver successful personalization. We must find sources of data that do not place an additional burden on the user, and design the data collection process so that it is unobtrusive and runs automatically in the background. The main challenge of user model inference (apart from the actual transformation of user action history into user characteristics) is the maintenance of user characteristics, which should keep pace with the user's personal development, changing interests, and knowledge.

6. ARCHITECTURAL SUPPORT

The miss stream is not sufficient to cover many branch-related misses; we need to characterize the access stream. For that we construct a working-set predictor, which works in several stages. First, we observe the access stream of a process and capture its patterns and behavior. Second, we summarize this behavior and transfer the summary data to the stack region. Third, we apply the summary via a prefetch engine to the target process, to rapidly fill the caches in advance of the migrated process. The following set of capture engines is required to perform the migration process. Each process enters the pipeline for execution; once an instruction reaches the decoding stage, the processor is able to identify the branching condition. By that time, the next instruction is already in the fetch cycle.

6.1 Memory locker

For each process we add a memory locker, a specialized unit that records selected details of each committed memory instruction. This unit passively observes the current process as it executes, but does not directly affect execution. If a conservatively implemented memory locker becomes swamped with input, records may safely be discarded; this only degrades the accuracy of later prefetching. The memory locker can be implemented with small associative memory tables, each with its own control logic. Each table within the memory locker targets a specific type of access pattern:

Next-block: detects sequential block accesses; entries are advanced by one cache block on a hit.

Target PC: tracks individual instructions that walk through memory in fixed-size steps.

Same object: captures accesses to ranges of memory from a common base address, as is common for structure and object access code. This takes advantage of the common base+offset addressing method, tracking minimum and maximum offsets for each base address, while ignoring accesses relative to the global pointer or stack pointer.

Block BTR: captures taken branches and their targets, recording the most recent inbound branch for each target. The Block BTR is block-aligned, allowing a greater amount of the instruction working set to be characterized at a given table size.

Return stack: maintains a shadow copy of the processor return stack, prefetching blocks of instructions near the top few control frames.
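Two of the tables above can be sketched in software to show what each one records. This is an illustrative model only, not the hardware design: the class names, the 64-byte block size, the four-entry capacity, and the oldest-first replacement policy are all assumptions.

```python
from collections import OrderedDict

BLOCK_SIZE = 64  # bytes per cache block (assumed)


class NextBlockTable:
    """Tracks sequential block runs; an entry advances one block on a hit."""

    def __init__(self, capacity=4):
        self.capacity = capacity
        # expected next block -> (first block of the run, run length)
        self.entries = OrderedDict()

    def observe(self, address):
        block = address // BLOCK_SIZE
        if block in self.entries:
            # Hit: the run continues, so advance the entry by one block.
            start, length = self.entries.pop(block)
            self.entries[block + 1] = (start, length + 1)
        elif block + 1 in self.entries:
            return  # repeat access to the block at the tail of a run
        else:
            if len(self.entries) >= self.capacity:
                self.entries.popitem(last=False)  # evict oldest (assumed)
            self.entries[block + 1] = (block, 1)

    def runs(self):
        return sorted(self.entries.values())


class BlockBTR:
    """Records the most recent inbound taken branch per block-aligned target."""

    def __init__(self, capacity=4):
        self.capacity = capacity
        self.entries = OrderedDict()  # target block -> source block

    def observe_taken_branch(self, branch_pc, target_pc):
        target_block = target_pc // BLOCK_SIZE
        self.entries.pop(target_block, None)  # newer branch replaces older
        if len(self.entries) >= self.capacity:
            self.entries.popitem(last=False)
        self.entries[target_block] = branch_pc // BLOCK_SIZE


table = NextBlockTable()
for addr in (0, 64, 128, 4096):
    table.observe(addr)

btr = BlockBTR()
btr.observe_taken_branch(0x100, 0x400)
btr.observe_taken_branch(0x200, 0x410)  # same target block, newer branch
```

Here three sequential accesses collapse into one run entry, and the second branch overwrites the first because both land in the same target block. Evicting an entry when a table is full mirrors the point that discarded records only reduce later prefetch accuracy.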

6.2 Target generator

The target generator activates when a core is signaled to branch. Our baseline core design assumes hardware support for branching; at halt time, the core collects and stores the register state of the process being halted. While the register state is being transferred, the target generator reads through the tables populated by the memory locker and prepares a compact summary of the process that is moving towards the running state. This summary is transmitted after the architected process state, and is used to prefetch the working set of the ready process when it resumes in the running state. During summarization, each table entry is inspected to determine its usefulness by observing whether the process arrives at the ready state from the start state or from the waiting state. Table entries are summarized for transfer by generating a sequence of block addresses from each, following the behavior pattern captured by that table. We encode each sequence with a simple linear-range encoding, which tells the prefetcher to fetch length cache blocks, starting at start-address.
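The linear-range encoding can be sketched as follows. This is a minimal illustration, assuming that block addresses are expressed as block numbers and that a record is a (start_address, length) pair; the function name is hypothetical and the record layout used in the actual hardware is not specified in the text.

```python
def encode_ranges(block_addresses):
    """Collapse a sequence of block numbers into (start_address, length) records.

    Consecutive blocks extend the current record; any other block starts a
    new one. The order of the input sequence is preserved.
    """
    records = []
    for block in block_addresses:
        if records and block == records[-1][0] + records[-1][1]:
            start, length = records[-1]
            records[-1] = (start, length + 1)  # extend the current range
        else:
            records.append((block, 1))         # begin a new range
    return records


summary = encode_ranges([3, 4, 5, 10, 11, 7])
```

Six block addresses are reduced to three records here, which is the kind of compaction that keeps the summary small enough to transmit alongside the register state.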

6.3 Target-driven prefetcher

Rounding out our working-set switching hardware is the target-driven prefetcher. When a previously pre-empted branch is activated on a core, its summary records are read by the prefetcher. Each record is expanded into a sequence of cache block addresses, which are submitted for prefetching as bandwidth allows. While the main execution pipeline reloads register values and resumes execution, the prefetcher independently begins to prefetch a likely working set. Prefetches search the entire memory hierarchy and contend for the same resources as demand requests. Once the register state has been loaded, the process resumes execution and competes with the prefetcher for memory resources. We model the overlap among transfers of register state, table summaries, prefetches, and the service of demand misses. We model the prefetch engine as submitting virtually addressed memory requests at the existing ports of the caches, using the existing memory hierarchy for service. While these requests compete with the process itself, we find that most prefetching immediately after switching occurs while the process would otherwise be stalled for memory access; thus we do not require additional cache ports.
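The expansion of summary records into bandwidth-limited prefetch requests can be sketched as below. This is an assumption-laden model rather than the simulated hardware: records are taken to be (start_address, length) pairs of block numbers, the cache is a plain set, and `blocks_per_cycle` is a hypothetical stand-in for available bandwidth.

```python
def expand_records(records):
    """Yield block addresses for each (start_address, length) record, in order."""
    for start, length in records:
        for offset in range(length):
            yield start + offset


def issue_prefetches(records, cache, blocks_per_cycle=2):
    """Group non-resident blocks into per-cycle batches limited by bandwidth.

    Blocks already in `cache` are skipped; issued blocks are added to it.
    """
    batches, current = [], []
    for block in expand_records(records):
        if block in cache:
            continue  # already resident, no request needed
        cache.add(block)
        current.append(block)
        if len(current) == blocks_per_cycle:
            batches.append(current)
            current = []
    if current:
        batches.append(current)  # final, partially filled batch
    return batches


cache = {4}
batches = issue_prefetches([(3, 3), (10, 2)], cache, blocks_per_cycle=2)
```

Skipping resident blocks keeps the prefetcher from spending bandwidth that demand requests from the resumed process would otherwise use.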

7. ANALYSIS AND RESULT

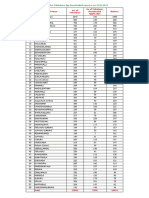

The baseline for comparison in our simulation results is the prediction rate of each benchmark when executed as a single process and run to completion with no intervening process. The program is executed for programs of different sizes. Our experiment examines the effect of the cache line size together with the number of cache blocks in the cache memory. Cache management uses the first-in, first-out (FIFO) method.
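The effect of line size and block count under FIFO replacement can be explored with a small model. This sketch is not the simulator used for the results reported here: the function name, the fully associative organization, and the example address stream are assumptions for illustration.

```python
from collections import deque


def fifo_hit_rate(addresses, num_blocks, line_size):
    """Hit rate of a fully associative cache with FIFO replacement."""
    queue = deque()    # resident line numbers, oldest first
    resident = set()   # same lines, for fast membership tests
    hits = 0
    for addr in addresses:
        line = addr // line_size
        if line in resident:
            hits += 1
            continue
        if len(queue) == num_blocks:
            resident.discard(queue.popleft())  # evict the oldest line
        queue.append(line)
        resident.add(line)
    return hits / len(addresses) if addresses else 0.0


# Sixteen sequential 4-byte instruction fetches (an assumed stream).
stream = list(range(0, 64, 4))
small_lines = fifo_hit_rate(stream, num_blocks=2, line_size=16)
large_lines = fifo_hit_rate(stream, num_blocks=2, line_size=32)
```

For this sequential stream the larger line size gives the higher hit rate, since each miss brings in more of the instructions that follow.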

Fig. 3. Lines of code Vs. Time Graph


8. CONCLUSIONS

This report sheds light on the applicability of instruction cache prefetching schemes in current and next-generation microprocessor designs, where memory latencies are likely to be longer. A new prefetching algorithm is examined that was inspired by previous studies of the effect of speculation down mispredicted paths. Data prefetching has been used in the past as one of the mechanisms to hide memory access latencies, but prefetching requires accurate timing to be effective in practice. This timing problem has a greater effect in the case of multiple processors, where it is handled with the help of the target-driven prefetcher. Designing an effective prefetching algorithm that minimizes the prefetching overhead remains a big challenge and needs more thought and effort.

REFERENCES

[1] A.J. Smith, Cache memories, ACM Computing Surveys, pp. 473-530, Sep. 1982.
[2] ECE 4100/6100 Advanced Computer Architecture, Lecture 5: Branch Prediction, Prof. Hsien-Hsin Sean Lee, School of Electrical and Computer Engineering, Georgia Institute of Technology.
[3] A. Brown and D. M. Tullsen. The shared-thread multiprocessor. In 21st International Conference on Supercomputing, pages 73-82, June 2008.
[4] Abdel-Shafi, H. Hall, J. Adve. An Evaluation of Fine Grain producer-initiated communication in Cache-Coherent, proceeding of the 3rd HPCA, IEEE Computer Society Press.
[5] Aleksandar Milenkovic, Veljko Milutinovic. Lazy prefetching. Proceedings of the IEEE HICSS, 1998.
[6] I.K. Chen, C.C. Lee, and T.N. Mudge. Instruction prefetching using branch prediction information. In International Conference on Computer Design, pages 593-601, October 1997.
[7] Mahmut Kandemir, Yuanrui Zhang, Ozcan Ozturk. Adaptive prefetching for shared cache based chip multiprocessors. EDAA, 2009.
[8] Jim Pierce (Intel Corporation), Trevor Mudge (University of Michigan). Wrong-Path Instruction Prefetching. IEEE, 1996.
[9] P. Hsu, Design of the TFP microprocessor, IEEE Micro, 1993.
[10] J. Pierce, Cache Behavior in the Presence of Speculative Execution: The Benefits of Misprediction, Ph.D. Thesis, The University of Michigan, 1995.
[11] G. Reinman, T. Austin, and B. Calder. A scalable front-end architecture for fast instruction delivery. In 26th Annual International Symposium on Computer Architecture, May 1999.
[12] Lawrence Spracklen, Yuan Chou, and Santosh G. Abraham. Effective instruction prefetching in chip multiprocessors for modern commercial applications. Proceedings of the 11th Intl. Symposium on HPCA, 2005.
[13] D. Kongetira, K. Aingaran, and K. Olukotun. Niagara: A 32-way multithreaded SPARC processor. IEEE Micro, 2005.
[14] J. D. Collins, S. Sair, B. Calder, and D. M. Tullsen. Pointer cache assisted prefetching. In 35th International Symposium on Microarchitecture, pages 62-73, Nov. 2002.
[15] R. S. Chappell, J. Stark, S. P. Kim, S. K. Reinhardt, and Y. N. Patt. Simultaneous subordinate microthreading (SSMT). In 26th International Symposium on Computer Architecture, pages 186-195, M
