MirrorView/A and MirrorView/S - 1

Copyright 2010 EMC Corporation. Do not Copy - All Rights Reserved.

The objectives for this module are shown here. Please take a moment to read them.

The objectives for this lesson are shown here. Please take a moment to read them.

MirrorView/S and MirrorView/A are optional software supported on the CX4 series CLARiiON, as

well as many previous models.

The design goal of MirrorView/S is to allow speedy recovery from a disaster, with both a low Recovery Point Objective (RPO) and a low Recovery Time Objective (RTO). To accomplish this, MirrorView/S uses synchronous mirroring, and does not allow direct host access to secondary images.

There may be one or two secondary images per mirror.

MirrorView/A has a similar design goal, but allows lower cost, and longer distance connectivity

options in environments where some data loss is acceptable. It uses an asynchronous interval-based

update mechanism to accomplish this.

Supported connection topologies include direct connect, SAN connect, and WAN connect (when appropriate FC/IP devices are used), as well as iSCSI where the SPs have iSCSI ports.

MirrorView/S uses synchronous updates, and the secondary is therefore identical to the primary during

normal operation. Bear in mind that a primary can lose communication with the secondary, at which

point the secondary is unreachable, and cannot be updated, a state known as the fractured state. In this

case, a tracking mechanism logs areas of disk where there may be differences between the primary and

secondary images (see the Fracture Log discussion).

MirrorView/A, because of its asynchronous nature, seldom has the data on the secondary image

identical to that on the primary image. There is also a requirement to track differences, so that they

may be shipped to the secondary image at regular intervals. Internally, the SAN Copy delta set

mechanism is used to track changes to the primary image, and to ship those changes to the secondary

image as required. Before the updates are shipped, a protective snapshot of the secondary ensures that,

in the event of a failure, the data state may revert to a previous known good state.
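
The asynchronous update cycle described above can be sketched as a toy model. This is an illustration only, not how FLARE implements it: the class and method names (`AsyncMirror`, `host_write`, `update`) are hypothetical, LUNs are modeled as dictionaries of block number to contents, and the automatic revert on a failed transfer is a simplification of the rollback behavior.

```python
class AsyncMirror:
    """Toy model of an interval-based asynchronous mirror (illustrative only)."""

    def __init__(self):
        self.primary = {}     # block number -> data on the primary image
        self.secondary = {}   # block number -> data on the secondary image
        self.delta = set()    # blocks changed on the primary since the last update

    def host_write(self, block, data):
        # The write lands on the primary only; the change is tracked for later.
        self.primary[block] = data
        self.delta.add(block)

    def update(self, fail_after=None):
        """Ship tracked changes to the secondary; revert if the transfer fails.

        fail_after is a hypothetical test hook: the transfer "fails" once that
        many blocks have been shipped.
        """
        snapshot = dict(self.secondary)        # protective snapshot of the secondary
        shipped = 0
        for block in sorted(self.delta):
            if fail_after is not None and shipped >= fail_after:
                self.secondary = snapshot      # back to the previous known good state
                return False
            self.secondary[block] = self.primary[block]
            shipped += 1
        self.delta.clear()
        return True
```

Between updates the secondary lags the primary, which is why the secondary is seldom identical to the primary; a failed update leaves the tracked changes in place so that a later cycle can ship them.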

Note the definitions below:

Primary storage system

A CLARiiON that serves mirrored primary data to a production host.

Secondary storage system

A CLARiiON that contains a mirrored secondary image of primary storage system data.

Secondary storage systems are typically connected to standby hosts.

Bidirectional mirroring

A Secondary storage system can also be a Primary storage system for another mirror.

Note that the terms primary storage system and secondary storage system are relative to each

mirror. Because MirrorView/S and MirrorView/A support bidirectional mirroring, a storage system

which hosts primary images for one or more mirrors may also host secondary images for one or more

other mirrors.

This functionality is MirrorView/S-specific; MirrorView/A neither needs nor implements the

logging mechanism described here.

In normal operation, data is committed to the secondary image as part of the I/O processing

before the system acknowledges the write back to the host. However, if a secondary LUN is

unreachable, then MirrorView/S marks the secondary image as fractured and records the write

on the primary LUN in a fracture log. The fracture log tracks regions as they change on the

primary LUN for as long as the secondary LUN is unreachable. When the secondary LUN

returns to service, the secondary image must be synchronized with the primary.

Synchronization is the process of committing data from the primary LUN to the secondary

LUN, as recorded in the fracture log. When synchronization finishes, the data on both primary

and secondary LUNs is consistent.

The use of a fracture log avoids the need for a full copy of the entire primary image, resulting

in substantial time savings. I/O from the host continues during synchronization, so the host

experiences no difference in running operations, apart from a possible degradation in

performance. A tunable parameter is available to lessen this performance impact if it is

unacceptable.
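
The idea behind the fracture log can be illustrated with a small bitmap that marks which regions of the primary LUN were written while the secondary was unreachable. This is a sketch under stated assumptions: the class name `FractureLog`, its methods, and the region size are hypothetical, and the text does not say what granularity the real log uses.

```python
class FractureLog:
    """Toy bitmap of primary-LUN regions written while the secondary is fractured."""

    def __init__(self, lun_blocks, region_blocks):
        self.region_blocks = region_blocks            # assumed tracking granularity
        n_regions = -(-lun_blocks // region_blocks)   # ceiling division
        self.dirty = [False] * n_regions

    def record_write(self, start_block, n_blocks):
        # Mark every region touched by the write as changed.
        first = start_block // self.region_blocks
        last = (start_block + n_blocks - 1) // self.region_blocks
        for region in range(first, last + 1):
            self.dirty[region] = True

    def regions_to_sync(self):
        # Only these regions need copying when the secondary returns.
        return [i for i, is_dirty in enumerate(self.dirty) if is_dirty]

    def clear(self):
        # Called once synchronization has finished.
        self.dirty = [False] * len(self.dirty)
```

Because only the marked regions are copied during synchronization, the cost of recovery scales with the amount of data changed during the fracture rather than with the size of the LUN.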

Enhancements in FLARE R26 lessened the impact of using the Write Intent Log (WIL); its use is now recommended for as many mirrors as will support it in the storage system. With the release of the CX4 array and FLARE R28, the WIL is enabled by default on mirror creation. Another change is that all mirrors are now allowed to use the WIL, instead of just half the mirror limit as with previous versions. There is no option in the GUI to disable the WIL; to create a mirror without one, use the nowriteintentlog option at mirror creation.

Synchronization is the process used to ensure that secondary images are rapidly made identical with

primary images (or, in the case of MirrorView/A, with point in time copies of primary images) after

initial creation of the mirror, or after the secondary becomes reachable after having been fractured. The

fractured condition is one in which the secondary image is not reachable from the primary image,

either because of a failure in the environment or because of administrative action.

MirrorView/A performs incremental synchronization operations at intervals prescribed by the user,

configured when the mirror is created.

MirrorView/S and MirrorView/A use dedicated ports on supported storage systems - the specific port

used on each SP depends on the CLARiiON model, and whether FC or iSCSI is being used for

replication. The MirrorView port may be shared with host I/O (though this may be undesirable in some

environments), but may not be shared with SAN Copy.

The three mirror states are inactive, active, and attention. These states vary in the ways that they respond to read and write requests from a host. Transitions between the states are either automatic or under administrative control.

Note that while a mirror is in any state, normal administrative operations can occur, such as adding or

deleting a secondary image.

Inactive: The default state for a newly created mirror. An inactive mirror rejects all host data access. This restriction ensures an orderly transition for such operations as the destruction of the mirror.

Active: The normal state for a mirror in operation. Allows full read and write host access.

Attention: The state that indicates a problem exists somewhere in the mirror and is preventing a transition to the active state. For example, a mirror is placed in this state if the number of secondary images falls below an administrator-defined minimum value. A mirror in the attention state is flagged as faulted in Manager, but still allows all host data access.
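
The three mirror states and their effect on host access can be summarized in a few lines of Python. This is a minimal sketch of the definitions above; the names `MirrorState`, `host_io_allowed`, and `operating_state` are hypothetical and do not correspond to any Navisphere or FLARE interface.

```python
from enum import Enum


class MirrorState(Enum):
    INACTIVE = "inactive"
    ACTIVE = "active"
    ATTENTION = "attention"


def host_io_allowed(state):
    # Per the definitions above, only an inactive mirror rejects host data access.
    return state is not MirrorState.INACTIVE


def operating_state(secondary_images, minimum_required):
    # A mirror in operation is placed in Attention when the number of secondary
    # images falls below the administrator-defined minimum.
    if secondary_images < minimum_required:
        return MirrorState.ATTENTION
    return MirrorState.ACTIVE
```

For example, a mirror with no secondary image and a required minimum of one would be reported as Attention, yet host I/O to the primary would still be allowed.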

If a Secondary Image is fractured, the primary storage system uses a heartbeat every 10 seconds to

determine when the secondary becomes reachable again. This may be compared to a ping in the

networking environment.

The five image (or data) states are synchronized, consistent, synchronizing, out-of-sync, and rolling back. These states represent the relationships between the primary image and a secondary image.

Synchronized: The state in which a secondary image is currently an exact byte-for-byte duplicate of the primary image. It implies that there are no outstanding write requests from a host that have not been committed to stable storage on both the primary and secondary images. Transition from this state can occur to the consistent state.

Consistent: The state in which a secondary image is a byte-for-byte duplicate of the primary image either now or at some point in the past. Transition from this state can occur to either the synchronizing state or the synchronized state.

Synchronizing: The state in which a secondary image is being updated from the primary image. The update can be a full byte-for-byte copy of the data or a partial copy based on either a fracture log or a write intent log. Transition from this state can occur to the out-of-sync or consistent states.

Out-of-sync: The state in which the data on a secondary image has no known relationship with the data on a primary image. This is the case when you add a secondary image to a pre-existing mirror. Transition from this state can occur to the synchronizing state.

Rolling back (MirrorView/A only): The state in which the protective SnapView session on the secondary image is rolling back to return the secondary image to a known good, consistent state.
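
The transitions listed for the image states can be captured as a small lookup table. This is a sketch of only what the text states: the names `ALLOWED_TRANSITIONS` and `can_transition` are hypothetical, and the rolling back state is left out because its transitions are not described here.

```python
# Allowed image-state transitions, as described in the list above.
ALLOWED_TRANSITIONS = {
    "synchronized": {"consistent"},
    "consistent": {"synchronizing", "synchronized"},  # back to fully in sync
    "synchronizing": {"out-of-sync", "consistent"},
    "out-of-sync": {"synchronizing"},
}


def can_transition(current, target):
    """Return True if the text above lists a transition from current to target."""
    return target in ALLOWED_TRANSITIONS.get(current, set())
```

Read this way, a newly added secondary image (out-of-sync) can only reach the synchronized state by passing through synchronizing and then consistent.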

Along with an image state, an image has an image condition that provides more information.

Normal: The normal processing state.

Admin Fractured: The administrator has fractured the mirror, or a media failure (such as a failed sector or a bad block) has occurred. An administrator must initiate a synchronization.

System Fractured: This occurs when the system fractures the mirror. For example, if the storage system containing the secondary image fails, the secondary image is system fractured. Similarly, if the link between the primary and secondary storage systems fails, the secondary image is also system fractured. In either event, once the issue has been resolved and the secondary storage system is back in communication with the primary storage system, the secondary image is automatically resynchronized if the automatic recovery policy is enabled.

The MirrorView/S (and/or MirrorView/A) software must be loaded on both storage systems, regardless

of whether or not the customer wants to implement bi-directional mirroring.

The secondary LUN must be the same size as the primary LUN, though not necessarily the same RAID

type.

Hosts cannot attach to an active secondary LUN while it is configured as a secondary mirror image. If

you promote the secondary mirror to be the primary mirror image, as seen in a disaster recovery

scenario, or if you remove the secondary LUN, thereby turning it into a FLARE LUN, then it may be

accessed by a host.

A point-in-time copy of the secondary image, either a Snapshot or a Clone, may be made at any time

and be presented to a secondary host.

MirrorView/A makes extensive use of other CLARiiON Replication Software for its operation.

SAN Copy, in the form of Incremental SAN Copy, performs the data transfer for MirrorView/A. It, in

turn, makes use of SnapView (on the primary storage system) to track updates to the Source LUN

(primary image), so that they can be copied to the secondary storage system as scheduled.

SnapView is used on the secondary storage system to make a safety snapshot prior to starting an

update cycle. In this way, if the update data transfer should fail for some reason, and the primary site

fails before the update can be completed, the secondary image can be rolled back to a (previous)

known good state before it is promoted.

When a license for MirrorView/A is installed, the CLARiiON Replication Software which it uses will

automatically be licensed for system (i.e. MirrorView/A) use. If the user wishes to make use of the

functionality of the additional software, it must be separately licensed.

Provisioning of adequate space in the Reserved LUN Pool on the primary and secondary storage

systems is vital to the successful operation of MirrorView/A. The exact amount of space needed may

be determined in the same manner as the required space for SnapView is calculated.

MirrorView CLARiiONs must be connected physically, by zoning for FC or creation of connection

sets for iSCSI, and logically, via the GUI or the CLI. Once that task is complete, other operations will

be allowed.

The first of these tasks is the creation of a remote mirror.

Starting with FLARE release 29, a new set of replication roles was developed to provide the customer with greater control of CLARiiON array replication. These roles are:

Local Replication: provides SnapView operations only (no recovery); this role restricts a user to starting and stopping SnapView operations.

Replication: provides SnapView, MirrorView, and SAN Copy operations (no recovery).

Replication Recovery: provides SnapView, MirrorView, and SAN Copy operations plus recovery.

These replication roles can have local or global scope. In order to assign a global scope to a user, all

systems in the Domain must be running FLARE release 29 or above. LDAP role mappings are also

supported. Replication roles can see (but not manage) objects outside of their control. This new feature

facilitates coordination of user access to data and operations. It also introduces a finer granularity of

security.

Three new roles were introduced in FLARE 29 to provide fine-grained access control of replication

tasks:

Local Replication: SnapView operations only (no recovery)

Replication: SnapView, MirrorView, and SAN Copy operations (no recovery)

Replication Recovery: SnapView, MirrorView, and SAN Copy operations plus recovery
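
The three roles can be modeled as a simple permission table. This is an illustrative sketch only: the dictionary layout and the function `is_permitted` are hypothetical, and which specific operations count as "recovery" is not defined in this text, so that is left as a flag supplied by the caller.

```python
# Role -> products covered and whether recovery operations are included.
ROLE_PERMISSIONS = {
    "Local Replication": {"products": {"SnapView"}, "recovery": False},
    "Replication": {
        "products": {"SnapView", "MirrorView", "SAN Copy"},
        "recovery": False,
    },
    "Replication Recovery": {
        "products": {"SnapView", "MirrorView", "SAN Copy"},
        "recovery": True,
    },
}


def is_permitted(role, product, recovery_operation=False):
    """Return True if the role covers the product and, if needed, recovery."""
    perms = ROLE_PERMISSIONS[role]
    if product not in perms["products"]:
        return False
    return perms["recovery"] or not recovery_operation
```

Under this model, a Local Replication user is refused any MirrorView operation, while a Replication user can run MirrorView operations but not those flagged as recovery.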

Global Replication roles are only supported in a complete FLARE 29 or higher environment, meaning all arrays in the domain must be at FLARE 29 or higher. Global Replication roles (or LDAP mappings thereof) cannot be created in domains that contain pre-FLARE 29 systems; you must first remove all pre-FLARE 29 systems from the domain. Likewise, pre-FLARE 29 systems cannot be added to domains that define global replication accounts; you would first have to remove all global replication roles and LDAP replication mappings.

Note: All previous security roles are still in effect in FLARE 29 and higher.

The chart shown describes the Replication Roles for MirrorView Operations.

Shown at the top of the slide is a list of replication limits for CLARiiON. Note the limits have been

increased for R29 and R30.

The MirrorView/A limit increased to the maximum array limit of 256 asynchronous mirrors. SnapView snapshot limits doubled in R29/R30. All Consistency Group member counts increased to 32/64, respectively. Consistency groups help with crash consistency at the array level, and also with application consistency when using Replication Manager (which leverages array consistency groups).

The bottom of the slide shows the replication limits that did not increase.

10 Gb/s Ethernet delivers significantly greater bandwidth than 1 Gb/s Ethernet, and 8 Gb/s Fibre Channel delivers twice the bandwidth of today's 4 Gb/s Fibre Channel deployments. MirrorView ports can leverage the greater bandwidth.

The nature of the I/O (sequential vs. random, large vs. small block) affects how much of an increase in IOPS can be achieved. Replication products such as MirrorView can leverage the increased bandwidth to process more IOPS. For example, because MirrorView/A I/O is more sequential, it benefits more: mirror updates, full synchronizations, and resynchronizations take much less time.
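
A rough feel for the effect of link speed on synchronization time comes from dividing the amount of data by the nominal line rate. The function below is a back-of-the-envelope sketch, not a sizing tool: the name `ideal_transfer_seconds` is hypothetical, and the result is a lower bound that ignores encoding and protocol overhead, latency, and array load.

```python
def ideal_transfer_seconds(size_gib, link_gbps):
    """Lower bound on transfer time: payload bits divided by the nominal line rate.

    size_gib  : amount of data to ship, in GiB (2**30 bytes)
    link_gbps : nominal link speed in Gb/s (10**9 bits per second)
    """
    bits = size_gib * 1024**3 * 8
    return bits / (link_gbps * 1e9)
```

For a given amount of data, `ideal_transfer_seconds(size, 8)` is half of `ideal_transfer_seconds(size, 4)`, and the 10 Gb/s figure is one tenth of the 1 Gb/s figure; real gains depend on how sequential the workload is.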

Note: MirrorView ports are set in FLARE when the array is initialized and cannot be changed without destroying the configuration.

Starting with CLARiiON FLARE Release 29, support for Virtual Provisioning with MirrorView enables remote replication protection of virtually provisioned LUNs, reducing customers' TCO. Thin pool LUN to thin pool LUN replication makes efficient use of remote replication resources. However, you can replicate any traditional pool or RAID Group LUN to a thin LUN, and the replica can become fully provisioned after a full sync. If you just promote a secondary thin pool LUN (without a sync) that was in a MirrorView relationship with a traditional LUN, the LUN stays thin. You can also replicate a thin pool LUN to a traditional LUN; the non-provisioned space in the thin LUN is then zero filled, in effect negating any space-saving advantage of thin LUNs.

As a best practice, pairing thin pool LUNs (primary and secondary) in MirrorView works best and gives predictable results when synchronizing, resynchronizing, or promoting. Combining thin LUNs with traditional pool or RAID Group LUNs in a MirrorView set may give undesirable results. In the first scenario, with FLARE release 29 or higher committed on both sides, you have a thin pool LUN primary and a traditional LUN secondary, and you promote the secondary. The thin pool LUN's unallocated areas are then zero filled, and any space gain from being thin is lost. In the second scenario, FLARE 29 or higher is not committed on the secondary side, so no MirrorView relationship can exist between thin LUNs and pre-release 29 LUNs.

To avoid a pre-release condition, FLARE 29 or higher must be installed and committed on all arrays participating in MirrorView relationships.

Virtual Provisioning & MirrorView considerations are shown here. Please take a moment to review

them.

On this slide, a traditional LUN can be either a fully provisioned pool LUN or a RAID Group LUN. Thin LUNs are pool LUNs.

Listed are the valid combinations for MirrorView replication with Virtual Provisioning in Release 29. These combinations are enforced by FLARE. When adding a secondary thin LUN (or synchronizing existing mirrors), confirm that the thin pool has enough capacity for the synchronization to complete. If there is not enough space to write new data, an administrative fracture caused by a media failure results.

Starting with FLARE Release 29, finer tracking granularity reduces the amount of data to be resynchronized and shortens recovery time following a fracture.

The objectives for this lesson are shown here. Please take a moment to read them.

Consistency Groups allow all LUNs belonging to a given application, usually a database, to be treated

as a single entity, and managed as a whole. This helps to ensure that the remote images are consistent,

i.e. all made at the same point in time. As a result, the remote images are always restartable copies of

the local images, though they may contain data which is not as new as that on the primary images.

It is a requirement that all the local images of a Consistency Group be on the same CLARiiON, and

that all the remote images for a Consistency Group be on the same remote CLARiiON. All information

related to the Consistency Group will be sent to the remote CLARiiON from the local CLARiiON.

Only one remote image is allowed for any mirror in a Consistency Group. The operations which can be

performed on a Consistency Group match those which may be performed on a single mirror, and will

affect all mirrors in the Consistency Group. If, for some reason, an operation cannot be performed on

one or more mirrors in the Consistency Group, then that operation will fail, and the images will be

unchanged.

For the CX3 series systems, Consistency Groups are available for MirrorView/A and MirrorView/S, and the limit for each (16 groups) is independent of the other.

For the CX4 series systems, the maximum number of Consistency Groups per storage system is 64.

Managing a Consistency Group is simple: create and name the group, specify synchronous or asynchronous mirroring, and add member mirrors. A Consistency Group of the same name is automatically created on the secondary CLARiiON, and the secondary images are added to it as members.

Note: MirrorView/A source limits are shared with SAN Copy incremental-session and SnapView

snapshot source limits. Please see the MirrorView Release Notes for the latest limits.

The Synchronized and Consistent states are similar to states of the same name, applied to individual

mirrors. Synchronized means all the secondary images are in the Synchronized state. Consistent means

all the secondary images are either in the Synchronized or Consistent state, and at least one is in the

Consistent state.

The Quasi-Consistent state is unique to Consistency Groups; the group is partially consistent, meaning that at least one member is consistent and at least one member is not. This status arises when a new member that is not consistent with the existing members is added to the consistency group, which automatically starts an update. After the update completes, all member mirrors are consistent, and the consistency group is again consistent.

The Synchronizing status means that at least one mirror in the group is in the Synchronizing state, and

no member is in the Out-of-Sync state.

The Out-of-Sync status shows that the group may be fractured, waiting for synchronization (either

automatic or via the administrator), or in the synchronization queue. Administrative action may be

required to return the consistency group to having a recoverable secondary group.

The Scrambled and Incomplete statuses for Consistency Groups are both indications that errors have

occurred. Recovery may involve the removal of group members, the recreation of secondary images,

and a full synchronization.

The Scrambled status means there is a mixture of primary and secondary images in the consistency group. During a promote, it is common for the group to be in the Scrambled state. The Incomplete status means that some, but not all, of the secondary images are missing, or that mirrors are missing. This is usually due to a failure during group promotion. The group may also be scrambled.

The Rolling Back state applies to a secondary group promoted after a failed update. The sequence is

illustrated in the next slide sequence. In the Rolling Back status, a successful promotion occurred

where there was an unfinished update to the group. This state persists until the Rollback operation

completes.

With the Local Only status the consistency group contains only primary images. No mirrors in the

group have a secondary image.

Finally, the Empty status simply means that the consistency group has no members.
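
Several of the group statuses described in this lesson can be derived from the states of the member images alone. The function below is a simplified sketch: the name `group_status` and the input encoding are hypothetical, the order in which the checks are applied is an assumption, and the Quasi-Consistent, Scrambled, and Rolling Back statuses are omitted because they depend on information beyond the per-image states.

```python
def group_status(member_states):
    """Derive a consistency group status from its members.

    member_states holds one entry per mirror in the group: the state of its
    secondary image as a string, or None if the mirror has no secondary image.
    """
    if not member_states:
        return "Empty"
    if all(state is None for state in member_states):
        return "Local Only"
    if any(state is None for state in member_states):
        return "Incomplete"          # some, but not all, secondary images missing
    if all(state == "synchronized" for state in member_states):
        return "Synchronized"
    if all(state in ("synchronized", "consistent") for state in member_states):
        return "Consistent"          # at least one member is only consistent
    if "out-of-sync" in member_states:
        return "Out-of-Sync"
    if "synchronizing" in member_states:
        return "Synchronizing"       # and no member is out-of-sync
    return "Undetermined"            # statuses this sketch does not model
```

For example, a group whose members are synchronizing and consistent is reported as Synchronizing, while adding one out-of-sync member changes the result to Out-of-Sync.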

The procedures for managing MirrorView/S and MirrorView/A are identical in many cases and very

similar in most other cases. This lesson covers the management of both products.

Managing MirrorView connections starts by right-clicking the storage system in the dashboard window and choosing MirrorView > Manage Mirror Connections or, as shown on the slide, by clicking Replicas and selecting Manage Mirror Connections.

MirrorView allows up to four remote CLARiiONs to be connected to the one currently being managed.

Once that logical connection is in place (and it cannot be created if the physical connection, usually via

zoning, is not already in place), the storage system may participate in a mirrored relationship. The

status Enabled indicates that connections have been made for both SPA and SPB. Other status values

show that partial connections have been made; SPA may be connected to its remote peer, but SPB not,

for example. The environment should be checked to determine why all connections are not in place.

As with all MirrorView configuration settings there is more than one way to perform MirrorView

operations. From the Dashboard right-click the storage system and select MirrorView > Configure

Mirror Write Intent Log. LUNs that meet the WIL criteria will be displayed.

The slide shows a second method for configuring the WIL. Select Replicas > Configure Mirror Write

Intent Log. From the Allocate Write Intent Log window, select the LUNs.

Note that only LUNs which are 128 MB (0.125 GB) or larger are shown. These LUNs must be configured from a RAID Group, since pool LUNs are not eligible to be used as WIL LUNs. If LUNs larger than 128 MB are used, the additional capacity is not used.
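
The eligibility rule for WIL LUNs amounts to a two-condition filter. This is a minimal sketch: the function `wil_candidates` and the dictionary keys `name`, `size_mb`, and `kind` are hypothetical and do not reflect any Navisphere data model.

```python
MIN_WIL_MB = 128  # minimum LUN size stated in the text


def wil_candidates(luns):
    """Return the names of LUNs eligible for use as Write Intent Log LUNs.

    luns is an iterable of dicts with hypothetical keys:
    'name', 'size_mb', and 'kind' ('raid_group' or 'pool').
    """
    return [
        lun["name"]
        for lun in luns
        if lun["kind"] == "raid_group" and lun["size_mb"] >= MIN_WIL_MB
    ]
```

Since capacity beyond 128 MB is not used, choosing the smallest eligible RAID Group LUNs avoids wasting space.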

Once created, the WIL LUNs can be viewed under the LUNs tab by selecting Private from the filter dropdown menu.

Important Note: In the latest release of FLARE, when creating a MirrorView/S mirror, the Write Intent Log is selected by default.

After MirrorView Connections have been made, the user may create a remote mirror. This procedure

is the same for MirrorView/A as for MirrorView/S, and turns a Pool or RAID Group LUN into a

primary image for a mirror. The creation of a Remote Mirror starts by right-clicking the storage

system from the dashboard or a LUN from the LUNs menu, then choosing MirrorView > Create

Remote Mirror. The slide displays the Create Mirror dialog as selected from the Replicas menu.

This dialog allows selection of the mirror type (synchronous or asynchronous, the default), the primary LUN, and some mirror configuration items. The mirror name is assigned here; for a synchronous mirror, a choice is made as to whether or not the WIL will be used (it is selected by default in the latest release); and other configuration parameters are set up. Note that the minimum number of required images does not in any way affect whether or not I/O is allowed to the primary image.

The dialog which appears allows the user to select which LUN should be used for the primary image,

name the new remote mirror, and choose the required number of secondary images.

Note that if all the prerequisites are met, Asynchronous mirrors are the default mirror type.

Prerequisites include:

CLARiiON must be a CX-series storage system

MirrorView/A must be licensed

There must be space in the Reserved LUN Pool

If the Asynchronous mirror type is selected, the Use Write Intent Log and Quiesce Threshold

checkboxes are not present.

Once the remote mirror is created, it appears under the Remote Mirrors container. The mirror is in the

Attention state because there is no secondary image. Note that the new mirror is called out as a Sync

mirror.

Once the user selections have been made, the Remote Mirror is created and displayed in the GUI.

Note that the mirror is marked as being in the Attention state; there is no secondary image at this point.

There are, as yet, no reserved SnapView Snapshots, SnapView Sessions, or reserved SAN Copy

Sessions, also because no secondary image is present.

Adding a secondary image requires a right-click on the mirror and selection of the Add Secondary Image option or, as shown on the slide, selecting Replicas > Add Secondary. Note that other menu choices allow destruction of the mirror and the retrieval of mirror properties.

The right-click options shown here are identical to those for a synchronous mirror.

Select the secondary storage system that will host the secondary image, and the LUN that will be used. A secondary storage system will only appear in the list if it meets certain requirements:

It is a CX, CX3, or CX4-series storage system

There is available space in the Reserved LUN Pool

The pane in the middle of the dialog has containers which may be expanded to show the LUNs

underneath them. SPA and SPB containers hold the LUNs owned by those SPs; other containers will

display their subset of the available public LUNs and metaLUNs that are the same size as the source

LUN. The dialog displays the owning SP of the primary image at the top of the dialog to allow the

user to easily choose a secondary owned by the same SP.

Two of the choices for the secondary image are similar to those for a synchronous mirror - the recovery

policy, and the synchronization rate. The choice not present with synchronous mirrors is the Update

Type. Here the user may select manual updates, a time interval measured from the start of the last

update, or an interval measured from the end of the last update. If an update cycle runs over its allotted

time, then the next cycle will begin immediately after the current cycle finishes. The default settings are shown in the example.
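
The two interval-based update types differ only in the reference point for the interval, which a short function makes concrete. This is a sketch under assumptions: the name `next_update_start` and its parameters are hypothetical, times are plain numbers of minutes, and the manual update type is not modeled because it has no schedule.

```python
def next_update_start(last_start, last_end, interval, measured_from):
    """Return when the next update cycle begins (all times in minutes).

    measured_from is 'start' (interval counted from the start of the last
    update) or 'end' (interval counted from the end of the last update).
    """
    if measured_from == "start":
        # If the last cycle overran its interval, the next one begins
        # immediately after it finishes.
        return max(last_start + interval, last_end)
    if measured_from == "end":
        return last_end + interval
    raise ValueError("measured_from must be 'start' or 'end'")
```

With a 60-minute interval and an update that started at minute 0 and ended at minute 20, the next cycle starts at minute 60 when measured from the start and at minute 80 when measured from the end; if the update instead ran until minute 75, the start-based schedule begins the next cycle at minute 75.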

The secondary image has been added, and the mirror automatically starts to synchronize. Note that the system has automatically created a Reserved SnapView Snapshot, a Reserved SnapView Session, and a Reserved SAN Copy Session for the mirror, and the names of those objects reflect the fact that they are used for MirrorView/A (FAR) and which mirror they are associated with.

The mirror is shown as being in the transitioning state, and the data state shown as synchronizing.

The Reserved LUN which is assigned to the Primary Image at this point will not be freed up until the Remote Mirror is destroyed.

The initial synchronization has finished, and the secondary status has changed to Synchronized. If the primary image had received updates since the start of synchronization, the secondary state would be Consistent. Note also that the SnapView reserved objects are not destroyed, and that the Reserved SAN Copy Session is marked as Completed.

The slide shows that both Asynchronous and Synchronous mirrors have been created. Highlighting a mirror in the upper window displays the primary and secondary images for that mirror in the lower window. Highlighting a secondary image in the lower window, as shown, makes the image options available (Delete, Synchronize, Fracture, and Promote).

The example shows the properties of both a Synchronous (left) and an Asynchronous (right) secondary image. The General tab gives basic information about the mirror, including the name and mirror type. The Primary Image tab (not shown) gives information about the primary image, including its LUN number and disk type.

The Secondary Image tab (there may be two for synchronous mirrors) shows the configuration of the secondary image(s), as well as the synchronization progress, Recovery Policy, Synchronization Rate, and, for asynchronous mirrors, Update Type. The screenshot at the lower right of the slide shows the operations which may be performed on a Secondary Image. Some of these choices may not be allowed if the Secondary Image is not in an appropriate state.

To create a Consistency Group, right-click the storage system from the Dashboard menu and choose MirrorView > Create Group or, as shown in the example, select Create Consistency Group from the Replicas > Mirrors menu.

The resulting dialog allows the selection of mirror type, a name for the group (required), an optional description, and which mirrors will be group members. The slide shows that a mirror has been selected and moved to the right-side window (Selected Remote Mirror). To move a second mirror, highlight the mirror and click the arrow to move it to the right side.

The advanced parameters configurable here match those for an individual mirror (Recovery Policy, Synchronization Rate, and Update Type), and the options are identical to those seen for an individual mirror.

Select the Replicas > Mirrors option to view both configured Mirrors and Consistency Groups. The type of mirror (synchronous in the slide) can be selected from the dropdown; once it is selected, the group can be expanded to display the group members. By highlighting the group name, users can view options such as Fracture, shown in the slide. Choosing this option will administratively fracture the group. This option is only available on the storage system that maintains the primary images of the mirrors in the group. Remember that users cannot fracture an individual image whose primary image is part of a consistency group.

After the fracture, the mirror state is Consistent and the Condition displays Administratively Fractured.
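The fracture rules for Consistency Groups can be summarized in a short model. This is a sketch only: the `Mirror` class, the `FractureError` exception, and the initial "Normal" condition are assumptions for illustration, not names taken from Navisphere or Unisphere.

```python
from dataclasses import dataclass
from typing import List, Optional


class FractureError(Exception):
    """Raised when a fracture request breaks one of the rules above."""


@dataclass
class Mirror:
    name: str
    group: Optional[str] = None   # Consistency Group name, if any
    condition: str = "Normal"     # assumed starting condition


def fracture_image(mirror: Mirror) -> None:
    """Fracture a single image; refused for Consistency Group members."""
    if mirror.group is not None:
        raise FractureError(f"{mirror.name} belongs to group {mirror.group}")
    mirror.condition = "Administratively Fractured"


def fracture_group(group: str, mirrors: List[Mirror],
                   on_primary_system: bool) -> List[Mirror]:
    """Fracture every member of a group; only allowed on the storage
    system that holds the primary images."""
    if not on_primary_system:
        raise FractureError("group fracture must be issued on the primary system")
    members = [m for m in mirrors if m.group == group]
    for m in members:
        m.condition = "Administratively Fractured"
    return members
```

Fracturing at the group level keeps all member mirrors in the same condition, which is why individual fractures of group members are refused.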

The commands for managing MirrorView/S and MirrorView/A are identical in many cases, and very similar in most other cases. This lesson covers the management of both products.

The structure of the Navisphere Secure CLI commands is shown here. All examples assume that the appropriate security files have been created.

Note that the Secure CLI commands are an exact match of the operations that can be performed from the GUI; in many cases, the command name matches the menu choice.

Before secondary images can be added to mirrors, the physical connections (cables and zoning or connection sets) and the logical connections must be established between the participating CLARiiONs. The enablepath command establishes the logical connection. The disablepath command removes the connection.

The enablepath and disablepath commands allow the user to specify a connection type; if none is specified, the CLARiiON tries FC first, then tries iSCSI.
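The connection-type fallback described above can be sketched as follows. This models the behavior only and does not reproduce the CLI: the function name, the `try_connect` callback, and the `"fc"` / `"iscsi"` labels are hypothetical.

```python
from typing import Callable, Optional

# Order tried when the user does not name a connection type.
DEFAULT_ORDER = ("fc", "iscsi")


def enable_path(try_connect: Callable[[str], bool],
                connection_type: Optional[str] = None) -> Optional[str]:
    """Return the connection type that succeeded, or None.

    try_connect is a caller-supplied function that attempts a logical
    connection of the given type and reports success.
    """
    order = (connection_type,) if connection_type else DEFAULT_ORDER
    for kind in order:
        if try_connect(kind):
            return kind
    return None
```

When a type is named explicitly, only that type is attempted; the FC-then-iSCSI order applies only when no type is given.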

This first group of commands performs the creation, destruction, modification, and retrieval of status for Remote Mirrors.

Note that the creation of a mirror involves only choosing a name and specifying a Primary Image LUN. Most mirror parameters are specified when adding a Secondary Image. Those initially configured parameters may be altered with the change command.

These commands all deal with operations which may be performed on a Secondary Image: its creation, removal, and modification, as well as the synchronization and fracturing of the image. The addimage command allows specifying the synchronization rate and other mirror parameters.

These commands allow promotion of a Secondary Image and display the progress of a synchronization operation. There are also three commands that are specific to MirrorView/S. They deal with the allocation, deallocation, and status retrieval of the Write Intent Log.

The setfeature command allows MirrorView to be added to the I/O stack of a LUN (usually used when dealing with a LUN on a remote storage system). If a secondary image is removed by specifying its image UID, the mirror feature needs to be removed in this manner.

The final CLI item is nowriteintentlog. The write intent log is automatically used on all mirrors on CX4 series systems. CX4 series systems allow all mirrors to have the write intent log allocated, up to the maximum allowable number of mirrors on the system. The performance improvements previously made in release 26 allow write intent log use with negligible performance impact under normal operating conditions. Therefore, the write intent log is configured on all mirrors by default. The only way to disable this functionality is to use this option at mirror creation to create a WIL-less mirror.

The Consistency Group CLI commands follow the GUI menu choices: creation, destruction, and modification of a Consistency Group, as well as the addition and removal of mirrors from the group.

This final set of commands allows the synchronization, fracture, and promotion of a MirrorView Consistency Group. The listgroups command retrieves Consistency Group information.

Snapshots and Clones can be used in conjunction with MirrorView to make a copy of mirrored data available to remote hosts.

MirrorView and SnapView work well together. This combination has been used in several products, among them Replication Manager.

Note that the ability to clone a Secondary Image allows DR testing using a full copy of the data. This obviates the need for a promotion of the Secondary Image, and may simplify testing.

Shown here are replications that are possible.

* - CAUTION Source LUN should not change during the copy

a) Includes any LUN used as a target for either a full or incremental SAN Copy session

b) Only if the source LUN is either in a CX3-series storage system running FLARE 02.24.xxx.5.yyy or higher or in a CX-series storage system running FLARE 02.24.xxx.5.yyy or higher

c) Can use with MirrorView/A or MirrorView/S but not both at once

d) Cannot create a snapshot of a snapshot

e) Cannot clone a snapshot

f) Cannot clone a clone

g) Cannot mirror a clone

h) Cannot mirror a mirror

i) Cannot mirror a snapshot

j) Incremental sessions use snapshots internally

k) Create snapshot or clone and use as the source for SAN Copy session
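Notes d) through i) above state outright restrictions, and they can be captured in a small lookup. This is a sketch only: the operation and source labels are invented for the example, and because the slide's full table is not reproduced in these notes, a pair missing from the lookup should not be read as permitted.

```python
from typing import Optional

# (operation, kind of source object) -> letter of the note that forbids it.
FORBIDDEN = {
    ("snapshot", "snapshot"): "d",
    ("clone", "snapshot"): "e",
    ("clone", "clone"): "f",
    ("mirror", "clone"): "g",
    ("mirror", "mirror"): "h",
    ("mirror", "snapshot"): "i",
}


def forbidden_note(operation: str, source_kind: str) -> Optional[str]:
    """Return the letter of the note forbidding this combination,
    or None if notes d) through i) do not mention it."""
    return FORBIDDEN.get((operation.lower(), source_kind.lower()))
```

For instance, `forbidden_note("clone", "snapshot")` points back to note e), while a combination such as a snapshot of a clone is simply not covered by these six notes.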

This shows a typical distance replication solution. SnapView, in the form of Snapshots or Clones, or a combination, may be used on the primary or secondary image to allow testing or backup. Bear in mind that the use of SnapView point-in-time copies on a MirrorView image may impact performance, especially for MirrorView/S.

Lab Exercise

These are the key points covered in this module. Please take a moment to review them.

These are the key points covered in this training. Please take a moment to review them.

This concludes the training.

Refer to the link/URL on the slide to access the assessment.

MirrorView/A and MirrorView/S - 56

Copyright 2010 EMC Corporation. Do not Copy - All Rights Reserved.

Vous aimerez peut-être aussi

- Oracle Recovery Appliance Handbook: An Insider’S InsightD'EverandOracle Recovery Appliance Handbook: An Insider’S InsightPas encore d'évaluation

- VNXBlockSRPM - M2 - MirrorViewA and MirrorViewSDocument58 pagesVNXBlockSRPM - M2 - MirrorViewA and MirrorViewSKalaivanan VeluPas encore d'évaluation

- The Objectives For This Module Are Shown Here. Please Take A Moment To Read ThemDocument10 pagesThe Objectives For This Module Are Shown Here. Please Take A Moment To Read ThemrasoolvaliskPas encore d'évaluation

- Emc Snapview and Mirrorview: Data Replication and Recovery UsingDocument5 pagesEmc Snapview and Mirrorview: Data Replication and Recovery Usingcicero_cetocPas encore d'évaluation

- SVC Mirror FinalDocument19 pagesSVC Mirror Finalpisko1979Pas encore d'évaluation

- Unity Snapshots SRGDocument42 pagesUnity Snapshots SRGRatata100% (1)

- Snapview FoundationsDocument39 pagesSnapview FoundationsDileep MaddukuriPas encore d'évaluation

- Chapter 6 Database Mirroring ArchitectureDocument5 pagesChapter 6 Database Mirroring ArchitectureraushanlovelyPas encore d'évaluation

- Database Mirroring - Key Concepts and Operating ModesDocument9 pagesDatabase Mirroring - Key Concepts and Operating ModesDevvrataPas encore d'évaluation

- Student Guide - Symm BC ManagementDocument291 pagesStudent Guide - Symm BC ManagementjohnPas encore d'évaluation

- MirroringDocument9 pagesMirroringadchy7Pas encore d'évaluation

- Unity Data Protection SRGDocument78 pagesUnity Data Protection SRGShahbaz AlamPas encore d'évaluation

- Nuts and Bolts of Database MirroringDocument61 pagesNuts and Bolts of Database MirroringBeneberu Misikir100% (1)

- VCP 5 - Objective 5.5 - Backup and Restore Virtual Machines: Identify Snapshot RequirementsDocument7 pagesVCP 5 - Objective 5.5 - Backup and Restore Virtual Machines: Identify Snapshot RequirementscromagnonePas encore d'évaluation

- White Paper Introduction To XtremIO Snapshots H13035Document30 pagesWhite Paper Introduction To XtremIO Snapshots H13035lahsivlahsivPas encore d'évaluation

- Mirror View: Configuration GuidelinesDocument7 pagesMirror View: Configuration GuidelinesJagdish ModiPas encore d'évaluation

- Manage Your Risk With Business Continuity and Disaster RecoveryDocument20 pagesManage Your Risk With Business Continuity and Disaster RecoveryvsvboysPas encore d'évaluation

- What Is SQL Server Database Mirroring?Document4 pagesWhat Is SQL Server Database Mirroring?namansoniPas encore d'évaluation

- SQL Interview Questions - DB MirroringDocument19 pagesSQL Interview Questions - DB Mirroringjagan36Pas encore d'évaluation

- DataCores SANsymphonyV Part 2 Mirrored Virtual DiskDocument5 pagesDataCores SANsymphonyV Part 2 Mirrored Virtual DiskRalfRalfPas encore d'évaluation

- Database Mirroring in SQL Server 2005Document43 pagesDatabase Mirroring in SQL Server 2005vartak.daivatPas encore d'évaluation

- Virtual Asr WhitepaperDocument4 pagesVirtual Asr Whitepapershekhar785424Pas encore d'évaluation

- PowerConnect 55xx ReleaseNotes 40111Document5 pagesPowerConnect 55xx ReleaseNotes 40111DavidMarcusPas encore d'évaluation

- EMC ProtectPoint For VMAX3 Overview - SRGDocument18 pagesEMC ProtectPoint For VMAX3 Overview - SRGHamid Reza AhmadpourPas encore d'évaluation

- Building A Highly Available Datacore SANDocument12 pagesBuilding A Highly Available Datacore SANkkphucPas encore d'évaluation

- TF Win2000 Symcli TFmirror Splitbcv OrclDocument6 pagesTF Win2000 Symcli TFmirror Splitbcv OrclShajahan ShaikPas encore d'évaluation

- Snapmirror and Snapvault in Clustered Data Ontap 8.3 V1.1-Lab GuideDocument57 pagesSnapmirror and Snapvault in Clustered Data Ontap 8.3 V1.1-Lab GuideRajendra BobadePas encore d'évaluation

- Database MirroringDocument74 pagesDatabase MirroringRama UdayaPas encore d'évaluation

- h5588 Emc Mirrorview Cluster Enabler WPDocument35 pagesh5588 Emc Mirrorview Cluster Enabler WPWeimin ChenPas encore d'évaluation

- Disaster Recovery - SrinivasDocument5 pagesDisaster Recovery - SrinivaskkrishhhPas encore d'évaluation

- 6 Open Replicator and FLM FundamentalsDocument57 pages6 Open Replicator and FLM Fundamentals김명규Pas encore d'évaluation

- Disaster Recovery in Clustered Storage ServersDocument7 pagesDisaster Recovery in Clustered Storage ServersAmerican Megatrends IndiaPas encore d'évaluation

- Feature Briefing - NetBackup 7 1 - Auto Image ReplicationDocument9 pagesFeature Briefing - NetBackup 7 1 - Auto Image ReplicationjtolaPas encore d'évaluation

- TAC Webinar Series 1 Session 3Document39 pagesTAC Webinar Series 1 Session 3j2388Pas encore d'évaluation

- ODABR ODA Backup and RestoreDocument21 pagesODABR ODA Backup and RestoreMuhammad Salman KhanPas encore d'évaluation

- Mirrorview and San Copy Configuration and Management: October 2010Document27 pagesMirrorview and San Copy Configuration and Management: October 2010rasoolvaliskPas encore d'évaluation

- Ssl-Vision: The Shared Vision System For The Robocup Small Size LeagueDocument12 pagesSsl-Vision: The Shared Vision System For The Robocup Small Size LeaguePercy Wilianson Lovon RamosPas encore d'évaluation

- Exadata Monitoring and Management Best PracticesDocument5 pagesExadata Monitoring and Management Best PracticesOsmanPas encore d'évaluation

- OratopDocument17 pagesOratopWahab AbdulPas encore d'évaluation

- Visual San Intro Student - Resource - GuideDocument98 pagesVisual San Intro Student - Resource - GuideZuwairi KamarudinPas encore d'évaluation

- Database Snapshot: Read-Only View for ReportingDocument8 pagesDatabase Snapshot: Read-Only View for ReportingRadha RamanPas encore d'évaluation

- RecoverPoint Implementation Student GuideDocument368 pagesRecoverPoint Implementation Student Guidesorin_gheorghe2078% (9)

- Macrium Reflect 7.3.5365 Crack Full ReviewDocument4 pagesMacrium Reflect 7.3.5365 Crack Full Reviewjoax100% (1)

- Clariion Hardware SRG r29Document92 pagesClariion Hardware SRG r29Nikhil ShetPas encore d'évaluation

- Release NotesDocument13 pagesRelease NotesBhadhri PrasadPas encore d'évaluation

- ADG Technical PaperDocument23 pagesADG Technical PaperHarish NaikPas encore d'évaluation

- MirroringDocument20 pagesMirroringJanardhan kengarPas encore d'évaluation

- Technical Details On S - 4HANA ZDO (Zero Downtime Upgrades - Updates) - SAP BlogsDocument15 pagesTechnical Details On S - 4HANA ZDO (Zero Downtime Upgrades - Updates) - SAP BlogsNivas ReddyPas encore d'évaluation

- Data Domain FundamentalDocument39 pagesData Domain Fundamentalharibabu6502Pas encore d'évaluation

- Unity ReplicationDocument56 pagesUnity ReplicationRatataPas encore d'évaluation

- Huawei HyperClone Technical White Paper PDFDocument14 pagesHuawei HyperClone Technical White Paper PDFMenganoFulanoPas encore d'évaluation

- SQL Disaster Recovery OptionsDocument7 pagesSQL Disaster Recovery OptionsVidya SagarPas encore d'évaluation

- USP MICROCODE VERSION 50-05-54-00/00 RELEASED 07/18/06 Newly Supported Features and Functions For Version 50-05-54-00/00Document3 pagesUSP MICROCODE VERSION 50-05-54-00/00 RELEASED 07/18/06 Newly Supported Features and Functions For Version 50-05-54-00/00lgrypvPas encore d'évaluation

- Close: Lascon Storage BackupsDocument10 pagesClose: Lascon Storage BackupsAtthulaiPas encore d'évaluation

- SQL Server Database MirroringDocument74 pagesSQL Server Database MirroringnithinvnPas encore d'évaluation

- Using SnapView For Business ApplicationsDocument14 pagesUsing SnapView For Business ApplicationsyjaganrPas encore d'évaluation

- E-Series: Netapp E-Series Storage Systems Mirroring Feature GuideDocument27 pagesE-Series: Netapp E-Series Storage Systems Mirroring Feature Guidemani deepPas encore d'évaluation

- Osing Clariion Data Replication Method Article RossouwDocument22 pagesOsing Clariion Data Replication Method Article RossouwSobhan JaliparthiPas encore d'évaluation

- Mastering z/OS Management Facility: A Comprehensive Guide to Mainframe Innovation: MainframesD'EverandMastering z/OS Management Facility: A Comprehensive Guide to Mainframe Innovation: MainframesPas encore d'évaluation

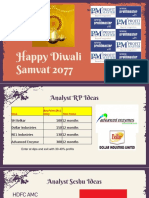

- A Crackling Samvat 2077 in Store: Diwali Muhurat Picks November 2020Document44 pagesA Crackling Samvat 2077 in Store: Diwali Muhurat Picks November 2020rasoolvaliskPas encore d'évaluation

- Passport Appointment ReceiptDocument3 pagesPassport Appointment ReceiptrasoolvaliskPas encore d'évaluation

- EyspsDocument2 pagesEyspsrasoolvaliskPas encore d'évaluation

- A Crackling Samvat 2077 in Store: Diwali Muhurat Picks November 2020Document44 pagesA Crackling Samvat 2077 in Store: Diwali Muhurat Picks November 2020rasoolvaliskPas encore d'évaluation

- The Objectives For This Module Are Shown Here. Please Take A Moment To Review ThemDocument20 pagesThe Objectives For This Module Are Shown Here. Please Take A Moment To Review ThemrasoolvaliskPas encore d'évaluation

- EMC Open Replicator (SOLVED) - Toolbox For IT GroupsDocument3 pagesEMC Open Replicator (SOLVED) - Toolbox For IT GroupsrasoolvaliskPas encore d'évaluation

- HDFC Sec Retail Research Diwali Picks - 2020-202011061535594952846Document32 pagesHDFC Sec Retail Research Diwali Picks - 2020-202011061535594952846rasoolvaliskPas encore d'évaluation

- PM Samvat 2077 RDocument16 pagesPM Samvat 2077 RrasoolvaliskPas encore d'évaluation

- PM Samvat 2077 RDocument16 pagesPM Samvat 2077 RrasoolvaliskPas encore d'évaluation

- BTP ttp1Document3 pagesBTP ttp1rasoolvaliskPas encore d'évaluation

- PM Samvat 2077 RDocument16 pagesPM Samvat 2077 RrasoolvaliskPas encore d'évaluation

- Mirrorview and San Copy Configuration and Management: October 2010Document27 pagesMirrorview and San Copy Configuration and Management: October 2010rasoolvaliskPas encore d'évaluation

- Diwali Technical Picks - 2020Document6 pagesDiwali Technical Picks - 2020rasoolvaliskPas encore d'évaluation

- Symmetrix - BIN FileDocument8 pagesSymmetrix - BIN FilerasoolvaliskPas encore d'évaluation

- BTP ttp2Document4 pagesBTP ttp2rasoolvaliskPas encore d'évaluation

- 5-minute initial troubleshooting on Brocade equipmentDocument5 pages5-minute initial troubleshooting on Brocade equipmentrasoolvaliskPas encore d'évaluation

- M 201 Res 01Document60 pagesM 201 Res 01rasoolvaliskPas encore d'évaluation

- Lab Exercise 4: Create A Synchronous Remote Mirror: PurposeDocument24 pagesLab Exercise 4: Create A Synchronous Remote Mirror: PurposerasoolvaliskPas encore d'évaluation

- Lab Exercise 16: Unisphere Quality of Service Management (NQM)Document5 pagesLab Exercise 16: Unisphere Quality of Service Management (NQM)rasoolvaliskPas encore d'évaluation

- Lab Exercise 4: Create A Synchronous Remote Mirror: PurposeDocument24 pagesLab Exercise 4: Create A Synchronous Remote Mirror: PurposerasoolvaliskPas encore d'évaluation

- Mirrorview and San Copy Configuration and Management: October 2010Document27 pagesMirrorview and San Copy Configuration and Management: October 2010rasoolvaliskPas encore d'évaluation

- The Objectives For This Module Are Shown Here. Please Take A Moment To Read ThemDocument20 pagesThe Objectives For This Module Are Shown Here. Please Take A Moment To Read ThemrasoolvaliskPas encore d'évaluation

- Lab Exercise 13: Configure Unisphere Analyzer: PurposeDocument12 pagesLab Exercise 13: Configure Unisphere Analyzer: PurposerasoolvaliskPas encore d'évaluation

- Lab Exercise 11: Create An Event Monitor Template: PurposeDocument7 pagesLab Exercise 11: Create An Event Monitor Template: PurposerasoolvaliskPas encore d'évaluation

- Lab Exercise 9: Configuring Host Access To Clariion Luns - LinuxDocument9 pagesLab Exercise 9: Configuring Host Access To Clariion Luns - LinuxrasoolvaliskPas encore d'évaluation

- Clariion Host Integration and Management With Snapview Lab GuideDocument2 pagesClariion Host Integration and Management With Snapview Lab GuiderasoolvaliskPas encore d'évaluation

- M 01 Res 01Document20 pagesM 01 Res 01shogun7333Pas encore d'évaluation

- Data Governance For DummiesDocument51 pagesData Governance For DummiesmmelkadyPas encore d'évaluation

- Module 2-85218-2Document9 pagesModule 2-85218-2Erick MeguisoPas encore d'évaluation

- VSICM7 M07 VM ManagementDocument114 pagesVSICM7 M07 VM Managementhacker_05Pas encore d'évaluation

- UNIT 1 - Digital DevicesDocument96 pagesUNIT 1 - Digital DevicesZain BuhariPas encore d'évaluation

- Edexcel Computer Science June 2018 Gcse PDFDocument16 pagesEdexcel Computer Science June 2018 Gcse PDFkirthika senathirajaPas encore d'évaluation

- Pres3 - Oracle Memory StructureDocument36 pagesPres3 - Oracle Memory StructureAbdul WaheedPas encore d'évaluation

- ICT in Data ManagementDocument8 pagesICT in Data ManagementharshilPas encore d'évaluation

- Introduction To Computer CH 2Document53 pagesIntroduction To Computer CH 2Mian AbdullahPas encore d'évaluation

- Configuration Andtuning GPFS For Digital Media EnvironmentsDocument272 pagesConfiguration Andtuning GPFS For Digital Media EnvironmentsascrivnerPas encore d'évaluation

- INTE 30103 Information Processing and Handling in Libraries and Information CentersDocument4 pagesINTE 30103 Information Processing and Handling in Libraries and Information CentersAriel MaglentePas encore d'évaluation

- Chapter 1: Introduction To Computer Science and Media ComputationDocument27 pagesChapter 1: Introduction To Computer Science and Media ComputationSadi SnmzPas encore d'évaluation

- Samsung Hard Disk Drive Installation GuideDocument51 pagesSamsung Hard Disk Drive Installation GuideJamesBond88Pas encore d'évaluation

- Recovery Manager (RMAN) and Oracle Secure Backup (OSB)Document41 pagesRecovery Manager (RMAN) and Oracle Secure Backup (OSB)alexPas encore d'évaluation

- TM213TRE.40-EnG Automation Runtime V4100Document52 pagesTM213TRE.40-EnG Automation Runtime V4100Vladan Milojević100% (3)

- Masm 2Document16 pagesMasm 2Supraja RamanPas encore d'évaluation

- HITACHI What Is The Purpose of This 4Document4 pagesHITACHI What Is The Purpose of This 4Sergio OrtegaPas encore d'évaluation

- Chapter 8Document34 pagesChapter 8tharaaPas encore d'évaluation

- SAT Group AssignmentDocument76 pagesSAT Group AssignmentSyed Ghazi Abbas Rizvi0% (1)

- CSE115 Programming Concepts OverviewDocument21 pagesCSE115 Programming Concepts OverviewhjkjbfPas encore d'évaluation

- ELTR145 Sec2Document105 pagesELTR145 Sec2Midori HiroshiPas encore d'évaluation

- G41M-VS3 R2.0Document2 pagesG41M-VS3 R2.0EspinozaCarlosPas encore d'évaluation

- Computer Components and FunctionsDocument8 pagesComputer Components and FunctionsAjay RavuriPas encore d'évaluation

- Aqa Gcse Computer Science (8520) : Knowledge OrganisersDocument45 pagesAqa Gcse Computer Science (8520) : Knowledge OrganisersShakila Shaki100% (1)

- Chapter 9Document64 pagesChapter 9Sabin MaharjanPas encore d'évaluation

- Chapter 9memoryDocument8 pagesChapter 9memorymaqyla naquelPas encore d'évaluation

- Unit 6 (22516)Document40 pagesUnit 6 (22516)Mufaddal MerchantPas encore d'évaluation

- Lec # 1 Introduction To Information Technology: Fundamental MeansDocument9 pagesLec # 1 Introduction To Information Technology: Fundamental MeansAsif AliPas encore d'évaluation

- Solid State Storage Devices ExplainedDocument5 pagesSolid State Storage Devices ExplainedSam ManPas encore d'évaluation

- Heilman C. - Pocket Forth Manual.v0.6.5Document21 pagesHeilman C. - Pocket Forth Manual.v0.6.5ilyenamarrepointPas encore d'évaluation

- 3000EZPlus Manual SismógrafoDocument55 pages3000EZPlus Manual SismógrafoJordan_PaciniPas encore d'évaluation