IEEE TRANSACTIONS ON HUMAN-MACHINE SYSTEMS, VOL. 45, NO. 1, FEBRUARY 2015

Improving Web Navigation Usability by Comparing

Actual and Anticipated Usage

Ruili Geng, Member, IEEE, and Jeff Tian, Member, IEEE

Abstract: We present a new method to identify navigation-related Web usability problems based on comparing actual and

anticipated usage patterns. The actual usage patterns can be extracted from Web server logs routinely recorded for operational

websites by first processing the log data to identify users, user sessions, and user task-oriented transactions, and then applying a

usage mining algorithm to discover patterns among actual usage

paths. The anticipated usage, including information about both

the path and time required for user-oriented tasks, is captured by

our ideal user interactive path models constructed by cognitive experts based on their cognition of user behavior. The comparison

is performed via the mechanism of test oracle for checking results

and identifying user navigation difficulties. The deviation data produced from this comparison can help us discover usability issues

and suggest corrective actions to improve usability. A software

tool was developed to automate a significant part of the activities

involved. With an experiment on a small service-oriented website,

we identified usability problems, which were cross-validated by domain experts, and quantified usability improvement by the higher

task success rate and lower time and effort for given tasks after

suggested corrections were implemented. This case study provides

an initial validation of the applicability and effectiveness of our

method.

Index Terms: Cognitive user model, sessionization, software

tool, test oracle, usability, usage pattern, Web server log.

I. INTRODUCTION

As the World Wide Web becomes prevalent today, building and ensuring easy-to-use Web systems is becoming

a core competency for business survival [26], [41]. Usability

is defined as the effectiveness, efficiency, and satisfaction with

which specific users can complete specific tasks in a particular environment [5]. Three basic Web design principles, i.e.,

structural firmness, functional convenience, and presentational

delight, were identified to help improve users' online experience

[42]. Structural firmness relates primarily to the characteristics

that influence the website security and performance. Functional

convenience refers to the availability of convenient characteristics, such as a site's ease of use and ease of navigation, that

Manuscript received February 7, 2014; revised August 28, 2014; accepted

October 3, 2014. Date of publication October 29, 2014; date of current version

January 13, 2015. This work was supported in part by the National Science

Foundation (NSF) Grant #1126747, Raytheon, and NSF Net-Centric I/UCRC.

This paper was recommended by Associate Editor F. Ritter.

R. Geng is with the Department of Computer Science and Engineering, Southern

Methodist University, Dallas, TX 75275 USA (e-mail: rgeng@smu.edu).

J. Tian is with the Department of Computer Science and Engineering, Southern Methodist University, Dallas, TX 75275 USA, and also with the School

of Computer Science, Northwestern Polytechnical University, Xi'an, Shaanxi

710072, China (e-mail: tian@smu.edu).

Color versions of one or more of the figures in this paper are available online

at http://ieeexplore.ieee.org.

Digital Object Identifier 10.1109/THMS.2014.2363125

help users' interaction with the interface. Presentational delight

refers to the website characteristics that stimulate users' senses.

Usability engineering provides methods for measuring usability and for addressing usability issues. Heuristic evaluation

by experts and user-centered testing are typically used to identify usability issues and to ensure satisfactory usability [26].

However, significant challenges exist, including 1) accuracy of

problem identification due to false alarms common in expert

evaluation [22], 2) unrealistic evaluation of usability due to differences between the testing environment and the actual usage

environment [7], and 3) increased cost due to the prolonged

evolution and maintenance cycles typical for many Web applications [24]. On the other hand, log data routinely kept at

Web servers represent actual usage. Such data have been used

for usage-based testing and quality assurance [20], [39], and

also for understanding user behavior and guiding user interface

design [36], [40].

We propose to extract actual user behavior from Web server

logs, capture anticipated user behavior with the help of cognitive

user models [28], and perform a comparison between the two.

This deviation analysis would help us identify some navigation

related usability problems. Correcting these problems would

lead to better functional convenience as characterized by both

better effectiveness (higher task completion rate) and efficiency

(less time for given tasks). This new method would complement

traditional usability practices and overcome some of the existing

challenges.

The rest of this paper is organized as follows: Section II introduces the related work. Section III presents the basic ideas of our

method and its architecture. Section IV describes how to extract

actual usage patterns from Web server logs. Section V describes

the construction of our ideal user interactive path (IUIP) models

to capture anticipated Web usage. Section VI presents the comparison between actual usage patterns and corresponding IUIP

models. Section VII describes a case study applying our method

to a small service-oriented website. Section VIII validates our

method by examining its applicability and effectiveness. Section IX discusses the limitations of our method. Conclusions

and perspectives are discussed in Section X.

II. RELATED WORK

A. Logs, Web Usage and Usability

Two types of logs, i.e., server-side logs and client-side logs,

are commonly used for Web usage and usability analysis.

Server-side logs can be automatically generated by Web servers,

with each entry corresponding to a user request. By analyzing

these logs, Web workload was characterized and used to suggest

2168-2291 © 2014 IEEE. Personal use is permitted, but republication/redistribution requires IEEE permission.

See http://www.ieee.org/publications_standards/publications/rights/index.html for more information.

GENG AND TIAN: IMPROVING WEB NAVIGATION USABILITY BY COMPARING ACTUAL AND ANTICIPATED USAGE

performance enhancements for Internet Web servers [4]. Because of the vastly uneven Web traffic, massive user population,

and diverse usage environment, coverage-based testing is insufficient to ensure the quality of Web applications [20]. Therefore,

server-side logs have been used to construct Web usage models for usage-based Web testing [20], [39], or to automatically

generate test cases accordingly to improve test efficiency [34].

Server logs have also been used by organizations to learn

about the usability of their products. For example, search queries

can be extracted from server logs to discover user information

needs for usability task analysis [31]. There are many advantages to using server logs for usability studies. Logs can provide

insight into real users performing actual tasks in natural working conditions versus in an artificial setting of a lab. Logs also

represent the activities of many users over a long period of time

versus the small sample of users in a short time span in typical

lab testing [37]. Data preparation techniques and algorithms can

be used to process the raw Web server logs, and then mining can

be performed to discover users' visitation patterns for further

usability analysis [14]. For example, organizations can mine

server-side logs to predict users' behavior and context to satisfy

users' needs [40]. Users' revisitation patterns can be discovered

by mining server logs to develop guidelines for browser history mechanisms that can be used to reduce users' cognitive and

physical effort [36].

Client-side logs can capture accurate comprehensive usage

data for usability analysis, because they allow low-level user

interaction events such as keystrokes and mouse movements

to be recorded [18], [25]. For example, using these client-side

data, the evaluator can accurately measure time spent on particular tasks or pages as well as study the use of back button

and user clickstreams [19]. Such data are often used with task-based approaches and models for usability analysis by examining discrepancies between the designer's anticipation and a

user's actual behavior [10], [27]. However, the evaluator must

program the UI, modify Web pages, or use an instrumented

browser with plug-in tools or a special proxy server to collect

such data. Because of privacy concerns, users generally do not

want any instrument installed in their computers. Therefore, logging actual usage on the client side can best be used in lab-based

experiments with explicit consent of the participants.

B. Cognitive User Models

In recent years, there has been a growing need to incorporate insights from cognitive science about the mechanisms, strengths,

and limits of human perception and cognition to understand the

human factors involved in user interface design [28]. For example, the various constraints on cognition (e.g., system complexity) and the mechanisms and patterns of strategy selection

can help human factor engineers develop solutions and apply

technologies that are better suited to human abilities [30], [40].

Commonly used cognitive models include GOMS, EPIC, and

ACT-R [2], [21], [28]. The GOMS model consists of Goals, Operators, Methods, and Selection rules. As the high-level architecture, GOMS describes behavior and defines interactions as

a static sequence of human actions. As the low-level cognitive

architecture, EPIC (Executive-Process/Interactive Control) and

ACT-R (Adaptive Control of Thought-Rational) can be taken as

the specific implementation of the high-level architecture. They

provide detailed information about how to simulate human processing and cognition [2], [21]. An important feature of these

low-level cognitive architectures is that they are all implemented

as computer programming systems so that cognitive models may

be specified, executed, and their outputs (e.g., error rates and response latencies) compared with human performance data [17].

ACT-R provides detailed and sophisticated process models

of human performance in interactive tasks with complex interfaces. It allows researchers to specify the cognitive factors (e.g.,

domain knowledge, problem-solving strategies) by developing

cognitive models of interactive behavior. It consists of multiple

modules that acquire information from the environment, process

information and execute actions in the furtherance of particular goals. Cognition proceeds via a pattern matching process

that attempts to find productions that match the current contents. ACT-R is often used to understand the decisions that Web

users make in following various links to satisfy their information goals [2]. However, ACT-R has its own limitations due to

the complexity of its model development [9] and the low-level

rule-based programming language it relies on [12].

Software engineering techniques have also been applied to

develop intelligent agents and cognitive models [16], [29]. On

the one hand, higher level programming languages simplify the

encoding of behavior by creating representations that map more

directly to a theory of how behavior arises in humans. On the

other hand, as these designs are adopted, adapted, and reused,

they may become design patterns.

III. ARCHITECTURE OF A NEW METHOD

Our research is guided by three research questions:

1) RQ1: What usability problems are addressed?

2) RQ2: How to identify these problems?

3) RQ3: How to validate our approach?

As described in the previous section, Web server logs have

been used for usage-based Web testing and quality assurance.

They have also been used for understanding user behavior and

guiding user interface design. These works are extended in this

study to focus on the functional convenience aspect of usability.

In particular, we focus on identifying navigation related problems as characterized by an inability to complete certain tasks

or excessive time to complete them (RQ1).

Usability engineers often use server logs to analyze users'

behavior and understand how users perform specific tasks to improve their experience. On the other hand, many critics pointed

out that server logs contain no information about the users' goals

in visiting websites [37]. Usability engineers cannot generalize

from server log data as they can from data collected by performing controlled experiments. However, these weaknesses can be

alleviated by applying the cognitive user models we surveyed in

the previous section. Such cognitive models can be constructed

with our domain knowledge and empirical data to capture anticipated user behavior. They may also provide clues to users'

intentions when they interact with Web systems.

Fig. 1. Architecture of a new method for identifying usability problems.

We propose a new method to identify navigation related usability problems by comparing Web usage patterns extracted

from server logs against anticipated usage represented in some

cognitive user models (RQ2). Fig. 1 shows the architecture of

our method. It includes three major modules: Usage Pattern Extraction, IUIP Modeling, and Usability Problem Identification.

First, we extract actual navigation paths from server logs and

discover patterns for some typical events. In parallel, we construct IUIP models for the same events. IUIP models are based

on the cognition of user behavior and can represent anticipated

paths for specific user-oriented tasks. The result checking employs the mechanism of test oracle. An oracle is generally used

to determine whether a test has passed or failed [6]. Here, we use

IUIP models as the oracle to identify the usability issues related

to the users' actual navigation paths by analyzing the deviations

between the two. This method and its three major modules will

be described in detail in Sections IV-VI.

We used the Furniture Giveaway (FG) 2009 website as the

case study to illustrate our method and its application. Additionally, we also used the server log data of the FG 2010 website,

the next version of FG 2009, to help us validate our method.

All the usability problems in FG 2009 identified by our method

were fixed in FG 2010. The functional convenience aspect of

usability for this website is quantified by its task completion

rate and time to complete given tasks. The ability to implement

recommended changes and to track quantifiable usability improvement over iterations is an important reason for us to use

this website to evaluate the applicability and effectiveness of

our method (RQ3).

The FG website was constructed by a charity organization

to provide free furniture to new international students in Dallas. Similar to e-commerce websites, it provided registration,

selection, and removal of goods, submission of orders, and other

services. It was partially designed and developed with the well-known templated page pattern [13]. All outgoing Web pages go

through a one-page template on their way to the client. Four

templated pages were designed for the furniture catalog, furniture details, account information and selections. The FG website

was implemented by using PHP, MySQL, AJAX and other dynamic Web development techniques. It included 15 PHP scripts

to process users' requests, 5 furniture catalog pages, about 200

furniture detail pages, and additional pages related to user information, selection rules, registration and so on.

IV. USAGE PATTERN EXTRACTION

Web server logs are our data source. Each entry in a log

contains the IP address of the originating host, the timestamp, the

requested Web page, the referrer, the user agent and other data.

Typically, the raw data need to be preprocessed and converted

into user sessions and transactions to extract usage patterns.

A. Data Preparation and Preprocessing

The data preparation and preprocessing include the following

domain-dependent tasks.

1) Data cleaning: This task is usually site-specific and

involves removing extraneous references to style files,

graphics, or sound files that may not be important for the

purpose of our analysis.

2) User identification: The remaining entries are grouped

by individual users. Because no user authentication and

cookie information is available in most server logs, we

used the combination of IP, user agent, and referrer fields

to identify unique users [14].

Fig. 2. Example of a trail tree (right) and associated transaction paths (left).

3) User session identification: The activity record of each

user is segmented into sessions, with each representing

a single visit to a site. Without additional authentication

information from users and without the mechanisms such

as embedded session IDs, one must rely on heuristics

for session identification [3], [23]. For example, we set

an elapsed time of 15 min between two successive page

accesses as a threshold to partition a user activity record

into different sessions.

4) Path completion: Client or proxy side caching can often

result in missing access references to some pages that

have been cached. These missing references can often be

heuristically inferred from the knowledge of site topology

and referrer information, along with temporal information

from server logs [14].
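
The cleaning, user identification, and session identification steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' tool: log entries are assumed to be already parsed into `(ip, user_agent, timestamp, page)` tuples, users are keyed on IP and user agent only (the referrer heuristic and path completion are omitted), and the list of static-file suffixes and the sample entries are made up.

```python
from collections import defaultdict
from datetime import datetime, timedelta

SESSION_GAP = timedelta(minutes=15)   # threshold stated in the text
# Assumed list of extraneous resources; a real site would tune this.
STATIC_SUFFIXES = (".css", ".js", ".png", ".jpg", ".gif", ".ico", ".wav")

def clean(entries):
    """Data cleaning: drop requests for style, graphics, or sound files."""
    return [e for e in entries
            if not e[3].lower().split("?")[0].endswith(STATIC_SUFFIXES)]

def sessionize(entries):
    """Group entries by (ip, user_agent) and split on gaps above 15 min."""
    by_user = defaultdict(list)
    for ip, agent, ts, page in entries:
        by_user[(ip, agent)].append((ts, page))
    sessions = []
    for user, visits in by_user.items():
        visits.sort()                      # order each user's visits by time
        current = [visits[0]]
        for prev, cur in zip(visits, visits[1:]):
            if cur[0] - prev[0] > SESSION_GAP:
                sessions.append((user, current))   # close the old session
                current = []
            current.append(cur)
        sessions.append((user, current))
    return sessions

# Made-up sample entries for illustration only.
t0 = datetime(2009, 8, 1, 10, 0)
log = [
    ("10.0.0.1", "Firefox", t0, "/index.php"),
    ("10.0.0.1", "Firefox", t0 + timedelta(minutes=1), "/style.css"),
    ("10.0.0.1", "Firefox", t0 + timedelta(minutes=2), "/register.php"),
    ("10.0.0.1", "Firefox", t0 + timedelta(minutes=40), "/index.php"),
    ("10.0.0.2", "Chrome", t0, "/index.php"),
]
sessions = sessionize(clean(log))
```

In this sample, the 38-minute gap splits the first user's activity into two sessions, and the style-sheet request is discarded before grouping.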

These tasks are time consuming and computationally intensive, but essential to the successful discovery of usage patterns.

Therefore, we developed a tool to automate all these tasks except

part of path completion. For path completion, the designers or

developers first need to manually discover the rules of missing

references based on site structure, referrer, and other heuristic

information. Once the repeated patterns are identified, this work

can be automatically carried out. Our tool can work with server

logs of different Web applications by modifying the related parameters in the configuration file. The processed log data are

stored into a database for further use.

B. Transaction Identification

E-commerce data typically include various task-oriented

events such as order, shipping, and shopping cart changes. In

most cases, there is a need to divide individual data into corresponding groups called Web transactions. A transaction usually

has a well-defined beginning and end associated with a specific

task. For example, a transaction may start when a user places

something in the shopping cart and end when the user has completed the purchase on the confirmation screen [41]. A transaction differs from a user session in that the size of a transaction

can range from a single page to all the visited pages in a user

session.

In this research, we first construct event models, also called

task models, for typical Web tasks. Event models can be built

by Web designers or domain experts based on the use cases in

the requirements for the Web application. Based on the event

models, we identify the click operations (pages) from the clickstream of a user session as a transaction. For the FG website,

we constructed four event models for the following four typical

tasks.

1) Task 1: Register as a new user.

2) Task 2: Select the first piece of furniture.

3) Task 3: Select the next piece of furniture.

4) Task 4: Change selection.

The example below shows the event model constructed for

Task 2 (First Selection):

[post register.php] .*? [post process.php]

Here, [ ] indicates beginning and end pages; .*? indicates

a minimal number of pages in a sequence between the two pages.

For this task, we extracted a sequence of pages which started

with the page post register.php and ended with the

first appearance of the page post process.php for each

session. Such a sequence of pages forms a transaction for a user.

We call the sequence a path.
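
As a rough sketch of how such an event model could be applied, the function below returns the pages from the first occurrence of the start page to the first later occurrence of the end page, which mirrors the minimal match `.*?`. The function name and the sample session are hypothetical; only the two page labels come from the text.

```python
def extract_transaction(session_pages,
                        start="post register.php",
                        end="post process.php"):
    """Return the path from the first `start` page to the first `end`
    page that follows it, or None if the session has no such path."""
    try:
        i = session_pages.index(start)
        j = session_pages.index(end, i + 1)   # first end page after start
    except ValueError:
        return None
    return session_pages[i:j + 1]

# Hypothetical clickstream of one user session.
session = ["index.php", "post register.php", "cat=0", "cat=1",
           "post process.php", "cat=2", "post process.php"]
path = extract_transaction(session)
```

Only the first `post process.php` closes the transaction; later selections in the same session would belong to other tasks.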

C. Trail Tree Construction

The transactions identified from each user session form a

collection of paths. Since multiple visitors may access the same

pages in the same order, we use the trie data structure to merge

the paths along common prefixes. A trie, or a prefix tree, is an

ordered tree used to store an associative array where the keys

are usually strings [35]. All the descendants of a node have a

common prefix of the string associated with that node. The root

is associated with the empty string.

We adapted the trie algorithm to construct a tree structure that

also captures user visit frequencies, which is called a trail tree

in our work. In a trail tree, a complete path from the root to a leaf

node is called a trail. Each node corresponds to the occurrence of

a specific page in a transaction. It is annotated with the number

of users having reached the node across the same trail prefix. The

leaf nodes of the trail tree are also annotated with the trail names.

An example trail tree is shown in Fig. 2. The transaction paths

extracted from the Web server log are shown in the table to its

left, together with path occurrence frequencies. Paths 1, 4, and

5 have the common first node a; therefore, they were merged

together. For the second node of this subtree, Paths 1 and 4

both accessed Page b; therefore, the two paths were combined

at Node b. Finally, Paths 1 and 4 were merged into a single

trail, Trail 1, although Path 1 terminates at Node e. By the same

method, the other paths can be integrated into the trail tree. The

number at each edge indicates the number of users reaching the

next node across the same trail prefix.
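
The merging described above can be sketched as a trie whose nodes carry user counts. The class and function names are hypothetical, and the three sample paths and their frequencies are invented to mimic the situation in the text (they are not the data of Fig. 2).

```python
class TrailNode:
    """One page occurrence, annotated with the users who reached it."""
    def __init__(self, page=None):
        self.page = page
        self.count = 0
        self.children = {}

def build_trail_tree(paths):
    """paths: iterable of (list_of_pages, frequency) pairs."""
    root = TrailNode()
    for pages, freq in paths:
        node = root
        for page in pages:
            # Reuse the child on a shared prefix, otherwise create it.
            node = node.children.setdefault(page, TrailNode(page))
            node.count += freq
    return root

def trails(node, prefix=()):
    """Yield every root-to-leaf trail as a tuple of pages."""
    for child in node.children.values():
        path = prefix + (child.page,)
        if child.children:
            yield from trails(child, path)
        else:
            yield path

# Invented sample: the first path ends at e and is a prefix of the second.
paths = [(["a", "b", "e"], 2), (["a", "b", "e", "f"], 1), (["a", "c"], 3)]
root = build_trail_tree(paths)
all_trails = list(trails(root))
```

Because the first path is a prefix of the second, both end up on the same trail, while the third path branches off after the shared node a.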

Based on the aggregated trail tree, further mining can be performed for some interesting pattern discovery. Typically, good

mining results require a close interaction of the human experts

to specify the characteristics that make navigation patterns interesting. In our method, we focus on the paths which are used by a

sufficient number of users to finish a specific task. The paths can

be initially prioritized by their usage frequencies and selected by

using a threshold specified by the experts. Application-domain

knowledge and contextual information, such as criticality of

specific tasks, user privileges, etc., can also be used to identify

interesting patterns. For the FG 2009 website, we extracted

30 trails each for Tasks 1, 2, and 3, and 5 trails for Task 4.
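
The frequency-based prioritization and threshold selection could look like the short sketch below. The function name, the trail labels, the user counts, and the threshold value are all illustrative assumptions; the text leaves the threshold to the experts.

```python
def select_trails(trail_users, min_users):
    """Rank trails by number of users and keep those at or above
    the expert-specified threshold."""
    ranked = sorted(trail_users.items(), key=lambda kv: kv[1], reverse=True)
    return [trail for trail, users in ranked if users >= min_users]

# Invented counts: trail name -> users who followed it.
selected = select_trails({"Trail 1": 5, "Trail 2": 1, "Trail 3": 8},
                         min_users=2)
```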

V. IDEAL USER INTERACTIVE PATH MODEL CONSTRUCTION

Our IUIP models are based on the cognitive models surveyed

in Section II, particularly the ACT-R model. Due to the complexity of ACT-R model development [9] and the low-level rule-based programming language it relies on [12], we constructed

our own cognitive architecture and supporting tool based on the

ideas from ACT-R.

In general, the user behavior patterns can be traced with a

sequence of states and transitions [30], [32]. Our IUIP consists

of a number of states and transitions. For a particular goal, a

sequence of related operation rules can be specified for a series

of transitions. Our IUIP model specifies both the path and the

benchmark interactive time (no more than a maximum time)

for some specific states (pages). The benchmark time can first

be specified based on general rules for common types of Web

pages. For example, human factors guidelines specify the upper

bound for the response time to mitigate the risk that users will

lose interest in a website [22].
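
One possible in-memory representation of such a model is sketched below: each state carries a benchmark time and the set of anticipated next states. This is an assumption made for illustration, not the XML schema of the authors' tool. The state labels and page names follow the "First Selection" example discussed in Section VI; the benchmark values in seconds are invented.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class State:
    label: str                     # e.g. "S4"
    page: str                      # page or page class the state stands for
    benchmark_s: Optional[float]   # maximum expected time; None = no user interaction
    next_labels: set = field(default_factory=set)   # anticipated transitions

@dataclass
class IUIP:
    task: str
    states: dict = field(default_factory=dict)

    def add(self, state):
        self.states[state.label] = state

    def allows(self, src, dst):
        """True if the model anticipates a transition from src to dst."""
        return dst in self.states[src].next_labels

# Fragment of a model; benchmark times are made-up placeholders.
model = IUIP("First Selection")
model.add(State("S4", "selection rules", 60.0, {"S5"}))
model.add(State("S5", "category page", 45.0, {"S5", "S6"}))
model.add(State("S6", "detail page", 45.0, {"S5", "S6", "S7"}))
model.add(State("S7", "process", None))
```

Keeping the anticipated transitions and the benchmark time on the same state object lets one structure serve both the path check and the time check.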

Humans usually try to complete their tasks in the most efficient manner by attempting to maximize their returns while

minimizing the cost [28]. Typically, experts and novices will

have different task performance [1]. Novices need to learn task-specific knowledge while performing the task, but experts can

complete the task in the most efficient manner [28]. Based on

this cognitive mechanism, IUIP models need to be constructed

separately for novices and experts by cognitive experts, who draw on

their domain expertise and their knowledge of different users' interactive behavior. For specific situations, we can

adapt the durations by performing iterative tests with different

users [38].

Diagrammatic notation methods and tools are often used to

support interaction modeling and task performance evaluation

[11], [15], [33]. To facilitate IUIP model construction and reuse,

we used C++ and XML to develop our IUIP modeling tool based

on the open-source visual diagram software DIA. DIA allows

users to draw customized diagrams, such as UML, data flow,

and other diagrams. Existing shapes and lines in DIA form part

of the graphic notations in our IUIP models. New ones can

be easily added by writing simple XML files. The operations,

operation rules, and computation rules can be embedded into

the graphic notations with XML schema we defined to form

our IUIP symbols. Currently, about 20 IUIP symbols have been

created to represent typical Web interactions. IUIP symbols used

in subsequent examples are explained at the bottom of Fig. 3.

Cognitive experts can use our IUIP modeling tool to develop

various IUIP models for different Web applications.

Similar to ACT-R and other low-level cognitive architectures,

our IUIP is a computational model. It can be extended to support

related task executions. For example, it can be extended to calculate the deviations between itself and users' actual operations,

as described in the next section.

Fig. 3 shows the IUIP model constructed by the cognitive

experts for the event "First Selection" for the novice users of

the FG website, together with the explanation for the symbols.

Each state of the IUIP model is labeled, and the benchmark time

is shown on the top. "-" means there is no interaction with users.

Those pages are only used to post data to the server.

In summary, IUIP models established by cognitive experts

and designers specify the anticipated user behavior. Both the

paths and time for user-oriented tasks anticipated by Web designers from the perspective of human behavior cognition are

captured in these models.

VI. USABILITY PROBLEM IDENTIFICATION

The actual users' navigation trails we extracted from the aggregated trail tree are compared against corresponding IUIP

models automatically. This comparison will yield a set of deviations between the two. We can identify some common problems

of actual users' interaction with the Web application by focusing

on deviations that occur frequently. Combined with expertise in

product internals and contextual information, our results can also

help identify the root causes of some usability problems existing

in the Web design.

Based on logical choices made and time spent by users at

each page, the calculation of deviations between actual users'

usage patterns and IUIP can be divided into two parts:

1) Logical deviation calculation:

a) When the path choice anticipated by the IUIP model

is available but not selected, a single deviation is

counted.

b) Sum up all the above deviations over all the selected

user transactions for each page.

2) Temporal deviation calculation:

a) When a user spends more time at a specific page than

the benchmark specified for the corresponding state

in the IUIP model, a single deviation is counted.

b) Sum up all the above deviations over all the selected

user transactions for each page.

Fig. 4 gives an example of the logical deviation calculation.

The IUIP model for the task "First Selection" is shown on the

top. The corresponding user Trail 7, a part of a trail tree extracted from log data, is presented under it. The node in the

tree is annotated with the number of users having reached the

node across the same trail prefix. The successive pages related

to furniture categories are grouped into a dashed box. The pages

with deviations and the unanticipated followup pages below

them are marked with solid rectangular boxes. Those unanticipated followup pages will not be used themselves for deviation

calculations to avoid double counting.

Fig. 3. IUIP model for the event "First Selection" (top) and explanation of the symbols used (bottom).

Fig. 4. Logical deviation calculation example.

In user Trail 7, after following the first four states S1-S4

in the IUIP model, the expected state S5 (category page) was

not reached from S4 (selection rules). Instead, S4 was repeated;

therefore, a deviation should be recorded. Because the repeated

S4 is the unanticipated followup page, it will be omitted in

subsequent deviation calculation. The process proceeds to find

the page matching S5. The following pages cat=1 and

cat=5 are both category pages, matching S5 in this IUIP

model. Furthermore, page detail id=74 matches S6 according to the operation rule. We anticipated the user to reach S7

(process) or continue to stay in S5 or S6 to look around among

furniture categories or detail pages, but the user jumped back

to S4 (page cat=0, selection rules) instead. Therefore, one

logical deviation for S6 of the IUIP model was identified.
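
The walk-through above can be reproduced with a small sketch. It assumes the trail has already been mapped from pages to IUIP state labels, and that S1 to S4 form a simple chain (Fig. 3 is not reproduced here, so the `allowed` table is an assumption consistent with the text). A deviation is charged to the state where the anticipated choice was not taken, and the unanticipated follow-up pages are skipped to avoid double counting.

```python
from collections import Counter

def logical_deviations(trail, allowed, users=1):
    """Count logical deviations per IUIP state for one trail.

    trail   -- visited states in order, e.g. ["S1", "S2", ...]
    allowed -- state -> set of anticipated next states
    users   -- number of users who followed this trail
    """
    counts = Counter()
    current, skipping = trail[0], False
    for nxt in trail[1:]:
        if nxt in allowed[current]:
            current, skipping = nxt, False   # anticipated move: advance
        elif not skipping:
            counts[current] += users         # first unanticipated move
            skipping = True                  # ignore further follow-up pages
    return counts

# Assumed transitions, consistent with the description of Trail 7.
allowed = {"S1": {"S2"}, "S2": {"S3"}, "S3": {"S4"}, "S4": {"S5"},
           "S5": {"S5", "S6"}, "S6": {"S5", "S6", "S7"}, "S7": set()}
trail7 = ["S1", "S2", "S3", "S4", "S4", "S5", "S5", "S6", "S4"]
devs = logical_deviations(trail7, allowed)
```

For this trail the sketch records one deviation at S4 (the repeated selection rules page) and one at S6 (the jump back to S4), matching the two deviations described in the text.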

Fig. 5 gives an example of the temporal deviation calculation.

Actual times taken by users are listed in the time tables graphically

linked to the related pages. In user Trail 7, one user spent

more time on index.php than the benchmark time for the

corresponding state S2. So, one temporal deviation was counted.

Furthermore, two users took more time on page selection rules

than the benchmark time specified for the corresponding state

S4. Therefore, two temporal deviations were counted. Similarly,

we obtained two temporal deviations for the category pages: one

for the page cat=1 and one for the page cat=5.
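
The temporal rule can be sketched in the same style: one deviation is counted for every page visit that exceeds the benchmark of the corresponding state. The benchmark values and visit times below are invented; they are chosen only so that the counts mirror the Trail 7 example (one for S2, two for S4, two for the category pages matching S5).

```python
from collections import Counter

def temporal_deviations(visits, benchmark):
    """Count visits whose time exceeds the state's benchmark.

    visits    -- iterable of (state, seconds_spent), one per user visit
    benchmark -- state -> maximum expected seconds (None = no interaction)
    """
    counts = Counter()
    for state, seconds in visits:
        limit = benchmark.get(state)
        if limit is not None and seconds > limit:
            counts[state] += 1
    return counts

# Made-up benchmarks and observed times, in seconds.
benchmark = {"S2": 30, "S4": 60, "S5": 45, "S7": None}
visits = [("S2", 50), ("S2", 10),
          ("S4", 90), ("S4", 120), ("S4", 20),
          ("S5", 70), ("S5", 80), ("S7", 2)]
tdevs = temporal_deviations(visits, benchmark)
```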

We can perform the same comparison and calculation for all

the trails we extracted for all the corresponding tasks. Results

were obtained for the FG 2009 website in this way. Tables I and

II show the specific states (pages) in the IUIP models with large

(≥5) cumulative logical and temporal deviations, respectively.

The results single out these Web pages and their design for further analysis (to be described in the next section), because such

large deviations may be indications of some usability problems.

Fig. 5. Temporal deviation calculation example.

TABLE I

STATES (PAGES) WITH LARGE LOGICAL DEVIATIONS

Task: 1, 2

States (pages)        Logical deviation
index.php             16
Selection Rules       10
Category Pages        7
Register.php(post)    6
Category Pages        18
My Selection          10
Show.php?cat=2        9
Show.php?cat=1        7

TABLE II

STATES (PAGES) WITH LARGE TEMPORAL DEVIATIONS

Task: 1, 2

States (pages)        Temporal deviations
index.php             27
register.php          23
Selection Rules       7
Category cat=2        14
Category cat=1        7
Category cat=5        6
Details detail=18     6
Details detail=31     5
My Selections         23
Category cat=5        23
Category cat=2        8
Details detail=9      7
Details detail=69     5
My Selections         6

VII. CASE STUDY AND ANALYSIS

We next describe some specific results from applying our method to the FG 2009 website. We collected Web server access log data for the first three days after its deployment. The server log includes about 3000 entries. After preprocessing the raw log data using our tool, we identified 58 unique users and 81 sessions. Then, we constructed four event models for four typical tasks and extracted 95 trails for these tasks. Meanwhile, a designer with three years of GUI design experience and an expert with five years of experience in human factors practice for the Web constructed four IUIP models for the same tasks based on their cognition of users' interactive behavior.

By checking the extracted usage patterns against the four IUIP

models, we obtained logical and temporal deviations shown in

Tables I and II and identified 17 usability issues or potential

usability problems. Some usability issues were identified by

both logical and temporal deviation analyses. Next, we further

analyze these deviations for usability problem identification and

improvement.

In Table I, 16 deviations took place on the page index.php. The unanticipated followup page is login.php, followed by the page index.php?f=t (login failure). On further review of the index page, we found that the page design was too simplistic: no instructions were provided to help users log in or register. We inferred that some users with limited online shopping experience were trying to use their regular email addresses and passwords to log in to the FG 2009 website. They did not realize that they first needed to use their email addresses to register as new users and set up a password. Therefore, numerous login failures occurred. Once this issue was identified, the index page was redesigned to instruct users to log in or register.
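The kind of check that surfaces the index.php problem can be sketched as below. This is a hedged illustration, not the authors' test oracle: it assumes a logical deviation is counted whenever the page a user visits next is not among the followup pages anticipated for the current state, and the model contents, trails, and the name `count_logical_deviations` are all hypothetical.

```python
from collections import Counter

def count_logical_deviations(expected_next, trails):
    """Count, per page, transitions that the anticipated-usage model does not allow.

    expected_next: dict mapping page -> set of anticipated followup pages.
    trails:        list of page sequences extracted from the server log.
    """
    deviations = Counter()
    for trail in trails:
        for current, following in zip(trail, trail[1:]):
            allowed = expected_next.get(current)
            # Pages outside the model are skipped rather than penalized.
            if allowed is not None and following not in allowed:
                deviations[current] += 1
    return deviations

# Hypothetical model: from index.php, users are expected to register or browse.
expected_next = {"index.php": {"register.php", "show.php"}}
trails = [
    ["index.php", "login.php", "index.php?f=t"],
    ["index.php", "register.php"],
    ["index.php", "login.php", "index.php?f=t", "index.php", "register.php"],
]
logical = count_logical_deviations(expected_next, trails)
print(logical["index.php"])
```

In this toy input, the two jumps from index.php to login.php are counted against index.php, while the anticipated move to register.php is not.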

We also found some structural design issues. For example, we observed that some users repeatedly visited the page Selection Rules. It is likely that when users were not permitted to select furniture in some categories (the FG website limited each user to one piece of furniture per category), they had to go to the page Selection Rules to find the reason. To reduce these redundant operations and improve user experience, the help function for selection rules should be redesigned to make it more convenient for users to consult.


Temporal deviations also highlight usability problems linked to pages where users spent excessive time. For example, Table II shows that 23 users spent excessive time on the page register.php. After inspecting this page, domain experts recommended that some page elements be redesigned to enable more efficient completion of this task, such as 1) replacing the text area control with a list box to ensure input data validity and reduce effort and 2) setting default values for the items city and state for the predominantly local user population of the FG website.

We also identified some furniture category pages with large temporal deviations. After further analysis, the experts noticed that the thumbnails for all furniture items were displayed on one page. Users had to wait for the whole page to display and scroll down the screen several times to view all the furniture items. As a result, the addition of page navigation and "View all" functions was suggested to improve the efficiency of these operations.

As illustrated in the above examples, some navigation-related

Web usability problems can be identified and corrected by further analyzing the pages with large deviations.

VIII. VALIDATION

We next provide an initial validation of our method with the

case study of the FG website over two successive versions.

The validation is guided by three subquestions related to our

validation question RQ3 first introduced in Section III.

1) RQ3.1: Can our method be applied in an iterative usability

engineering process?

2) RQ3.2: Can the problematic areas identified by our

method be confirmed as real usability issues by usability specialists?

3) RQ3.3: Can our method contribute to usability improvement?

A. Applicability (RQ3.1)

Our method is applicable to the late phases of Web application development and throughout the continuous evolution and maintenance process, whenever Web server logs are available. In the iterative usability engineering process, our method can be incorporated into an integrated strategy for usability assurance. Once a beta website is available and related Web server logs are obtained, our method can be applied to help identify usability problems. The usability issues identified by our method can also provide usability experts with valuable information, such as which types of usability issues and which specific Web pages and designs deserve more attention. Similarly, the usability problems found by our method can help the usability testing team prepare and execute relevant test scenarios. Our method can be used continuously for usability improvement of the constantly evolving website after its first operational use.

B. Confirmation of Identified Problems (RQ3.2)

We need to confirm that the problematic areas identified by our method are indeed usability problems. Usability, unlike other quality attributes such as reliability or capability, typically involves users' perception and experts' subjective judgment. Therefore, in the absence of direct user feedback, validation of the effectiveness of usability practice must be performed by usability experts.

A designer of the FG website with three years of GUI design experience and an expert with five years of experience in human factors practice for the Web were invited to serve as the usability specialists to review and validate our results. Among the 17 usability problems identified by our method for the FG website, as described in Section VII, three problems were combined into one, leaving 15. All 15 problems were confirmed as usability problems by the usability specialists.

Additionally, it is meaningful to determine the severity of the

problems to assess their impact on the usability of the system.

The four-level severity measure [5] was adopted in our study.

Among 15 identified usability problems, there was one problem

that prevented the completion of a task (severity level 1), ten

problems that created significant delay and frustration (level 2),

three problems that had a minor effect on usability of the system

(level 3), and one problem that pointed to a future enhancement

(level 4). The results indicate that our method can effectively

identify some usability problems, especially those frustrating to

users.

C. Impact on Usability Improvement (RQ3.3)

We used version 2009 and version 2010 of the FG website

to evaluate the impact of our method on usability improvement.

All the usability problems in FG 2009 identified by our method

were fixed in FG 2010. FG 2009 and FG 2010 users are different

incoming classes of international students, but they share many

common characteristics. Therefore, FG 2009 and FG 2010 can

be used to compare usability change and to evaluate usability

improvement due to the introduction and application of our

method.

For well-defined tasks, we can measure the task success rate

and compare it in successive iterations to evaluate usability

improvement. Another way is to examine the amount of effort

required to complete a task, typically by measuring the number

of steps (pages) required to perform the task [41]. Time-on-task

can also be used to measure usability efficiency.

Table III shows the usability improvement between FG 2009 and FG 2010 in terms of changes in task success rate, average effort-on-task, and average time-on-task. The overall task success rate improved by 8.2 percentage points, from 77.6% to 85.8%. The average number of steps for each task decreased from 7.7 to 5.8, and the average time for each task was reduced from 2.5 to 0.8 min. The usability of the FG website has apparently improved, with a higher task success rate and reduced effort and time required for the same tasks.
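The overall success rates can be reproduced from the per-task counts listed in Table III. The short sketch below assumes that the overall figures are pooled over all task attempts rather than averaged per task; this is our reading of the numbers, not something the text states, and the function name `pooled_success_rate` is hypothetical.

```python
# (successes, attempts) per task, as listed in Table III.
fg2009 = [(45, 53), (38, 45), (37, 50), (5, 13)]
fg2010 = [(37, 39), (35, 37), (40, 48), (9, 17)]

def pooled_success_rate(tasks):
    """Return the success rate (in percent) pooled over all attempts."""
    successes = sum(s for s, _ in tasks)
    attempts = sum(n for _, n in tasks)
    return 100.0 * successes / attempts

rate_2009 = pooled_success_rate(fg2009)
rate_2010 = pooled_success_rate(fg2010)
print(f"{rate_2009:.1f}% -> {rate_2010:.1f}% (+{rate_2010 - rate_2009:.1f} points)")
# prints "77.6% -> 85.8% (+8.2 points)"
```

Pooling gives 125/161 and 121/141, which round to the 77.6% and 85.8% reported as overall values.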

TABLE III
TASK SUCCESS RATES, AVERAGE EFFORT, AND TIME ON TASKS FOR FG 2009 AND FG 2010

         Task success rate                            Average effort (# of steps)    Average time (minutes)
Task     FG 2009         FG 2010         Improvement  FG 2009  FG 2010  Improvement  FG 2009  FG 2010  Improvement
1        45/53 or 84.9%  37/39 or 94.9%  10.0%        5        3        2            2.9      1.2      1.7
2        38/45 or 84.4%  35/37 or 94.6%  10.2%        9        8        1            2.5      1.4      1.1
3        37/50 or 74.0%  40/48 or 83.3%  9.3%         10       7        3            2.4      1.3      1.1
4        5/13 or 38.5%   9/17 or 52.9%   14.5%        4        3        1            0.5      0.4      0.1
Overall  77.6%           85.8%           8.2%         7.7      5.8      1.9          2.5      0.8      1.7

IX. DISCUSSION

Our method is not intended to and cannot replace traditional usability practices. First, we need to extract usage patterns from Web server logs, which can only become available after Web applications are deployed. Therefore, our method cannot be

directly used to identify usability problems for the initial prototype design, as can be done through traditional heuristic evaluation by experts, nor can it replace usability testing during initial Web application development, before the application is fully operational and made available to users. After the initial deployment of a Web application, our method can be used to identify navigation-related usability issues and improve usability. In addition, it can be used to develop questions or hypotheses for traditional heuristic evaluation and usability testing for subsequent updates and improvements in the iterative development and maintenance processes for Web applications. Therefore, our method can complement existing usability practices and become an important part of an integrated strategy for Web usability assurance.

Traditional usability testing involving actual users requires significant time and effort [26]. In heuristic evaluation, significant effort is required for human factors experts to inspect a large number of Web pages and interface elements. In contrast, our method can be performed semiautomatically and independently with the tools and models we developed. The total cost includes three parts: 1) model construction (preparation); 2) test oracle implementation to identify usability problems; and 3) followup inspection. Although usability experts are needed to construct event and IUIP models in our method, this is a one-time effort that cumulatively injects the experts' domain knowledge and cognition. The automated tasks in our method include log data processing, trail tree construction, trail extraction, and the comparison between IUIP models and user trails to calculate logical and temporal deviations. This type of method offers substantial benefits over the alternative of time-consuming, unaided analysis of potentially large amounts of raw data [19]. Human factors experts must manually inspect the output results to identify usability problems. However, they only need to inspect and confirm the usability problems identified by our method. For subsequent iterations, our method would be even more cost-effective because of 1) reuse of the IUIP models and event models, possibly with minor adjustment; 2) automated tool support for a significant part of the activities involved; and 3) limited scope of followup inspections.

There are some issues with server log data, including unique

user identification and caching [8]. Typically, each unique IP

address in a server log may represent one or more unique

users. Pages loaded from client- or proxy-side cache will not

be recorded in the server log. These issues make server log

data sometimes incomplete or inaccurate. To alleviate these

problems, we identified unique users through a combination

of IP addresses, user agents, and referrers and used site topology and referrer information along with temporal information

to infer missing references. We identified 58 users for the FG

2009 website this way, close to the 55 registered students in the

registration system. A few users changed their computer configuration during this period, leading to their misidentification as

new users.
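One way to combine IP address, user agent, and referrer for user identification is sketched below. It is only an approximation under stated assumptions: the exact rules of the authors' tool are not given here, the function name `identify_users` and the log fields are hypothetical, and the inference of missing (cached) references from site topology is not covered.

```python
def identify_users(entries):
    """Assign a user index to each log entry.

    entries: list of dicts with keys 'ip', 'agent', 'referrer', 'url'.
    Two requests are attributed to the same user when IP and user agent
    match and the referrer is empty or a page that user already visited.
    """
    users = []   # each user: {"key": (ip, agent), "visited": set of urls}
    labels = []
    for e in entries:
        key = (e["ip"], e["agent"])
        match = None
        for uid, user in enumerate(users):
            if user["key"] != key:
                continue
            # Referrer heuristic: a request arriving from a page this user
            # never visited probably belongs to someone else sharing the
            # same IP address and browser.
            if e["referrer"] in ("", "-") or e["referrer"] in user["visited"]:
                match = uid
                break
        if match is None:
            users.append({"key": key, "visited": set()})
            match = len(users) - 1
        users[match]["visited"].add(e["url"])
        labels.append(match)
    return labels

# Hypothetical log entries, all from one shared IP address.
log = [
    {"ip": "10.0.0.1", "agent": "Firefox", "referrer": "-", "url": "/index.php"},
    {"ip": "10.0.0.1", "agent": "Firefox", "referrer": "/index.php", "url": "/register.php"},
    {"ip": "10.0.0.1", "agent": "Chrome", "referrer": "-", "url": "/index.php"},
    {"ip": "10.0.0.1", "agent": "Firefox", "referrer": "/show.php?cat=2", "url": "/show.php?detail=9"},
]
labels = identify_users(log)
print(labels)
```

A heuristic like this also shows why a user who changes browser or configuration mid-study would be counted as a new user, as noted above.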

There are several limitations due to the data granularity of

our current study. Our method captures user interactions at the

site and page levels and, thus, cannot reveal user experiences

at the page element level. For Web 2.0 applications that are

no longer based on synchronous page requests, the server log

may not contain enough information. We need to adapt our

current method or develop new strategies to deal with these new

Web applications. However, the usability issues associated with

specific pages identified by our method may contain clues about

possible usability issues with embedded page elements. In an

integrated strategy, these clues will help experts identify page

element problems in followup inspections. These issues will be

explored further in followup studies.

There are also limitations due to the techniques and models we employed in our method. We used cognitive user models as the basis to check extracted usage patterns. Our IUIP cognitive models are based on experts' cognition of human behavior, particularly Web interaction behavior. The experts' cognitive knowledge and experience directly affect the IUIP models. To avoid the limitation of ACT-R due to the complexity of its model development, we developed our own cognitive architecture and provided graphic notations for IUIP model construction. These graphic notations and the associated operation rules need to be enhanced and validated in constructing IUIP models for large-scale Web applications with complex interactive tasks.

Finally, there are limitations in the scope and scalability of our method. We focused only on the functional convenience aspect of usability, where specific effectiveness (task completion) and efficiency (time spent on tasks) problems related to Web navigation can be identified and corrected. We did not directly address the user satisfaction and presentation aspects of usability. More expertise in cognition and analysis of subtleties in user preferences is required to address these more subjective aspects of usability. For scalability, we plan to validate our method in large systems and continuously improve our tools. Our method involves several manual steps, such as constructing IUIP models and having experts confirm identified usability problems, which may be difficult and expensive for large-scale Web applications. However, the experts' manual effort involved in our method is substantially less than that of traditional usability evaluation by experts. Furthermore, usability testing is typically expensive for large systems and faces its own scalability problems. An integrated strategy might help alleviate the scalability problem, not only for our method but also for traditional usability practices.

X. CONCLUSION

We have developed a new method for the identification and improvement of navigation-related Web usability problems by checking extracted usage patterns against cognitive user models. As demonstrated by our case study, our method can identify areas with usability issues to help improve the usability of Web systems. Once a website is operational, our method can be continuously applied to drive ongoing refinements. In contrast with traditional software products and systems, Web-based applications have shortened development cycles and prolonged maintenance cycles [24]. Our method can contribute significantly to continuous usability improvement over these prolonged maintenance cycles. The usability improvement in successive iterations can be quantified by progressively better effectiveness (higher task completion rate) and efficiency (less time for given tasks).

Our method is not intended to and cannot replace heuristic usability evaluation by experts and user-centered usability testing. It complements these traditional usability practices and can be incorporated into an integrated strategy for Web usability assurance. With automated tool support for a significant part of the activities involved, our method is cost-effective. It would be particularly valuable in two common situations: when an adequate number of actual users cannot be involved in testing and when cognitive experts are in short supply. Server logs in our method represent real users' operations in natural working conditions, and our IUIP models, injected with human behavior cognition, represent part of cognitive experts' work. We are currently integrating these modeling and analysis tools into a tool suite that supports measurement, analysis, and overall quality improvement for Web applications.

In the future, we need to carry out validation studies with large-scale Web applications. We also plan to explore additional approaches to discover Web usage patterns and related usability problems that are generalizable to other interesting domains. For example, we have already started exploring deviation calculation and analysis at the trail level instead of at the individual page level. Such analyses might be more meaningful and yield more interesting results for Web applications with complex structure and operation sequences. Our IUIP modeling architecture and supporting tools also need to be further enhanced and optimized for more complex tasks. We will also further expand our usability research to cover more usability aspects to improve Web users' overall satisfaction.


ACKNOWLEDGMENT

The authors would like to thank the anonymous reviewers for

their constructive comments and suggestions.

REFERENCES

[1] A. Agarwal and M. Prabaker, "Building on the usability study: Two explorations on how to better understand an interface," in Human-Computer Interaction. New Trends, J. Jacko, Ed. New York, NY, USA: Springer, 2009, pp. 385-394.
[2] J. R. Anderson, D. Bothell, M. D. Byrne, S. Douglass, C. Lebiere, and Y. Qin, "An integrated theory of the mind," Psychol. Rev., vol. 111, pp. 1036-1060, 2004.
[3] T. Arce, P. E. Roman, J. D. Velasquez, and V. Parada, "Identifying web sessions with simulated annealing," Expert Syst. Appl., vol. 41, no. 4, pp. 1593-1600, 2014.
[4] M. F. Arlitt and C. L. Williamson, "Internet Web servers: Workload characterization and performance implications," IEEE/ACM Trans. Netw., vol. 5, no. 5, pp. 631-645, Oct. 1997.
[5] C. M. Barnum and S. Dragga, Usability Testing and Research. White Plains, NY, USA: Longman, Oct. 2001.
[6] B. Beizer, Software Testing Technique. Boston, MA, USA: Int. Thomson Comput. Press, 1990.
[7] J. L. Belden, R. Grayson, and J. Barnes, "Defining and testing EMR usability: Principles and proposed methods of EMR usability evaluation and rating," Healthcare Information and Management Systems Society, Chicago, IL, USA, Tech. Rep., 2009. [Online]. Available: http://www.himss.org/ASP/ContentRedirector.asp?ContentID=71733
[8] M. C. Burton and J. B. Walther, "The value of Web log data in use-based design and testing," J. Comput.-Mediated Commun., vol. 6, no. 3, p. 0, 2001.
[9] M. D. Byrne, "ACT-R/PM and menu selection: Applying a cognitive architecture to HCI," Int. J. Human-Comput. Stud., vol. 55, no. 1, pp. 41-84, 2001.
[10] T. Carta, F. Paternò, and V. F. D. Santana, "Web usability probe: A tool for supporting remote usability evaluation of web sites," in Human-Computer Interaction - INTERACT 2011. New York, NY, USA: Springer, 2011, pp. 349-357.
[11] G. Christou, F. E. Ritter, and R. J. Jacob, "CODEIN - A new notation for GOMS to handle evaluations of reality-based interaction style interfaces," Int. J. Human-Comput. Interaction, vol. 28, no. 3, pp. 189-201, 2012.
[12] M. A. Cohen, F. E. Ritter, and S. R. Haynes, "Applying software engineering to agent development," AI Mag., vol. 31, no. 2, pp. 25-44, 2010.
[13] J. Conallen, Building Web Applications with UML. Reading, MA, USA: Addison-Wesley, 2003.
[14] R. Cooley, B. Mobasher, and J. Srivastava, "Data preparation for mining World Wide Web browsing patterns," Knowl. Inf. Syst., vol. 1, no. 1, pp. 5-32, 1999.
[15] O. L. Georgeon, A. Mille, T. Bellet, B. Mathern, and F. E. Ritter, "Supporting activity modelling from activity traces," Expert Syst., vol. 29, no. 3, pp. 261-275, 2012.
[16] S. R. Haynes, M. A. Cohen, and F. E. Ritter, "Designs for explaining intelligent agents," Int. J. Human-Comput. Stud., vol. 67, no. 1, pp. 90-110, Jan. 2009.
[17] M. Heinath, J. Dzaack, and A. Wiesner, "Simplifying the development and the analysis of cognitive models," in Proc. Eur. Cognitive Sci. Conf., Delphi, Greece, 2007, pp. 446-451.
[18] D. M. Hilbert and D. F. Redmiles, "Extracting usability information from user interface events," ACM Comput. Surveys, vol. 32, no. 4, pp. 384-421, 2000.
[19] M. Y. Ivory and M. A. Hearst, "The state of the art in automating usability evaluation of user interfaces," ACM Comput. Surveys, vol. 33, no. 4, pp. 470-516, 2001.
[20] C. Kallepalli and J. Tian, "Measuring and modeling usage and reliability for statistical Web testing," IEEE Trans. Softw. Eng., vol. 27, no. 11, pp. 1023-1036, Nov. 2001.
[21] D. E. Kieras and D. E. Meyer, "An overview of the EPIC architecture for cognition and performance with application to human-computer interaction," Human-Comput. Interaction, vol. 12, no. 4, pp. 391-438, 1997.
[22] S. J. Koyani, R. W. Bailey, and J. R. Nall, Research-Based Web Design and Guidelines. Washington, DC, USA: U.S. Dept. Health Human Services, 2004.
[23] B. Liu, Web Data Mining: Exploring Hyperlinks, Contents, and Usage Data. New York, NY, USA: Springer, 2007.
[24] A. McDonald and R. Welland, "Web engineering in practice," in Proc. 10th Int. World Wide Web Conf., May 2001, pp. 21-30.
[25] J. H. Morgan, C.-Y. Cheng, C. Pike, and F. E. Ritter, "A design, tests and considerations for improving keystroke and mouse loggers," Interacting Comput., vol. 25, no. 3, pp. 242-258, 2013.
[26] J. Nielsen, Usability Engineering. San Mateo, CA, USA: Morgan Kaufmann, 1993.
[27] L. Paganelli and F. Paternò, "Tools for remote usability evaluation of web applications through browser logs and task models," Behavior Res. Methods, Instrum., Comput., vol. 35, no. 3, pp. 369-378, 2003.
[28] D. Peebles and A. L. Cox, "Modeling interactive behaviour with a rational cognitive architecture," in Human Computer Interaction: Concepts, Methodologies, Tools, and Applications, C. S. Ang and P. Zaphiris, Eds. Hershey, PA, USA: Inf. Sci. Ref., 2008, pp. 1154-1172.
[29] R. W. Pew and A. S. Mavor, Eds., Human-System Integration in the System Development Process: A New Look. Washington, DC, USA: Natl. Acad. Press, 2007.
[30] M. Rauterberg, "AMME: An automatic mental model evaluation to analyse user behaviour traced in a finite, discrete state space," Ergonomics, vol. 36, no. 11, pp. 1369-1380, 1993.
[31] F. E. Ritter, A. R. Freed, and O. L. Haskett, "Discovering user information needs: The case of university department web sites," ACM Interactions, vol. 12, no. 5, pp. 19-27, 2005.
[32] P. M. Sanderson and C. Fisher, "Exploratory sequential data analysis: Foundations," Human-Comput. Interaction, vol. 9, nos. 3/4, pp. 251-317, 1994.
[33] P. M. Sanderson, M. D. McNeese, and B. S. Zaff, "Handling complex real-world data with two cognitive engineering tools: COGENT and MacSHAPA," Behavior Res. Methods, Instrum., Comput., vol. 26, no. 2, pp. 117-124, 1994.
[34] J. Sant, A. Souter, and L. Greenwald, "An exploration of statistical models for automated test case generation," ACM SIGSOFT Softw. Eng. Notes, vol. 30, pp. 1-7, 2005.
[35] R. Sedgewick and K. Wayne, Algorithms. Reading, MA, USA: Addison-Wesley, 1988.
[36] L. Tauscher and S. Greenberg, "Revisitation patterns in World Wide Web navigation," in Proc. ACM SIGCHI Conf. Human Factors Comput. Syst., New York, NY, USA, 1997, pp. 399-406.
[37] Tec-Ed, "Assessing web site usability from server log files," White Paper, Tec-Ed, 1999.
[38] L.-H. Teo, B. John, and M. Blackmon, "Cogtool-explorer: A model of goal-directed user exploration that considers information layout," in Proc. SIGCHI Conf. Human Factors Comput. Syst., New York, NY, USA, 2012, pp. 2479-2488.
[39] J. Tian, S. Rudraraju, and Z. Li, "Evaluating web software reliability based on workload and failure data extracted from server logs," IEEE Trans. Softw. Eng., vol. 30, no. 11, pp. 754-769, Nov. 2004.
[40] V. C. Trinh and A. J. Gonzalez, "Discovering contexts from observed human performance," IEEE Trans. Human-Mach. Syst., vol. 43, no. 4, pp. 359-370, Jul. 2013.
[41] T. Tullis and B. Albert, Measuring the User Experience: Collecting, Analyzing, and Presenting Usability Metrics (Interactive Technologies). San Mateo, CA, USA: Morgan Kaufmann, 2008.
[42] J. S. Valacich, D. V. Parboteeah, and J. D. Wells, "The online consumer's hierarchy of needs," Comm. ACM, vol. 50, no. 9, pp. 84-90, Sep. 2007.

Ruili Geng (M'12) received the B.S. degree in computer application from the University of Jinan, Jinan, China, in 1998, and the M.S. degree in computer science from Beijing Technology and Business University, Beijing, China, in 2004. She is currently working toward the Ph.D. degree in computer science with Southern Methodist University, Dallas, TX, USA.

She has worked as a Software Engineer, Test Engineer, and Project Manager for several IT companies, including Microsoft China, between 1998 and 2008. Her research interests include software quality, usability, reliability, software measurement, and usage mining.

Jeff Tian (M'89) received the B.S., M.S., and Ph.D. degrees from Xi'an Jiaotong University, Xi'an, China, Harvard University, Cambridge, MA, USA, and the University of Maryland, College Park, MD, USA, respectively.

He was with the IBM Toronto Lab from 1992 to 1995. Since 1995, he has been with Southern Methodist University, Dallas, TX, USA, where he is currently a Professor of computer science and engineering. Since 2012, he has also been a Shaanxi 100 Professor with the School of Computer Science, Northwestern Polytechnical University, Xi'an. His current research interests include software quality, reliability, usability, testing, measurement, and Web/service/cloud computing.

Dr. Tian is a Member of the ACM.

Vous aimerez peut-être aussi

- WiMAX WiFi APRICOT2012 PDFDocument77 pagesWiMAX WiFi APRICOT2012 PDFMalathy KrishnanPas encore d'évaluation

- Introduction To NetworksDocument38 pagesIntroduction To NetworksMalathy KrishnanPas encore d'évaluation

- Improving Communications Service Provider Performance With Big DataDocument31 pagesImproving Communications Service Provider Performance With Big DataNidalPas encore d'évaluation

- ZigBee TutorialDocument53 pagesZigBee TutorialMalathy Krishnan100% (1)

- J2MEDocument20 pagesJ2MEMalathy KrishnanPas encore d'évaluation

- FtyDocument2 pagesFtyMalathy KrishnanPas encore d'évaluation

- 802 11Document253 pages802 11wityamitPas encore d'évaluation

- Wireless NetworksDocument47 pagesWireless NetworksRashmi_Gautam_7461Pas encore d'évaluation

- Ch3. ZigBeeDocument26 pagesCh3. ZigBeeMalathy KrishnanPas encore d'évaluation

- 13IT702 SyllabusDocument3 pages13IT702 SyllabusMalathy KrishnanPas encore d'évaluation

- Cateva RaspunsuriDocument20 pagesCateva RaspunsuriIonut PiticasPas encore d'évaluation

- J2MEDocument20 pagesJ2MEMalathy KrishnanPas encore d'évaluation

- FtyDocument2 pagesFtyMalathy KrishnanPas encore d'évaluation

- Crypot Ooad LabDocument10 pagesCrypot Ooad LabMalathy KrishnanPas encore d'évaluation

- ProgramDocument25 pagesProgramMalathy KrishnanPas encore d'évaluation

- The J2ME Architecture Comprises Three Software LayersDocument1 pageThe J2ME Architecture Comprises Three Software LayersMalathy KrishnanPas encore d'évaluation

- Kushal Modi (09BIT056)Document36 pagesKushal Modi (09BIT056)Malathy KrishnanPas encore d'évaluation

- The J2ME Architecture Comprises Three Software LayersDocument1 pageThe J2ME Architecture Comprises Three Software LayersMalathy KrishnanPas encore d'évaluation

- The J2ME Architecture Comprises Three Software LayersDocument1 pageThe J2ME Architecture Comprises Three Software LayersMalathy KrishnanPas encore d'évaluation

- Secret of Happiness EbookDocument122 pagesSecret of Happiness EbookSameer Darekar100% (13)

- Kushal Modi (09BIT056)Document36 pagesKushal Modi (09BIT056)Malathy KrishnanPas encore d'évaluation

- The J2ME Architecture Comprises Three Software LayersDocument1 pageThe J2ME Architecture Comprises Three Software LayersMalathy KrishnanPas encore d'évaluation

- 04721574Document15 pages04721574Malathy KrishnanPas encore d'évaluation

- PPTDocument5 pagesPPTMalathy KrishnanPas encore d'évaluation

- Paper Preparation TemplateDocument3 pagesPaper Preparation TemplateMalathy KrishnanPas encore d'évaluation

- 10 Generic English RtiDocument7 pages10 Generic English RtiMalathy KrishnanPas encore d'évaluation

- Shoe Dog: A Memoir by the Creator of NikeD'EverandShoe Dog: A Memoir by the Creator of NikeÉvaluation : 4.5 sur 5 étoiles4.5/5 (537)

- Grit: The Power of Passion and PerseveranceD'EverandGrit: The Power of Passion and PerseveranceÉvaluation : 4 sur 5 étoiles4/5 (587)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceD'EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceÉvaluation : 4 sur 5 étoiles4/5 (894)

- The Yellow House: A Memoir (2019 National Book Award Winner)D'EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Évaluation : 4 sur 5 étoiles4/5 (98)

- LLRP 1 0 1-Standard-20070813Document194 pagesLLRP 1 0 1-Standard-20070813Keny BustillosPas encore d'évaluation

- VSAN Stretched Cluster & 2 Node GuideDocument129 pagesVSAN Stretched Cluster & 2 Node GuideNedeljko MatejakPas encore d'évaluation

- Issue14final PDFDocument51 pagesIssue14final PDFPOWER NewsPas encore d'évaluation

- Isolated Footing: BY: Albaytar, Mark Joseph A. Billones, Joyce Anne CDocument2 pagesIsolated Footing: BY: Albaytar, Mark Joseph A. Billones, Joyce Anne CMark JosephPas encore d'évaluation

- Bootstrapping Scrum and XP Under Crisis: Crisp AB, Sweden Henrik - Kniberg@crisp - Se, Reza - Farhang@crisp - SeDocument9 pagesBootstrapping Scrum and XP Under Crisis: Crisp AB, Sweden Henrik - Kniberg@crisp - Se, Reza - Farhang@crisp - SeateifPas encore d'évaluation

- DCOM Configuration OPC and Trend Server USDocument35 pagesDCOM Configuration OPC and Trend Server USapi-3804102100% (1)

- Part2 5548C E60-E200 FunctDocument111 pagesPart2 5548C E60-E200 FuncterbiliPas encore d'évaluation

- ANALYSIS OF PRECAST SEGMENTAL CONCRETE BRIDGESDocument32 pagesANALYSIS OF PRECAST SEGMENTAL CONCRETE BRIDGESMathew Sebastian100% (6)

- Daftar GedungDocument62 pagesDaftar GedungDewi SusantiPas encore d'évaluation

- Architectural Design-Vi Literature Study of MallDocument38 pagesArchitectural Design-Vi Literature Study of Malldevrishabh72% (60)

- Nucor Grating Catalogue 2015Document64 pagesNucor Grating Catalogue 2015Dan Dela PeñaPas encore d'évaluation

- Yonah / Calistoga / ICH7-MDocument42 pagesYonah / Calistoga / ICH7-MjstefanisPas encore d'évaluation

- Home Automation SystemDocument18 pagesHome Automation SystemChandrika Dalakoti77% (13)

- Soal Us Mulok Bahasa Inggris SDDocument4 pagesSoal Us Mulok Bahasa Inggris SDukasyah abdurrahmanPas encore d'évaluation

- Citrix Virtual Desktop Handbook (7x)Document220 pagesCitrix Virtual Desktop Handbook (7x)sunildwivedi10Pas encore d'évaluation

- Siemens 3113 PDFDocument359 pagesSiemens 3113 PDFIon DogeanuPas encore d'évaluation

- Egyptian Architecture: The Influence of Geography and BeliefsDocument48 pagesEgyptian Architecture: The Influence of Geography and BeliefsYogesh SoodPas encore d'évaluation

- How McAfee Took First Step To Master Data Management SuccessDocument3 pagesHow McAfee Took First Step To Master Data Management SuccessFirst San Francisco PartnersPas encore d'évaluation

- Building Construction ARP-431: Submitted By: Shreya Sood 17BAR1013Document17 pagesBuilding Construction ARP-431: Submitted By: Shreya Sood 17BAR1013Rithika Raju ChallapuramPas encore d'évaluation

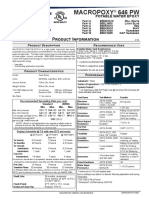

- Macropoxy 646 PW: Protective & Marine CoatingsDocument4 pagesMacropoxy 646 PW: Protective & Marine CoatingsAnn HewsonPas encore d'évaluation

- Varicondition DX enDocument5 pagesVaricondition DX encarlos16702014Pas encore d'évaluation

- As 400 User GuideDocument95 pagesAs 400 User GuideDSunte WilsonPas encore d'évaluation

- Configure Nokia E71WiFi SIP Client With The 3300Document14 pagesConfigure Nokia E71WiFi SIP Client With The 3300Angelo IonPas encore d'évaluation

- Get mailbox quotas and usageDocument4 pagesGet mailbox quotas and usagecopoz_copozPas encore d'évaluation

- S7 200 CommunicationDocument34 pagesS7 200 CommunicationsyoussefPas encore d'évaluation

- MPS Manual 9Document4 pagesMPS Manual 9صدام حسینPas encore d'évaluation

- Setting Up Sophos + Amavis For Postfix: G.Czaplinski@prioris - Mini.pw - Edu.plDocument5 pagesSetting Up Sophos + Amavis For Postfix: G.Czaplinski@prioris - Mini.pw - Edu.plsolocheruicPas encore d'évaluation

- AirbagDocument2 pagesAirbagawesomeyogeshwarPas encore d'évaluation

- Huawei HCNA-VC Certification TrainingDocument3 pagesHuawei HCNA-VC Certification TrainingArvind JainPas encore d'évaluation

- Central Processing Unit (The Brain of The Computer)Document36 pagesCentral Processing Unit (The Brain of The Computer)gunashekar1982Pas encore d'évaluation