Function of A Random Variable and Its Distribution Topics

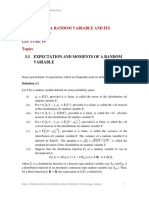

NPTEL- Probability and Distributions

MODULE 3

FUNCTION OF A RANDOM VARIABLE AND ITS

DISTRIBUTION

LECTURE 15

Topics

3.4 PROPERTIES OF RANDOM VARIABLES HAVING

THE SAME DISTRIBUTION

3.5 PROBABILITY AND MOMENT INEQUALITIES

3.5.1 Markov Inequality

3.5.2 Chebyshev Inequality

3.4 PROPERTIES OF RANDOM VARIABLES HAVING

THE SAME DISTRIBUTION

We begin this section with the following definition.

Definition 4.1

Two random variables $X$ and $Y$, defined on the same probability space $(\Omega, \mathcal{F}, P)$, are said to have the same distribution (written as $X \overset{d}{=} Y$) if they have the same distribution function, i.e., if $F_X(x) = F_Y(x)$, $\forall x \in \mathbb{R}$.

Theorem 4.1

(i) Let $X$ and $Y$ be random variables of discrete type with p.m.f.s $f_X$ and $f_Y$, respectively. Then $X \overset{d}{=} Y$ if, and only if, $f_X(x) = f_Y(x)$, $\forall x \in \mathbb{R}$;

(ii) Let $X$ and $Y$ be random variables having distribution functions that are differentiable everywhere except, possibly, on some finite sets. Then both of them are of absolutely continuous type. Moreover, $X \overset{d}{=} Y$ if, and only if, there exist versions of p.d.f.s $f_X$ and $f_Y$ of $X$ and $Y$, respectively, such that $f_X(x) = f_Y(x)$, $\forall x \in \mathbb{R}$.

Dept. of Mathematics and Statistics Indian Institute of Technology, Kanpur 1


Proof.

(i) Suppose that $f_X(x) = f_Y(x)$, $\forall x \in \mathbb{R}$. Then, clearly, $F_X(x) = F_Y(x)$, $\forall x \in \mathbb{R}$, and therefore $X \overset{d}{=} Y$. Conversely, suppose that $X \overset{d}{=} Y$, i.e., $F_X(x) = F_Y(x) = G(x)$, say, $\forall x \in \mathbb{R}$. Then

$$\{x \in \mathbb{R}: F_X(x) - F_X(x-) > 0\} = \{x \in \mathbb{R}: F_Y(x) - F_Y(x-) > 0\} = \{x \in \mathbb{R}: G(x) - G(x-) > 0\}$$

$$\Rightarrow S_X = S_Y = S, \text{ say}.$$

Moreover,

$$f_X(x) = f_Y(x) = \begin{cases} G(x) - G(x-), & \text{if } x \in S \\ 0, & \text{otherwise} \end{cases}.$$

(ii) Suppose that $f_X(x) = f_Y(x)$, $\forall x \in \mathbb{R}$, for some versions $f_X$ and $f_Y$ of the p.d.f.s of $X$ and $Y$, respectively. Then, clearly, $F_X(x) = F_Y(x)$, $\forall x \in \mathbb{R}$, and therefore $X \overset{d}{=} Y$. Conversely, suppose that $X \overset{d}{=} Y$, i.e., suppose that $F_X(x) = F_Y(x) = G(x)$, say, $\forall x \in \mathbb{R}$. By the hypothesis, $G$ is differentiable everywhere except possibly on a finite set $C$. Using Remark 4.2 (vii), Module 2, it follows that both $X$ and $Y$ are of absolutely continuous type with a common (version of) p.d.f.

$$g(x) = \begin{cases} G'(x), & \text{if } x \notin C \\ 0, & \text{otherwise} \end{cases}.$$

As a consequence of the above theorem we have the following result.

Theorem 4.2

Let $X$ and $Y$ be two random variables, of either discrete type or of absolutely continuous type, with $X \overset{d}{=} Y$. Then,

(i) for any Borel function $h$, $E(h(X)) = E(h(Y))$, provided the expectations are finite;

(ii) for any Borel function $\psi$, $\psi(X) \overset{d}{=} \psi(Y)$.

Proof.

(i) Since $X \overset{d}{=} Y$, we have $F_X(x) = F_Y(x) = G(x)$, say, $\forall x \in \mathbb{R}$.

Case I. $X$ is of discrete type.

Since $X \overset{d}{=} Y$, using Theorem 4.1 (i), it follows that $S_X = S_Y = S$, say, and $f_X(x) = f_Y(x) = g(x)$, say, $\forall x \in \mathbb{R}$. Therefore,

$$E(h(X)) = \sum_{x \in S} h(x) f_X(x) = \sum_{x \in S} h(x) f_Y(x) = E(h(Y)).$$

Case II. $X$ is of absolutely continuous type.

For simplicity assume that $G$ is differentiable everywhere except possibly on a finite set $C$. Using Remark 4.2 (vii), Module 2, we may take

$$f_X(x) = f_Y(x) = \begin{cases} G'(x), & \text{if } x \notin C \\ 0, & \text{if } x \in C \end{cases}.$$

Therefore,

$$E(h(X)) = \int_{-\infty}^{\infty} h(x) f_X(x)\,dx = \int_{-\infty}^{\infty} h(x) f_Y(x)\,dx = E(h(Y)).$$

(ii) Fix $a \in \mathbb{R}$. On taking

$$h(x) = I_{(-\infty, a]}(\psi(x)) = \begin{cases} 1, & \text{if } \psi(x) \le a \\ 0, & \text{if } \psi(x) > a \end{cases}$$

in (i), we get

$$E\big(I_{(-\infty, a]}(\psi(X))\big) = E\big(I_{(-\infty, a]}(\psi(Y))\big)$$

$$\Rightarrow P(\psi(X) \le a) = P(\psi(Y) \le a), \ \forall a \in \mathbb{R}$$

$$\Rightarrow \psi(X) \overset{d}{=} \psi(Y). \ ▄$$

Example 4.1

(i) Let $X$ be a random variable with p.m.f.

$$f_X(x) = \begin{cases} \binom{n}{x} \left(\frac{1}{2}\right)^n, & \text{if } x \in \{0, 1, \ldots, n\} \\ 0, & \text{otherwise} \end{cases},$$

where $n$ is a given positive integer. Let $Y = n - X$. Show that $Y \overset{d}{=} X$ and hence show that $E(X) = \frac{n}{2}$.

(ii) Let $X$ be a random variable with p.d.f.

$$f_X(x) = \frac{e^{-|x|}}{2}, \quad -\infty < x < \infty,$$

and let $Y = -X$. Show that $Y \overset{d}{=} X$ and hence show that $E(X^{2n+1}) = 0$, $n \in \{0, 1, \ldots\}$.

Solution.

(i) Clearly $E(X)$ is finite. Using Example 2.3 it follows that the p.m.f. of $Y = n - X$ is given by

$$f_Y(y) = P(Y = y) = \begin{cases} \binom{n}{y} \left(\frac{1}{2}\right)^n, & \text{if } y \in \{0, 1, \ldots, n\} \\ 0, & \text{otherwise} \end{cases} \ = f_X(y), \ \forall y \in \mathbb{R},$$

i.e., $Y \overset{d}{=} X$. Hence, using Theorem 4.2 (i),

$$E(X) = E(Y) = E(n - X) = n - E(X) \implies E(X) = \frac{n}{2}.$$

(ii) Using Corollary 2.2, it can be shown that $Y = -X$ is a random variable of absolutely continuous type with p.d.f.

$$f_Y(y) = \frac{e^{-|y|}}{2} = f_X(y), \quad -\infty < y < \infty.$$

It follows that $Y \overset{d}{=} X$. It can be easily shown that $E(|X|^r)$ is finite for every $r > -1$. Therefore, for $n \in \{0, 1, 2, \ldots\}$,

$$E(X^{2n+1}) = E(Y^{2n+1}) = -E(X^{2n+1}) \implies E(X^{2n+1}) = 0. \ ▄$$
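The argument in Example 4.1 (i) can be checked by direct enumeration. The following is a minimal sketch, not part of the original notes: the helper names (`pmf_x`, `pmf_of_n_minus_x`, `mean`) and the choice $n = 7$ are assumptions made for illustration. It builds the p.m.f. of $X$ exactly with rational arithmetic, relabels the support to obtain the p.m.f. of $Y = n - X$, and compares the two.

```python
from fractions import Fraction
from math import comb


def pmf_x(n):
    """p.m.f. of X from Example 4.1 (i): C(n, x) / 2^n on {0, ..., n}."""
    return {x: Fraction(comb(n, x), 2 ** n) for x in range(n + 1)}


def pmf_of_n_minus_x(n):
    """p.m.f. of Y = n - X, obtained by relabelling each support point x as n - x."""
    return {n - x: p for x, p in pmf_x(n).items()}


def mean(pmf):
    """Expected value of a discrete random variable given as {value: probability}."""
    return sum(x * p for x, p in pmf.items())


n = 7  # illustrative choice; any positive integer works
same_distribution = pmf_x(n) == pmf_of_n_minus_x(n)
expected_value = mean(pmf_x(n))
print(same_distribution, expected_value)  # True 7/2
```

Because the two dictionaries agree entry by entry, the sketch confirms $Y \overset{d}{=} X$ for this $n$, and the computed mean agrees with $n/2$.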


Definition 4.2

A random variable $X$ is said to have a symmetric distribution about a point $\mu \in \mathbb{R}$ if $X - \mu \overset{d}{=} \mu - X$. ▄

Theorem 4.3

Let $X$ be a random variable having p.d.f./p.m.f. $f_X$ and distribution function $F_X$. Let $\mu \in \mathbb{R}$. Then

(i) the distribution of $X$ is symmetric about $\mu$ if, and only if, $f_X(\mu - x) = f_X(\mu + x)$, $\forall x \in \mathbb{R}$;

(ii) the distribution of $X$ is symmetric about $\mu$ if, and only if, $F_X(\mu + x) + F_X((\mu - x)-) = 1$, $\forall x \in \mathbb{R}$, i.e., if, and only if, $P(X \le \mu + x) = P(X \ge \mu - x)$, $\forall x \in \mathbb{R}$;

(iii) the distribution of $X$ is symmetric about $\mu$ if, and only if, the distribution of $Y = X - \mu$ is symmetric about $0$;

(iv) if the distribution of $X$ is symmetric about $\mu$, then $F_X(\mu-) \le \frac{1}{2} \le F_X(\mu)$;

(v) if the distribution of $X$ is symmetric about $\mu$ and the expected value of $X$ is finite, then $E(X) = \mu$;

(vi) if the distribution of $X$ is symmetric about $0$, then $E(X^{2m+1}) = 0$, $m \in \{0, 1, 2, \ldots\}$, provided the expectations exist.

Proof. For simplicity we will assume that if $X$ is of absolutely continuous type then its distribution function is differentiable everywhere except possibly on a finite set.

(i) Let $Y_1 = X - \mu$ and $Y_2 = \mu - X$. Then the p.d.f.s/p.m.f.s of $Y_1$ and $Y_2$ are given by

$$f_{Y_1}(y) = f_X(\mu + y), \ y \in \mathbb{R}, \quad \text{and} \quad f_{Y_2}(y) = f_X(\mu - y), \ y \in \mathbb{R},$$

respectively. Now, under the hypothesis,

$$\text{the distribution of } X \text{ is symmetric about } \mu \iff Y_1 \overset{d}{=} Y_2$$

$$\iff f_{Y_1}(y) = f_{Y_2}(y), \ \forall y \in \mathbb{R}$$

$$\iff f_X(\mu + y) = f_X(\mu - y), \ \forall y \in \mathbb{R}.$$

(ii) Let $Y_1 = X - \mu$ and $Y_2 = \mu - X$, so that the distribution functions of $Y_1$ and $Y_2$ are given by $F_{Y_1}(x) = F_X(\mu + x)$, $x \in \mathbb{R}$, and $F_{Y_2}(x) = 1 - F_X((\mu - x)-)$, $x \in \mathbb{R}$. Therefore, under the hypothesis,

$$Y_1 \overset{d}{=} Y_2 \iff F_{Y_1}(x) = F_{Y_2}(x), \ \forall x \in \mathbb{R}$$

$$\iff F_X(\mu + x) + F_X((\mu - x)-) = 1, \ \forall x \in \mathbb{R}.$$

(iii) Clearly, with $Y = X - \mu$,

$$\text{the distribution of } X \text{ is symmetric about } \mu \iff X - \mu \overset{d}{=} \mu - X = -(X - \mu) \iff Y \overset{d}{=} -Y.$$

(iv) Using (ii), we have

$$\text{the distribution of } X \text{ is symmetric about } \mu \iff F_X(\mu + x) + F_X((\mu - x)-) = 1, \ \forall x \in \mathbb{R}$$

$$\implies F_X(\mu) + F_X(\mu-) = 1 \quad (\text{taking } x = 0)$$

$$\implies F_X(\mu-) \le \frac{1}{2} \le F_X(\mu),$$

since $F_X(\mu-) \le F_X(\mu)$.

(v) Suppose that $X - \mu \overset{d}{=} \mu - X$ and $E(|X|) < \infty$. Then $E(X - \mu) = E(\mu - X)$ (using Theorem 4.2 (i)) and therefore $E(X) = \mu$.

(vi) Suppose that $X \overset{d}{=} -X$. Then, using Theorem 4.2 (i),

$$E(X^{2m+1}) = E((-X)^{2m+1}), \quad m \in \{0, 1, 2, \ldots\},$$

provided the expectations exist. Therefore,

$$E(X^{2m+1}) = -E(X^{2m+1}), \quad m \in \{0, 1, 2, \ldots\}$$

$$\implies E(X^{2m+1}) = 0, \quad m \in \{0, 1, 2, \ldots\}. \ ▄$$
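Parts (ii), (iv) and (vi) of Theorem 4.3 can be illustrated on a concrete discrete distribution. The sketch below is an illustration only: it assumes the $\text{Bin}(5, 1/2)$ p.m.f. (symmetric about $\mu = 5/2$), and the helper names `cdf` and `cdf_left` are hypothetical. Here `cdf_left(y)` plays the role of the left limit $F_X(y-) = P(X < y)$.

```python
from fractions import Fraction
from math import comb

n = 5
mu = Fraction(n, 2)
# Assumed test distribution: Bin(5, 1/2), which is symmetric about 5/2.
pmf = {k: Fraction(comb(n, k), 2 ** n) for k in range(n + 1)}


def cdf(y):
    """F_X(y) = P(X <= y)."""
    return sum(p for k, p in pmf.items() if k <= y)


def cdf_left(y):
    """F_X(y-) = P(X < y), the left limit of the distribution function at y."""
    return sum(p for k, p in pmf.items() if k < y)


# Offsets x = -4, -3.75, ..., 4 hit both jump points and points between jumps.
offsets = [Fraction(j, 4) for j in range(-16, 17)]

# Theorem 4.3 (ii): F_X(mu + x) + F_X((mu - x)-) = 1 for every tested x.
symmetric = all(cdf(mu + x) + cdf_left(mu - x) == 1 for x in offsets)

# Theorem 4.3 (iv): F_X(mu-) <= 1/2 <= F_X(mu).
median_bracket = cdf_left(mu) <= Fraction(1, 2) <= cdf(mu)

# Theorem 4.3 (vi) applied to X - mu: an odd central moment vanishes.
odd_central_moment = sum((k - mu) ** 3 * p for k, p in pmf.items())

print(symmetric, median_bracket, odd_central_moment)
```

Exact rational arithmetic is used so that the identity in (ii) is tested with `==` rather than a floating-point tolerance.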

Theorem 4.4

Let $X$ and $Y$ be random variables having m.g.f.s $M_X$ and $M_Y$, respectively. Suppose that there exists a positive real number $b$ such that $M_X(t) = M_Y(t)$, $\forall t \in (-b, b)$. Then $X \overset{d}{=} Y$.

Proof. We will provide the proof for the special case where $X$ and $Y$ are of discrete type and $S_X = S_Y \subseteq \{0, 1, 2, \ldots\}$, as the proof for general $X$ and $Y$ is involved. We have

$$M_X(t) = M_Y(t), \ \forall t \in (-b, b)$$

$$\implies \sum_{k=0}^{\infty} e^{tk} P(X = k) = \sum_{k=0}^{\infty} e^{tk} P(Y = k), \ \forall t \in (-b, b)$$

$$\implies \sum_{k=0}^{\infty} s^k P(X = k) = \sum_{k=0}^{\infty} s^k P(Y = k), \ \forall s \in (e^{-b}, e^{b}).$$

We know that if two power series or polynomials match over an interval then they have the same coefficients. It follows that $P(X = k) = P(Y = k)$, $k \in \{0, 1, 2, \ldots\}$, i.e., $X$ and $Y$ have the same p.m.f. Now the result follows using Theorem 4.1 (i). ▄

Example 4.2

Let $\mu \in \mathbb{R}$ and $\sigma > 0$ be real constants and let $X_{\mu,\sigma}$ be a random variable having p.d.f.

$$f_{X_{\mu,\sigma}}(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}, \quad -\infty < x < \infty. \qquad (4.1)$$

(i) Show that $f_{X_{\mu,\sigma}}$ is a p.d.f.;

(ii) Show that the probability distribution of $X_{\mu,\sigma}$ is symmetric about $\mu$. Hence find $E(X_{\mu,\sigma})$;

(iii) Find the m.g.f. of $X_{\mu,\sigma}$ and hence find the mean and variance of $X_{\mu,\sigma}$;

(iv) Let $Y_{\mu,\sigma} = aX_{\mu,\sigma} + b$, where $a \ne 0$ and $b$ are real constants. Using the m.g.f. of $X_{\mu,\sigma}$, show that the p.d.f. of $Y_{\mu,\sigma}$ is

$$f_{Y_{\mu,\sigma}}(y) = \frac{1}{|a|\sigma\sqrt{2\pi}}\, e^{-\frac{(y-(a\mu+b))^2}{2a^2\sigma^2}}, \quad -\infty < y < \infty.$$

Solution.

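(i) Clearly $f_{X_{\mu,\sigma}}(x) \ge 0$, $\forall x \in \mathbb{R}$. Also,

$$\int_{-\infty}^{\infty} f_{X_{\mu,\sigma}}(x)\,dx = \int_{-\infty}^{\infty} \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}\,dx = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} e^{-\frac{z^2}{2}}\,dz \quad \left(\text{on making the transformation } \frac{x-\mu}{\sigma} = z\right)$$

$$= I, \text{ say}.$$

Clearly $I \ge 0$ and

$$I^2 = \left(\frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} e^{-\frac{y^2}{2}}\,dy\right) \left(\frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} e^{-\frac{z^2}{2}}\,dz\right) = \frac{1}{2\pi} \int_{-\infty}^{\infty} \int_{-\infty}^{\infty} e^{-\frac{(y^2+z^2)}{2}}\,dy\,dz.$$

On making the transformation $y = r\cos\theta$, $z = r\sin\theta$, $r > 0$, $0 \le \theta < 2\pi$ (so that the Jacobian of the transformation is $r$) we get

$$I^2 = \frac{1}{2\pi} \int_{0}^{\infty} \int_{0}^{2\pi} r e^{-\frac{r^2}{2}}\,d\theta\,dr = \int_{0}^{\infty} r e^{-\frac{r^2}{2}}\,dr = \int_{0}^{\infty} e^{-z}\,dz = 1.$$

Since $I \ge 0$, it follows that $I = 1$ and thus $f_{X_{\mu,\sigma}}$ is a p.d.f.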

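(ii) Clearly,

$$f_{X_{\mu,\sigma}}(\mu - x) = f_{X_{\mu,\sigma}}(\mu + x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{x^2}{2\sigma^2}}, \ \forall x \in \mathbb{R}.$$

Using Theorem 4.3 (i) and (v) it follows that the distribution of $X_{\mu,\sigma}$ is symmetric about $\mu$ and $E(X_{\mu,\sigma}) = \mu$.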

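(iii) For $t \in \mathbb{R}$,

$$M_{X_{\mu,\sigma}}(t) = E\left(e^{tX_{\mu,\sigma}}\right) = \int_{-\infty}^{\infty} e^{tx}\, \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}\,dx$$

$$= \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} e^{t(\mu+\sigma z)}\, e^{-\frac{z^2}{2}}\,dz$$

$$= \frac{e^{\mu t + \frac{\sigma^2 t^2}{2}}}{\sqrt{2\pi}} \int_{-\infty}^{\infty} e^{-\frac{(z-\sigma t)^2}{2}}\,dz$$

$$= e^{\mu t + \frac{\sigma^2 t^2}{2}},$$

since, by (i),

$$\int_{-\infty}^{\infty} e^{-\frac{(x-\mu)^2}{2\sigma^2}}\,dx = \sigma\sqrt{2\pi}, \ \forall \mu \in \mathbb{R} \text{ and } \sigma > 0.$$

Thus, for $t \in \mathbb{R}$,

$$\psi_{X_{\mu,\sigma}}(t) = \ln M_{X_{\mu,\sigma}}(t) = \mu t + \frac{\sigma^2 t^2}{2}$$

$$\implies E(X_{\mu,\sigma}) = \psi^{(1)}_{X_{\mu,\sigma}}(0) = \mu \quad \text{and} \quad \text{Var}(X_{\mu,\sigma}) = \psi^{(2)}_{X_{\mu,\sigma}}(0) = \sigma^2.$$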

(iv) From the discussion following Definition 3.3 we have, for $t \in \mathbb{R}$,

$$M_{Y_{\mu,\sigma}}(t) = M_{aX_{\mu,\sigma}+b}(t) = e^{tb} M_{X_{\mu,\sigma}}(at) = e^{(a\mu+b)t + \frac{a^2\sigma^2 t^2}{2}} = M_{X_{a\mu+b,\,|a|\sigma}}(t)$$

$$\implies Y_{\mu,\sigma} \overset{d}{=} X_{a\mu+b,\,|a|\sigma}.$$

Therefore the p.d.f. of $Y_{\mu,\sigma}$ is given by

$$f_{Y_{\mu,\sigma}}(y) = f_{X_{a\mu+b,\,|a|\sigma}}(y) = \frac{1}{|a|\sigma\sqrt{2\pi}}\, e^{-\frac{(y-(a\mu+b))^2}{2a^2\sigma^2}}, \quad y \in \mathbb{R}. \ ▄$$

In the statistical literature, the probability distribution of the random variable $X_{\mu,\sigma}$ having the p.d.f. $f_{X_{\mu,\sigma}}(\cdot)$, defined by (4.1), is called the normal distribution (or Gaussian distribution) with mean $\mu$ and variance $\sigma^2$ (denoted by $X_{\mu,\sigma} \sim N(\mu, \sigma^2)$). Various properties of this distribution are further discussed in Module 5.
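The closed forms obtained in Example 4.2 can be compared against direct numerical integration. The following is a rough sketch under stated assumptions: the midpoint-rule integrator, the truncation of the integration range to $\mu \pm 12\sigma$, and the parameter values are all choices made here for illustration and are not part of the original notes.

```python
import math


def normal_pdf(x, mu, sigma):
    """The density (4.1)."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))


def integrate(f, lo, hi, steps=20000):
    """Composite midpoint rule on [lo, hi] (an assumed, simple quadrature)."""
    h = (hi - lo) / steps
    return h * sum(f(lo + (i + 0.5) * h) for i in range(steps))


def mgf_numeric(t, mu, sigma):
    """E(exp(tX)) approximated by integrating over mu +/- 12 sigma."""
    lo, hi = mu - 12 * sigma, mu + 12 * sigma
    return integrate(lambda x: math.exp(t * x) * normal_pdf(x, mu, sigma), lo, hi)


def mgf_closed_form(t, mu, sigma):
    """exp(mu t + sigma^2 t^2 / 2), the m.g.f. derived in part (iii)."""
    return math.exp(mu * t + 0.5 * sigma ** 2 * t ** 2)


mu, sigma, a, b, t = 1.0, 2.0, -3.0, 0.5, 0.3  # illustrative values only

# Part (i): the density integrates to (approximately) one.
total_mass = integrate(lambda x: normal_pdf(x, mu, sigma), mu - 12 * sigma, mu + 12 * sigma)

# Part (iii): numerical m.g.f. against the closed form.
mgf_x = mgf_numeric(t, mu, sigma)

# Part (iv): m.g.f. of Y = aX + b via exp(tb) M_X(at), against the m.g.f. of
# the normal distribution with mean a*mu + b and standard deviation |a|*sigma.
mgf_y = math.exp(t * b) * mgf_numeric(a * t, mu, sigma)
mgf_y_target = mgf_closed_form(t, a * mu + b, abs(a) * sigma)

print(total_mass, mgf_x, mgf_closed_form(t, mu, sigma), mgf_y, mgf_y_target)
```

Note that a negative $a$ is used on purpose: the target standard deviation is $|a|\sigma$, not $a\sigma$.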

Example 4.3

Let $p \in (0, 1)$ and let $X_p$ be a random variable with p.m.f.

$$f_{X_p}(x) = \begin{cases} \binom{n}{x} p^x q^{n-x}, & \text{if } x \in \{0, 1, \ldots, n\} \\ 0, & \text{otherwise} \end{cases}, \qquad (4.2)$$

where $n$ is a given positive integer and $q = 1 - p$.

(i) Find the m.g.f. of $X_p$ and hence find the mean and variance of $X_p$, $p \in (0, 1)$;

(ii) Let $Y_p = n - X_p$, $p \in (0, 1)$. Using the m.g.f. of $X_p$ show that the p.m.f. of $Y_p$ is

$$f_{Y_p}(y) = \begin{cases} \binom{n}{y} q^y (1-q)^{n-y}, & \text{if } y \in \{0, 1, \ldots, n\} \\ 0, & \text{otherwise} \end{cases}.$$

Solution.

(i) From the solution of Example 3.6 (iii), it is clear that the m.g.f. of $X_p$ is given by

$$M_{X_p}(t) = (1 - p + pe^t)^n, \quad t \in \mathbb{R}.$$

Therefore, for $t \in \mathbb{R}$,

$$\psi_{X_p}(t) = \ln(M_{X_p}(t)) = n \ln(1 - p + pe^t),$$

$$\psi^{(1)}_{X_p}(t) = \frac{npe^t}{1 - p + pe^t},$$

$$\psi^{(2)}_{X_p}(t) = np\, \frac{(1 - p + pe^t)e^t - pe^{2t}}{(1 - p + pe^t)^2}$$

$$\implies E(X_p) = \psi^{(1)}_{X_p}(0) = np \quad \text{and} \quad \text{Var}(X_p) = \psi^{(2)}_{X_p}(0) = np(1-p).$$

(ii) For $t \in \mathbb{R}$,

$$M_{Y_p}(t) = E\left(e^{tY_p}\right) = e^{nt} M_{X_p}(-t) = e^{nt}(1 - p + pe^{-t})^n = (p + (1-p)e^t)^n = M_{X_{1-p}}(t),$$

i.e., $Y_p \overset{d}{=} X_{1-p}$, $p \in (0, 1)$. Therefore the p.m.f. of $Y_p$ is given by

$$f_{Y_p}(y) = f_{X_{1-p}}(y) = \begin{cases} \binom{n}{y} q^y (1-q)^{n-y}, & \text{if } y \in \{0, 1, \ldots, n\} \\ 0, & \text{otherwise} \end{cases}. \ ▄$$

The probability distribution of the random variable $X_p$, having p.m.f. $f_{X_p}(\cdot)$ defined by (4.2), is called a binomial distribution with $n$ trials and success probability $p$ (denoted by $X_p \sim \text{Bin}(n, p)$). The binomial distribution and other related distributions are discussed in more detail in Module 5.
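The conclusions of Example 4.3 can be verified by enumeration for a particular choice of parameters. This is a minimal sketch: the values $n = 6$, $p = 1/3$, $t = 0.4$ and the helper names `binomial_pmf` and `mgf_from_pmf` are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb, exp


def binomial_pmf(n, p):
    """The p.m.f. (4.2) on {0, ..., n}, kept exact when p is a Fraction."""
    q = 1 - p
    return {x: comb(n, x) * p ** x * q ** (n - x) for x in range(n + 1)}


def mgf_from_pmf(pmf, t):
    """E(exp(tX)) computed as a finite sum over the support."""
    return sum(exp(t * x) * float(prob) for x, prob in pmf.items())


n, p = 6, Fraction(1, 3)  # illustrative values only
pmf_x = binomial_pmf(n, p)
pmf_y = {n - x: prob for x, prob in pmf_x.items()}  # p.m.f. of Y = n - X

# Part (i): mean np and variance np(1 - p), computed directly from the p.m.f.
mean_x = sum(x * prob for x, prob in pmf_x.items())
var_x = sum(x ** 2 * prob for x, prob in pmf_x.items()) - mean_x ** 2

# Part (ii): Y = n - X has the Bin(n, 1 - p) p.m.f.
matches_bin_n_q = pmf_y == binomial_pmf(n, 1 - p)

# The m.g.f. of Y agrees with (p + (1 - p) e^t)^n at a sample point t.
t = 0.4
mgf_gap = abs(mgf_from_pmf(pmf_y, t) - (float(p) + float(1 - p) * exp(t)) ** n)

print(mean_x, var_x, matches_bin_n_q, mgf_gap)
```

The p.m.f. comparison is exact because `Fraction` arithmetic is used; only the m.g.f. comparison involves floating point.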

3.5 PROBABILITY AND MOMENT INEQUALITIES

Let $X$ be a random variable defined on a probability space $(\Omega, \mathcal{F}, P)$, and let $B \in \mathcal{B}_1$ be a Borel set. In many situations $P(X \in B)$ cannot be explicitly evaluated and therefore some estimate of this probability may be desired. For example, if a random variable $Z$ has the p.d.f.

$$f_Z(z) = \frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}, \quad -\infty < z < \infty,$$

then

$$P(Z > 2) = \int_{2}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}\,dz \qquad (5.1)$$

cannot be explicitly evaluated. To estimate this probability one has to either resort to numerical integration or use some other estimation procedure. Inequalities are popular estimation procedures and they play an important role in probability theory.

Theorem 5.1

Let $X$ be a random variable and let $g: [0, \infty) \to \mathbb{R}$ be a non-negative and non-decreasing function such that the expected value of $g(|X|)$ is finite. Then, for any $c > 0$ for which $g(c) > 0$,

$$P(|X| \ge c) \le \frac{E(g(|X|))}{g(c)}.$$

Proof. We will provide the proof for the case when $X$ is of absolutely continuous type. The proof for the discrete case follows in a similar fashion with integral signs replaced by summation signs.

Fix $c > 0$ such that $g(c) > 0$. Define $A = \mathbb{R} - (-c, c)$ so that, for $x \in A$, $|x| \ge c$. Then

$$E(g(|X|)) = \int_{-\infty}^{\infty} g(|x|) f_X(x)\,dx$$

$$\ge \int_{-\infty}^{\infty} g(|x|) I_A(x) f_X(x)\,dx \quad (\text{since } g(|x|) \ge g(|x|) I_A(x), \ \forall x \in \mathbb{R})$$

$$\ge g(c) \int_{-\infty}^{\infty} I_A(x) f_X(x)\,dx \quad (\text{since } g(|x|) I_A(x) \ge g(c) I_A(x), \ \forall x \in \mathbb{R}, \text{ as } g \uparrow)$$

$$= g(c) P(X \in A) = g(c) P(|X| \ge c)$$

$$\implies P(|X| \ge c) \le \frac{E(g(|X|))}{g(c)}. \ ▄$$

Corollary 5.1

Let $X$ be a random variable.

3.5.1 Markov Inequality

(i) Suppose that $E(|X|^r) < \infty$, for some $r > 0$. Then, for any $c > 0$,

$$P(|X| \ge c) \le \frac{E(|X|^r)}{c^r}.$$

3.5.2 Chebyshev Inequality

(ii) Suppose that $X$ has finite first two moments, and let $\mu = E(X)$ and $\sigma^2 = \text{Var}(X)$ ($\sigma \ge 0$). Then, for any $k > 0$,

$$P(|X - \mu| \ge k) \le \frac{\sigma^2}{k^2}.$$

Proof.

(i) Fix $c > 0$ and $r > 0$ and let $g(x) = x^r$, $x \ge 0$. Clearly $g$ is a non-negative and non-decreasing function. Using Theorem 5.1, we get

$$P(|X| \ge c) \le \frac{E(|X|^r)}{c^r}.$$

(ii) Using (i) on $Y = X - \mu$, with $r = 2$ and $c = k$, we get

$$P(|X - \mu| \ge k) \le \frac{E(|X - \mu|^2)}{k^2} = \frac{\sigma^2}{k^2}. \ ▄$$
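Both inequalities of Corollary 5.1 can be checked exhaustively on a small discrete distribution. The sketch below assumes, purely for illustration, a fair six-sided die as the distribution of $X$; the helper names (`expect`, `prob`, `markov_bound`, `chebyshev_bound`) are hypothetical and the grids of $c$, $r$ and $k$ values are arbitrary.

```python
from fractions import Fraction

# Assumed example distribution (not from the notes): a fair six-sided die.
pmf = {x: Fraction(1, 6) for x in range(1, 7)}


def expect(h):
    """E(h(X)) for the discrete distribution above."""
    return sum(h(x) * p for x, p in pmf.items())


def prob(event):
    """P(X satisfies event)."""
    return sum(p for x, p in pmf.items() if event(x))


mu = expect(lambda x: x)
var = expect(lambda x: (x - mu) ** 2)


def markov_bound(c, r):
    """E(|X|^r) / c^r, for integer r >= 1 so the arithmetic stays exact."""
    return expect(lambda x: abs(x) ** r) / Fraction(c) ** r


def chebyshev_bound(k):
    """Var(X) / k^2."""
    return var / Fraction(k) ** 2


markov_ok = all(
    prob(lambda x: abs(x) >= c) <= markov_bound(c, r)
    for c in range(1, 8)
    for r in (1, 2, 3)
)
chebyshev_ok = all(
    prob(lambda x: abs(x - mu) >= k) <= chebyshev_bound(k)
    for k in (Fraction(1, 2), 1, 2, 3)
)
print(mu, var, markov_ok, chebyshev_ok)  # 7/2 35/12 True True
```

For small $k$ the Chebyshev bound exceeds one and is therefore uninformative, which the check still accepts since the inequality itself holds.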

Example 5.1

Let us revisit the problem of estimating $P(Z > 2)$, defined by (5.1). Using Example 4.2 (iii), we have $\mu = E(Z) = 0$ and $\sigma^2 = \text{Var}(Z) = 1$. Moreover, using Example 4.2 (ii), $Z \overset{d}{=} -Z$. Consequently $P(Z > 2) = P(-Z > 2) = P(Z < -2)$, i.e.,

$$P(Z > 2) = \frac{P(|Z| > 2)}{2} = \frac{P(|Z| \ge 2)}{2},$$

where the last equality holds because $Z$ is of absolutely continuous type. Now, using the Chebyshev inequality, we have

$$P(Z > 2) = \frac{P(|Z| \ge 2)}{2} \le \frac{E(Z^2)}{8} = \frac{1}{8} = 0.125.$$

The exact value of $P(Z > 2)$, obtained using numerical integration, is $0.0228$. ▄
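The two numbers quoted in Example 5.1 can be reproduced in a few lines. This sketch evaluates the tail probability through the complementary error function from the Python standard library (using the identity $P(Z > z) = \frac{1}{2}\,\text{erfc}(z/\sqrt{2})$ for a standard normal $Z$) rather than through explicit numerical integration; the function name is an assumption made here.

```python
import math


def standard_normal_upper_tail(z):
    """P(Z > z) for Z ~ N(0, 1), via the complementary error function."""
    return 0.5 * math.erfc(z / math.sqrt(2))


# Chebyshev-based estimate from Example 5.1: P(Z > 2) <= Var(Z) / (2 * 2^2).
chebyshev_estimate = 1 / (2 * 2 ** 2)
exact_tail = standard_normal_upper_tail(2.0)
print(chebyshev_estimate, round(exact_tail, 4))  # 0.125 0.0228
```

The bound is valid but conservative here: it overstates the true tail probability by a factor of roughly five.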

The following example illustrates that the bounds provided in Theorem 5.1 and Corollary 5.1 are tight, i.e., the upper bounds provided therein may be attained.

Example 5.2

Let $X$ be a random variable with p.m.f.

$$f_X(x) = \begin{cases} \frac{1}{8}, & \text{if } x \in \{-1, 1\} \\ \frac{3}{4}, & \text{if } x = 0 \\ 0, & \text{otherwise} \end{cases}.$$

Clearly $E(X^2) = \frac{1}{4}$ and, therefore, using the Markov inequality (with $r = 2$ and $c = 1$) we have

$$P(|X| \ge 1) \le E(X^2) = \frac{1}{4}.$$

The exact probability is

$$P(|X| \ge 1) = P(X \in \{-1, 1\}) = \frac{1}{4}. \ ▄$$
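The tightness claim in Example 5.2 amounts to two short sums, which can be evaluated exactly. A minimal sketch follows; the variable names are chosen here for illustration.

```python
from fractions import Fraction

# p.m.f. of Example 5.2.
pmf = {-1: Fraction(1, 8), 0: Fraction(3, 4), 1: Fraction(1, 8)}

c, r = 1, 2
second_moment = sum(abs(x) ** r * p for x, p in pmf.items())
markov_bound = second_moment / Fraction(c) ** r               # E(|X|^2) / c^2
exact_probability = sum(p for x, p in pmf.items() if abs(x) >= c)

print(markov_bound, exact_probability)  # 1/4 1/4
```

The bound is attained because $|X|^2$ takes only the values $0$ and $c^2 = 1$, so neither inequality in the proof of Theorem 5.1 loses anything.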

Definition 5.1

A random variable $X$ is said to be degenerate at a point $c \in \mathbb{R}$ if $P(X = c) = 1$. ▄

Suppose that a random variable $X$ is degenerate at $c \in \mathbb{R}$. Then clearly $X$ is of discrete type with support $S_X = \{c\}$, distribution function

$$F_X(x) = \begin{cases} 0, & x < c \\ 1, & x \ge c \end{cases},$$

and p.m.f.

$$f_X(x) = \begin{cases} 1, & x = c \\ 0, & \text{otherwise} \end{cases}.$$

Note that a random variable $X$ is degenerate at a point $c \in \mathbb{R}$ if, and only if, $E(X) = c$ and $\text{Var}(X) = 0$.
