International Journal of Trend in Scientific Research and Development (IJTSRD)

International Open Access Journal | www.ijtsrd.com

ISSN No: 2456 - 6470 | Volume - 2 | Issue – 6 | Sep – Oct 2018

Analysis of Artificial Neural-Network

Rajesh CVS1, Nadikoppula Pardhasaradhi2

1Assistant Professor, Mechanical Engineering Department,
Vizag Institute of Technology, Visakhapatnam, Andhra Pradesh, India

2M.Tech (Product Design and Manufacturing) Student,
Maharaj Vijayaram Gajapathi Raj College of Engineering, Andhra Pradesh, India

ABSTRACT

An Artificial Neural Network (ANN) is a computational model that is inspired by the way biological neural networks in the human brain process information. Artificial Neural Networks have generated a lot of excitement in Machine Learning research and industry, thanks to many breakthrough results in speech recognition, computer vision and text processing. In this paper we will try to develop an understanding of a particular type of Artificial Neural Network called the Multi Layer Perceptron. An Artificial Neural Network (ANN) is an information processing paradigm that is inspired by the way biological nervous systems, such as the brain, process information. The key element of this paradigm is the novel structure of the information processing system. It is composed of a large number of highly interconnected processing elements (neurons) working in unison to solve specific problems. ANNs, like people, learn by example. An ANN is configured for a specific application, such as pattern recognition or data classification, through a learning process. Learning in biological systems involves adjustments to the synaptic connections that exist between the neurones. This is true for ANNs as well.

Many important advances have been boosted by the use of inexpensive computer emulations. Following an initial period of enthusiasm, the field survived a period of frustration and disrepute. During this period, when funding and professional support were minimal, important advances were made by relatively few researchers. These pioneers were able to develop convincing technology which surpassed the limitations identified by Minsky and Papert. Minsky and Papert published a book (in 1969) in which they summed up a general feeling of frustration (against neural networks) among researchers, and it was thus accepted by most without further analysis. Currently, the neural network field enjoys a resurgence of interest and a corresponding increase in funding.

Neural networks, with their remarkable ability to derive meaning from complicated or imprecise data, can be used to extract patterns and detect trends that are too complex to be noticed by either humans or other computer techniques. A trained neural network can be thought of as an "expert" in the category of information it has been given to analyze. This expert can then be used to provide projections given new situations of interest and answer "what if" questions.

Other advantages include:

1. Adaptive learning: An ability to learn how to do tasks based on the data given for training or initial experience.
2. Self-Organization: An ANN can create its own organization or representation of the information it receives during learning time.
3. Real Time Operation: ANN computations may be carried out in parallel, and special hardware devices are being designed and manufactured which take advantage of this capability.
4. Fault Tolerance via Redundant Information Coding: Partial destruction of a network leads to the corresponding degradation of performance. However, some network capabilities may be retained even with major network damage.

@ IJTSRD | Available Online @ www.ijtsrd.com | Volume – 2 | Issue – 6 | Sep-Oct 2018 Page: 418


Neural networks versus conventional computers

Neural networks take a different approach to problem solving than that of conventional computers. Conventional computers use an algorithmic approach, i.e. the computer follows a set of instructions in order to solve a problem. Unless the specific steps that the computer needs to follow are known, the computer cannot solve the problem. That restricts the problem solving capability of conventional computers to problems that we already understand and know how to solve. But computers would be so much more useful if they could do things that we don't exactly know how to do.

Neural networks process information in a similar way the human brain does. The network is composed of a large number of highly interconnected processing elements (neurones) working in parallel to solve a specific problem. Neural networks learn by example. They cannot be programmed to perform a specific task. The examples must be selected carefully, otherwise useful time is wasted or, even worse, the network might function incorrectly. The disadvantage is that because the network finds out how to solve the problem by itself, its operation can be unpredictable.

On the other hand, conventional computers use a cognitive approach to problem solving; the way the problem is to be solved must be known and stated in small unambiguous instructions. These instructions are then converted to a high level language program and then into machine code that the computer can understand. These machines are totally predictable; if anything goes wrong, it is due to a software or hardware fault.

Neural networks and conventional algorithmic computers are not in competition but complement each other. There are tasks that are more suited to an algorithmic approach, like arithmetic operations, and tasks that are more suited to neural networks. Moreover, a large number of tasks require systems that use a combination of the two approaches (normally a conventional computer is used to supervise the neural network) in order to perform at maximum efficiency.

Human and Artificial Neurones - investigating the similarities


Much is still unknown about how the brain trains itself to process information, so theories abound. In the human brain, a typical neuron collects signals from others through a host of fine structures called dendrites. The neuron sends out spikes of electrical activity through a long, thin strand known as an axon, which splits into thousands of branches. At the end of each branch, a structure called a synapse converts the activity from the axon into electrical effects that inhibit or excite activity in the connected neurones. When a neuron receives excitatory input that is sufficiently large compared with its inhibitory input, it sends a spike of electrical activity down its axon. Learning occurs by changing the effectiveness of the synapses so that the influence of one neuron on another changes.

Components of a neuron

The synapse

From Human Neurones to Artificial Neurones

We construct these neural networks by first trying to deduce the essential features of neurones and their interconnections. We then typically program a computer to simulate these features. However, because our knowledge of neurones is incomplete and our computing power is limited, our models are necessarily gross idealizations of real networks of neurones.

Some interesting numbers

| Quantity | BRAIN | PC |
| --- | --- | --- |
| Signal propagation speed | Vprop = 100 m/s | Vprop = 3*10^8 m/s |
| Frequency | 100 Hz | 10^9 Hz |
| Number of elements | N = 10^10-10^11 neurons | N = 10^9 |

The degree of parallelism of the brain is ~10^14, like 10^14 processors running at a frequency of 100 Hz, with 10^4 connected at the same time.

An engineering approach

A neuron

A more sophisticated neuron is the McCulloch and Pitts model (MCP). The difference from the previous model is that the inputs are 'weighted'; the effect that each input has on decision making depends on the weight of the particular input. The weight of an input is a number which, when multiplied with the input, gives the weighted input. These weighted inputs are then added together and, if they exceed a pre-set threshold value, the neuron fires. In any other case the neuron does not fire.
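The firing rule of the MCP neuron can be illustrated with a few lines of Python. This is only a minimal sketch: the function name `mcp_neuron` and the AND/OR weights and thresholds are illustrative assumptions, not values taken from the paper.

```python
def mcp_neuron(inputs, weights, threshold):
    """Return 1 (fires) if the weighted sum of the inputs exceeds the threshold, else 0."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Each input is multiplied by its weight, and the weighted inputs are added together.
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > threshold else 0


# Illustrative (assumed) settings: with unit weights, a threshold of 1.5 makes
# the neuron behave like a logical AND, and a threshold of 0.5 like a logical OR.
pairs = [(a, b) for a in (0, 1) for b in (0, 1)]
and_outputs = [mcp_neuron([a, b], [1.0, 1.0], 1.5) for a, b in pairs]
or_outputs = [mcp_neuron([a, b], [1.0, 1.0], 0.5) for a, b in pairs]
```

Changing only the threshold switches the same neuron between the two behaviours, which is the kind of adaptation by weights and threshold that the text refers to.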


In mathematical terms, the neuron fires if and only if

$$x_1 w_1 + x_2 w_2 + \dots + x_n w_n > T,$$

where $x_i$ are the inputs, $w_i$ the corresponding weights and $T$ the threshold. The addition of input weights and of the threshold makes this neuron a very flexible and powerful one. The MCP neuron has the ability to adapt to a particular situation by changing its weights and/or threshold. Various algorithms exist that cause the neuron to 'adapt'; the most used ones are the Delta rule and the back error propagation. The former applies to single-layer feed-forward networks; the latter extends it to multi-layer feed-forward networks by propagating the error backwards from the output layer.

Architecture of neural networks

Feed-forward networks

Feed-forward ANNs allow signals to travel one way only, from input to output. There is no feedback (loops), i.e. the output of any layer does not affect that same layer. Feed-forward ANNs tend to be straightforward networks that associate inputs with outputs. They are extensively used in pattern recognition. This type of organization is also referred to as bottom-up or top-down.

Feedback networks

Feedback networks (figure 1) can have signals traveling in both directions by introducing loops in the network. Feedback networks are very powerful and can get extremely complicated. Feedback networks are dynamic; their 'state' is changing continuously until they reach an equilibrium point. They remain at the equilibrium point until the input changes and a new equilibrium needs to be found. Feedback architectures are also referred to as interactive or recurrent, although the latter term is often used to denote feedback connections in single-layer organizations.

Network layers

The commonest type of artificial neural network consists of three groups, or layers, of units: a layer of "input" units is connected to a layer of "hidden" units, which is connected to a layer of "output" units. The activity of the input units represents the raw information that is fed into the network.

The activity of each hidden unit is determined by the activities of the input units and the weights on the connections between the input and the hidden units.

The behavior of the output units depends on the activity of the hidden units and the weights between the hidden and output units.

This simple type of network is interesting because the hidden units are free to construct their own representations of the input. The weights between the input and hidden units determine when each hidden unit is active, and so by modifying these weights, a hidden unit can choose what it represents.

We also distinguish single-layer and multi-layer architectures. The single-layer organization, in which all units are connected to one another, constitutes the most general case and is of more potential computational power than hierarchically structured multi-layer organizations. In multi-layer networks, units are often numbered by layer, instead of following a global numbering.

Perceptrons

The most influential work on neural nets in the 60's went under the heading of 'perceptrons', a term coined by Frank Rosenblatt. The perceptron turns out to be an MCP model (neuron with weighted inputs) with some additional, fixed, pre-processing. Units labeled A1, A2, Aj, Ap are called association units and their task is to extract specific, localized features from the input images. Perceptrons mimic the basic idea behind the mammalian visual system. They were mainly used in pattern recognition even though their capabilities extended a lot more.

In 1969 Minsky and Papert wrote a book in which they described the limitations of single layer Perceptrons. The impact that the book had was tremendous and caused a lot of neural network researchers to lose their interest. The book was very well written and


showed mathematically that single layer perceptrons could not do some basic pattern recognition operations like determining the parity of a shape or determining whether a shape is connected or not. What they did not realize, until the 80's, is that given the appropriate training, multilevel perceptrons can do these operations.

The Learning Process

TYPES OF LEARNING

All learning methods used for adaptive neural networks can be classified into two major categories:

Supervised learning, which incorporates an external teacher, so that each output unit is told what its desired response to input signals ought to be. During the learning process global information may be required. Paradigms of supervised learning include error-correction learning, reinforcement learning and stochastic learning.

[Figure: supervised learning; recoverable labels: dw/dt, E(w), E(w) = E(x, y, y(x, w))]

An important issue concerning supervised learning is the problem of error convergence, i.e. the minimization of error between the desired and computed unit values. The aim is to determine a set of weights which minimizes the error. One well-known method, which is common to many learning paradigms, is the least mean square (LMS) convergence.

Unsupervised learning uses no external teacher and is based upon only local information. It is also referred to as self-organization, in the sense that it self-organizes data presented to the network and detects their emergent collective properties.

Paradigms of unsupervised learning are Hebbian learning and competitive learning.

Another aspect of learning concerns whether or not there is a separate phase, during which the network is trained, and a subsequent operation phase. We say that a neural network learns off-line if the learning phase and the operation phase are distinct. A neural network learns on-line if it learns and operates at the same time. Usually, supervised learning is performed off-line, whereas unsupervised learning is performed on-line.

TRANSFER FUNCTION

The behavior of an ANN (Artificial Neural Network) depends on both the weights and the input-output function (transfer function) that is specified for the units. This function typically falls into one of three categories:

- linear (or ramp)
- threshold
- sigmoid, $f(a) = 1 / (1 + \exp(-a))$

For linear units, the output activity is proportional to the total weighted input.

For threshold units, the output is set at one of two levels, depending on whether the total input is greater than or less than some threshold value.

For sigmoid units, the output varies continuously but not linearly as the input changes. Sigmoid units bear a greater resemblance to real neurones than do linear or threshold units, but all three must be considered rough approximations.

We can teach a three-layer network to perform a particular task by using the following procedure:

1. We present the network with training examples, which consist of a pattern of activities for the input units together with the desired pattern of activities for the output units.
2. We determine how closely the actual output of the network matches the desired output.
3. We change the weight of each connection so that the network produces a better approximation of the desired output.

In order to train a neural network to perform some task, we must adjust the weights of each unit in such a way that the error between the desired output and the actual output is reduced. This process requires that the neural network compute the error derivative of the weights (EW). In other words, it must calculate how the error changes as each weight is increased or decreased slightly. The back propagation algorithm is the most widely used method for determining the EW.

The back-propagation algorithm is easiest to understand if all the units in the network are linear. The algorithm computes each EW by first computing the EA, the rate at which the error changes as the


activity level of a unit is changed. For output units, the EA is simply the difference between the actual and the desired output. To compute the EA for a hidden unit in the layer just before the output layer, we first identify all the weights between that hidden unit and the output units to which it is connected. We then multiply those weights by the EAs of those output units and add the products. This sum equals the EA for the chosen hidden unit. After calculating all the EAs in the hidden layer just before the output layer, we can compute in like fashion the EAs for other layers, moving from layer to layer in a direction opposite to the way activities propagate through the network. This is what gives back propagation its name. Once the EA has been computed for a unit, it is straightforward to compute the EW for each incoming connection of the unit. The EW is the product of the EA and the activity through the incoming connection.

Data filters

That is all we need to know about Neural Nets, if we want to make forecasts. The next problem which you will have to fight is noise in real signals. And the biggest trouble is that nobody knows what is noise and what is pure signal. For example, how does one choose the right variant for the problem in the figure on the right?

ADAPTIVE FILTERS

Main features, which we must obtain:

1. Zero-phase distortion (because we are going to make forecasts and we shouldn't influence the phase of the data we have).
2. We don't know what the real signal is and what is noise, especially in the case of share values or something like that. So one has to decide a priori what signal we want to obtain.

In this chapter I'm going to make an overview of 3 types of adaptive filters: Zero-phase filter (smoothing filter), Kalman filter (error estimator), and Empirical mode decomposition.

ZERO-PHASE FILTER

The main equation of this filter:

$$y(n) = b(1)\,x(n) + b(2)\,x(n-1) + \dots + b(n_b+1)\,x(n-n_b) - a(2)\,y(n-1) - \dots - a(n_a+1)\,y(n-n_a)$$

The scheme of this filter
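The difference equation of this section, applied once forward and once over the reversed output, can be sketched as follows. This is a simplified illustration under stated assumptions: the helper names `iir_filter` and `zero_phase_filter` are hypothetical, the filter starts from a zero initial state, and no matching of initial conditions is attempted, so transients at the two ends are not suppressed. Python lists are 0-indexed, so `b[0]` plays the role of b(1) and `a[1]` the role of a(2).

```python
def iir_filter(b, a, x):
    """Apply y(n) = sum_i b[i]*x(n-i) - sum_{j>=1} a[j]*y(n-j), starting from a zero state."""
    a0 = a[0]
    # Normalise so that the leading denominator coefficient is 1.
    b = [bi / a0 for bi in b]
    a = [aj / a0 for aj in a]
    y = []
    for n in range(len(x)):
        acc = 0.0
        for i, bi in enumerate(b):
            if n - i >= 0:
                acc += bi * x[n - i]
        for j in range(1, len(a)):
            if n - j >= 0:
                acc -= a[j] * y[n - j]
        y.append(acc)
    return y


def zero_phase_filter(b, a, x):
    """Filter forward, reverse the result, filter again, and reverse back."""
    forward = iir_filter(b, a, x)
    backward = iir_filter(b, a, forward[::-1])
    return backward[::-1]


# A unit impulse shows the effect: one pass shifts the response to the right,
# while the forward-backward pass gives a response symmetric about the impulse.
impulse = [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
one_pass = iir_filter([0.5, 0.5], [1.0], impulse)
two_pass = zero_phase_filter([0.5, 0.5], [1.0], impulse)
```

The symmetric response of the two-pass result is what "zero-phase" means in practice: the smoothed signal is not delayed relative to the input.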

Here x(n) is the signal we have at the moment n; Z-1 means that we take the previous value of the signal (moment (n-1)); b and a are the filter coefficients.

The filter performs zero-phase digital filtering by processing the input data in both the forward and reverse directions. After filtering in the forward direction, it reverses the filtered sequence and runs it back through the filter. The resulting sequence has precisely zero-phase distortion and double the filter


order. The filter minimizes start-up and ending transients by matching initial conditions, and works for both real and complex inputs. Note that the filter should not be used with differentiator and Hilbert FIR filters, since the operation of these filters depends heavily on their phase response.

Problem: After the neural net, the forecast will have a 1 day delay if we try to make a forecast for one day. It looks like the picture on the right. But I should mention that all statistical values, e.g. the correlation coefficient, are very good. It means that it is possible to use this filter, but with some kind of error estimator.

KALMAN FILTER (ERROR ESTIMATOR)

Consider a linear, discrete-time dynamical system. The concept of state is fundamental to this description. The state vector or simply state, denoted by xk, is defined as the minimal set of data that is sufficient to uniquely describe the unforced dynamical behavior of the system; the subscript k denotes discrete time. In other words, the state is the least amount of data on the past behavior of the system that is needed to predict its future behavior. Typically, the state xk is unknown. To estimate it, we use a set of observed data, denoted by the vector yk. In mathematical terms, the block diagram embodies the following pair of equations:

The equation of this filter is given below:

$$\hat{x}(n) = a\,\hat{x}(n-1) + k(n)\,\bigl[\,y(n) - a\,c\,\hat{x}(n-1)\,\bigr],$$

where $x(n) = a\,x(n-1) + w(n-1)$ is the model of the generating signal, $w(n)$ is white noise, $y(n) = c\,x(n) + \nu(n)$ is the signal after the neural net, and $\nu(n)$ is white noise.
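A minimal scalar sketch of this update is given below. The paper does not state how the gain k(n) is obtained, so the code uses the standard scalar Kalman gain recursion as an assumption; the function name `kalman_1d`, the noise variances `q` and `r`, and the initial values `x0` and `p0` are likewise hypothetical choices made for illustration.

```python
def kalman_1d(ys, a=1.0, c=1.0, q=1e-4, r=1.0, x0=0.0, p0=1.0):
    """Scalar Kalman filter for x(n) = a*x(n-1) + w(n-1), y(n) = c*x(n) + v(n).

    q and r are the assumed variances of w and v; x0 and p0 are the assumed
    initial estimate and its error variance.
    """
    x_hat, p = x0, p0
    estimates = []
    for y in ys:
        # Predict the error variance one step ahead.
        p_pred = a * a * p + q
        # Gain k(n): assumed standard form, not given explicitly in the paper.
        k = p_pred * c / (c * c * p_pred + r)
        # Update rule of the text: x_hat(n) = a*x_hat(n-1) + k(n)*[y(n) - a*c*x_hat(n-1)].
        x_hat = a * x_hat + k * (y - a * c * x_hat)
        p = (1.0 - k * c) * p_pred
        estimates.append(x_hat)
    return estimates


# Usage: a constant observed level of 5.0 pulls the estimate from x0 = 0 towards 5.
estimates = kalman_1d([5.0] * 50)
```

Larger values of `r` relative to `q` make the filter trust the model more than the observations, which smooths the output more strongly.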

Problem: After Kalman filter the error became less, but there is still a day delay. The solution is Empirical

Mode Decomposition.

EMPIRICAL MODE DECOMPOSITION

A new nonlinear technique, referred to as Empirical Mode Decomposition (EMD), has recently been pioneered by N.E. Huang et al. for adaptively representing nonstationary signals as sums of zero-mean AM-FM components [2]. Although it often proved remarkably effective [1, 2, 5, 6, 8], the technique is faced with the difficulty of being essentially defined by an algorithm, and therefore of not admitting an analytical formulation which would allow for a theoretical analysis and performance evaluation. The purpose of this paper is therefore to contribute experimentally to a better understanding of the method and to propose various improvements upon the original formulation. Some preliminary elements of experimental performance evaluation will also be provided for giving a flavor of the efficiency of the decomposition, as well as of the difficulty of its interpretation.

The starting point of the Empirical Mode Decomposition (EMD) is to consider oscillations in signals at a very local level. In fact, if we look at the evolution of a signal x(t) between two consecutive extrema (say, two minima occurring at times t− and


t+), we can heuristically define a (local) high-frequency part {d(t), t− ≤ t ≤ t+}, or local detail, which corresponds to the oscillation terminating at the two minima and passing through the maximum which necessarily exists in between them. For the picture to be complete, one still has to identify the corresponding (local) low-frequency part m(t), or local trend, so that we have x(t) = m(t) + d(t) for t− ≤ t ≤ t+. Assuming that this is done in some proper way for all the oscillations composing the entire signal, the procedure can then be applied on the residual consisting of all local trends, and constitutive components of a signal can therefore be iteratively extracted. Given a signal x(t), the effective algorithm of EMD can be summarized as follows:

1. Identify all extrema of x(t)
2. Interpolate between minima (resp. maxima), ending up with some envelope emin(t) (resp. emax(t))
3. Compute the mean m(t) = (emin(t) + emax(t))/2
4. Extract the detail d(t) = x(t) − m(t)
5. Iterate on the residual m(t)
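Steps 1 to 4 of the list above can be sketched in plain Python as a single pass. This is a simplified illustration, not the algorithm as used by the authors: the helper names are hypothetical, the envelopes are built by piecewise-linear interpolation (an assumption made for brevity, whereas EMD implementations commonly use cubic splines), and the envelopes are simply held constant outside the first and last extremum, which is one source of the boundary effects discussed later.

```python
import math


def local_extrema(x):
    """Step 1: indices of the strict local maxima and minima of the sequence x."""
    maxima, minima = [], []
    for i in range(1, len(x) - 1):
        if x[i] > x[i - 1] and x[i] > x[i + 1]:
            maxima.append(i)
        elif x[i] < x[i - 1] and x[i] < x[i + 1]:
            minima.append(i)
    return maxima, minima


def envelope(x, knots):
    """Step 2: piecewise-linear envelope through the points (k, x[k]) for k in knots."""
    env = [0.0] * len(x)
    for i in range(0, knots[0]):
        env[i] = x[knots[0]]          # held constant before the first knot
    for left, right in zip(knots, knots[1:]):
        for i in range(left, right):
            w = (i - left) / (right - left)
            env[i] = (1.0 - w) * x[left] + w * x[right]
    for i in range(knots[-1], len(x)):
        env[i] = x[knots[-1]]         # held constant from the last knot onwards
    return env


def sift_once(x):
    """Steps 1-4: return the detail d and the local mean m, with x = d + m."""
    maxima, minima = local_extrema(x)
    if not maxima or not minima:
        raise ValueError("signal has no interior extrema to build envelopes from")
    e_max = envelope(x, maxima)
    e_min = envelope(x, minima)
    m = [(lo + hi) / 2.0 for lo, hi in zip(e_min, e_max)]   # step 3
    d = [xi - mi for xi, mi in zip(x, m)]                   # step 4
    return d, m


# Usage on a two-tone signal sampled at 2001 points on [0, 1] (sampling is an assumption).
t = [i / 2000.0 for i in range(2001)]
x = [0.7 * math.sin(20.0 * ti) + 0.5 * math.sin(400.0 * ti) for ti in t]
d, m = sift_once(x)
```

Step 5 would repeat the same pass on `m`, and a full sifting process would first repeat it on `d` until a stopping criterion is met.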

In practice, the above procedure has to be refined by a sifting process which amounts to first iterating steps 1 to 4 upon the detail signal d(t), until this latter can be considered as zero-mean according to some stopping criterion. Once this is achieved, the detail is referred to as an Intrinsic Mode Function (IMF), the corresponding residual is computed and step 5 applies. By construction, the number of extrema is decreased when going from one residual to the next, and the whole decomposition is guaranteed to be completed with a finite number of modes. Modes and residuals have been heuristically introduced on "spectral" arguments, but this must not be considered from a too narrow perspective. First, it is worth stressing the fact that, even in the case of harmonic oscillations, the high vs. low frequency discrimination mentioned above applies only locally and corresponds in no way to a pre-determined sub band filtering (as, e.g., in a wavelet transform). Selection of modes rather corresponds to an automatic and adaptive (signal-dependent) time-variant filtering.

Let's try to do this procedure with the following signal:

$$x(t) = 0.7\,\sin(20t) + 0.5\,\sin(400t), \quad t \in [0, 1]$$

[Figures: IMF#1 and IMF#2]

Let's try to filter out the high frequency part (light gray: EMD, dark gray: zero-phase filter).

Problem: Using this method we have strong boundary effects. The sifting process can reduce this effect, but not completely.

ZERO-PHASE FILTER + KALMAN FILTER + EMD = SOLUTION

The so-called solution is as follows. We should use data obtained from the zero-phase filter on the boundaries, and a linear weighted sum of the zero-phase output and some IMFs in the middle. After the forecast is done, we should apply the Kalman filter to the forecast. Let's try to do that. The figure on the bottom is the forecast of some company's share value (production set). There is no delay.


The bottom figures are zoomed pictures of the previous figure.

HOLDER-FUNCTION BEHAVIOR IS IMPORTANT

The main idea is as follows. Consider a function $f(t)$ with domain $D(f)$. Holder derived that

$$|f(t + \Delta t) - f(t)| \le \mathrm{const} \cdot (\Delta t)^{\alpha(t)}, \quad \alpha(t) \in [0, 1]$$

$\alpha = 0$ means that we have a break of the second order.

$\alpha = 1$ means that we have $O(\Delta t)$.

So this formula is a kind of connection between "bad" functions and "good" functions. If we look at this formula more closely, we will notice that we can catch the moments in time when our function knows that it is going to change its behavior from one to another. It means that today we can make a forecast of tomorrow's behavior. But one should mention that we don't know the sign of the change in behavior.
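One crude way to read an exponent of this kind off a function is to fit the slope of log|f(t + Δt) − f(t)| against log Δt over shrinking steps. The sketch below is an assumption-laden illustration rather than the method used in the paper: the name `holder_exponent` and the dyadic step sizes are hypothetical, and it takes a callable function, whereas share values are sampled data and would need differences over neighbouring samples instead.

```python
import math


def holder_exponent(f, t, k_max=12):
    """Estimate the exponent at t as the least-squares slope of
    log|f(t + dt) - f(t)| versus log(dt) for dt = 2**-1 ... 2**-k_max,
    clipped to the interval [0, 1]."""
    log_dt, log_df = [], []
    for k in range(1, k_max + 1):
        dt = 2.0 ** (-k)
        df = abs(f(t + dt) - f(t))
        if df > 0.0:                      # log(0) is undefined, so skip flat steps
            log_dt.append(math.log(dt))
            log_df.append(math.log(df))
    if len(log_dt) < 2:
        raise ValueError("not enough non-zero increments to fit a slope")
    mean_x = sum(log_dt) / len(log_dt)
    mean_y = sum(log_df) / len(log_df)
    num = sum((u - mean_x) * (v - mean_y) for u, v in zip(log_dt, log_df))
    den = sum((u - mean_x) ** 2 for u in log_dt)
    return min(1.0, max(0.0, num / den))


# Usage: a smooth line, a square-root cusp at 0, and a jump at 0.
alpha_line = holder_exponent(lambda s: 3.0 * s + 1.0, 0.3)
alpha_cusp = holder_exponent(lambda s: math.sqrt(abs(s)), 0.0)
alpha_jump = holder_exponent(lambda s: 1.0 if s > 0 else 0.0, 0.0)
```

The three examples land near 1, 0.5 and 0 respectively, matching the reading of the formula in which values near 1 correspond to "good" behavior and values near 0 to a break.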

The figure on the bottom is the result of a MATLAB program which shows all results in one window.


Also pay attention to the Holder field after filtering has been done.

So we can make forecasts without delay and we know Horst behavior.

(In case of IMF filtering Holder function is smoother)

RESULTS

If you are going to make a forecast, you will need filters. The best way to make a forecast is to use adaptive filters. I have shown you three of them. The thing I will show next isn't the best way to make forecasts. I'm going to teach the ANN to make a forecast for one day. After that we feed the predicted value back as an input to make a forecast for the second day, and so on. Let's try to make a forecast for Siemens share values.
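The procedure of feeding each prediction back as an input can be sketched as a short loop. The names `iterated_forecast` and `toy_predictor` are hypothetical; in particular `toy_predictor` is only a stand-in (plain linear extrapolation) for the trained one-day-ahead ANN, which is not reproduced here.

```python
def iterated_forecast(predict_next, history, window, steps):
    """Forecast `steps` values ahead by repeatedly applying a one-step predictor.

    predict_next takes the most recent `window` values and returns the next value;
    each prediction is appended to the series and reused as input for the next step.
    """
    values = list(history)
    forecasts = []
    for _ in range(steps):
        next_value = predict_next(values[-window:])
        forecasts.append(next_value)
        values.append(next_value)
    return forecasts


def toy_predictor(recent):
    """Stand-in for the trained network: continue the last observed step."""
    return recent[-1] + (recent[-1] - recent[-2])


# Usage: three days ahead from a short history.
forecast = iterated_forecast(toy_predictor, [1.0, 2.0, 3.0], window=2, steps=3)
```

Because every step consumes earlier predictions, errors accumulate with the horizon, which is consistent with the remark that this is not the best way to forecast several days ahead.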

Here is Siemens function (for share values)

Let’s filter it.

Now we are ready to make forecast.

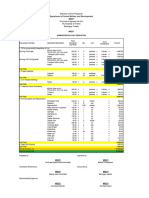

Here is the forecast in comparison with real data.


[Figure: forecast and real values plotted against the step number (0 to 14); vertical axis from 3.115 to 3.165]

Of course it is better to use other techniques to make forecasts for a few days. But this approach can give you a chance to make a forecast for 5-6 days, depending on the function you are working with.

REFERENCES

1. Web-Site made by Christos Stergiou and Dimitrios Siganos http://www.doc.ic.ac.uk

2. S. A. Shumsky “Selected lections about neural computing”

3. Simon Haykin “Kalman Filtering and Neural Networks” Copyright © 2001 John Wiley & Sons, Inc. ISBNs: 0-471-36998-5 (Hardback); 0-471-22154-6 (Electronic)

4. Web-Site made by Base Group Labs © 1995-2006 http://www.basegroup.ru

5. Vesselin Vatchev, USC November 20, 2002, “The analysis of the Empirical Mode Decomposition Method”

6. Gabriel Rilling, Patrick Flandrin and Paulo Goncalves “On Empirical Mode Decomposition and its algorithms”

7. Norden E. Huang, Zheng Shen, Steven R. Long, Manli C. Wu, Hsing H. Shih, Quanan Zheng, Nai-Chyuan Yen, Chi Chao Tung and Henry H. Liu “The empirical mode decomposition and the Hilbert spectrum for nonlinear and non-stationary time series analysis”

8. Gabriel Rilling, Patrick Flandrin and Paulo Goncalves “Detrending and denoising with Empirical Mode Decompositions”


- Evan Syndrome A Case ReportDocument3 pagesEvan Syndrome A Case ReportEditor IJTSRDPas encore d'évaluation

- Challenges in Pineapple Cultivation A Case Study of Pineapple Orchards in TripuraDocument4 pagesChallenges in Pineapple Cultivation A Case Study of Pineapple Orchards in TripuraEditor IJTSRDPas encore d'évaluation

- A Study To Assess The Effectiveness of Art Therapy To Reduce Depression Among Old Age Clients Admitted in Saveetha Medical College and Hospital, Thandalam, ChennaiDocument5 pagesA Study To Assess The Effectiveness of Art Therapy To Reduce Depression Among Old Age Clients Admitted in Saveetha Medical College and Hospital, Thandalam, ChennaiEditor IJTSRDPas encore d'évaluation

- Importance of Controlled CreditDocument3 pagesImportance of Controlled CreditEditor IJTSRDPas encore d'évaluation

- H1 L1 Boundedness of Rough Toroidal Pseudo Differential OperatorDocument8 pagesH1 L1 Boundedness of Rough Toroidal Pseudo Differential OperatorEditor IJTSRDPas encore d'évaluation

- Financial Risk, Capital Adequacy and Liquidity Performance of Deposit Money Banks in NigeriaDocument12 pagesFinancial Risk, Capital Adequacy and Liquidity Performance of Deposit Money Banks in NigeriaEditor IJTSRDPas encore d'évaluation

- A Study To Assess The Knowledge Regarding Iron Deficiency Anemia Among Reproductive Age Women in Selected Community ThrissurDocument4 pagesA Study To Assess The Knowledge Regarding Iron Deficiency Anemia Among Reproductive Age Women in Selected Community ThrissurEditor IJTSRDPas encore d'évaluation

- Knowledge Related To Diabetes Mellitus and Self Care Practice Related To Diabetic Foot Care Among Diabetic PatientsDocument4 pagesKnowledge Related To Diabetes Mellitus and Self Care Practice Related To Diabetic Foot Care Among Diabetic PatientsEditor IJTSRDPas encore d'évaluation

- Concept of Shotha W.S.R To Arishta LakshanaDocument3 pagesConcept of Shotha W.S.R To Arishta LakshanaEditor IJTSRDPas encore d'évaluation

- An Approach To The Diagnostic Study On Annavaha Srotodusti in Urdwaga Amlapitta WSR To Oesophagogastroduodenoscopic ChangesDocument4 pagesAn Approach To The Diagnostic Study On Annavaha Srotodusti in Urdwaga Amlapitta WSR To Oesophagogastroduodenoscopic ChangesEditor IJTSRDPas encore d'évaluation

- A Study On Human Resource AccountingDocument3 pagesA Study On Human Resource AccountingEditor IJTSRDPas encore d'évaluation
