Cluster Analysis BRM Session 14

Cluster Analysis

Cluster analysis is a class of techniques used to classify objects or cases into relatively homogeneous groups called clusters. Objects in each cluster tend to be similar to each other and dissimilar to objects in the other clusters. Cluster analysis is also called classification analysis, or numerical taxonomy.

Both cluster analysis and discriminant analysis are concerned with classification. However, discriminant analysis requires prior knowledge of the cluster or group membership for each object or case included, to develop the classification rule. In contrast, in cluster analysis there is no a priori information about the group or cluster membership for any of the objects. Groups or clusters are suggested by the data, not defined a priori.

[Fig. 1: plot of Variable 1 against Variable 2]

[Fig. 2: plot of Variable 1 against Variable 2]

Agglomeration schedule. An agglomeration schedule gives information on the objects or cases being combined at each stage of a hierarchical clustering process.

Cluster centroid. The cluster centroid consists of the mean values of the variables for all the cases or objects in a particular cluster.

Cluster centers. The cluster centers are the initial starting points in nonhierarchical clustering. Clusters are built around these centers, or seeds.

Cluster membership. Cluster membership indicates the cluster to which each object or case belongs.

Dendrogram. A dendrogram, or tree graph, is a graphical device for displaying clustering results. Vertical lines represent clusters that are joined together. The position of the line on the scale indicates the distance at which the clusters were joined. The dendrogram is read from left to right. Figure 8 is a dendrogram.

Distances between cluster centers. These distances indicate how separated the individual pairs of clusters are. Clusters that are widely separated are distinct, and therefore desirable.

Icicle diagram. An icicle diagram is a graphical display of clustering results, so called because it resembles a row of icicles hanging from the eaves of a house. The columns correspond to the objects being clustered, and the rows correspond to the number of clusters. An icicle diagram is read from bottom to top. Figure 7 is an icicle diagram.

Similarity/distance coefficient matrix. A similarity/distance coefficient matrix is a lower-triangle matrix containing pairwise distances between objects or cases.

Perhaps the most important part of formulating the clustering problem is selecting the variables on which the clustering is based.

Inclusion of even one or two irrelevant variables may distort an otherwise useful clustering solution.

Basically, the set of variables selected should describe the similarity between objects in terms that are relevant to the marketing research problem.

The variables should be selected based on past research, theory, or a consideration of the hypotheses being tested. In exploratory research, the researcher should exercise judgment and intuition.

The most commonly used measure of similarity is the Euclidean distance or its square. The Euclidean distance is the square root of the sum of the squared differences in values for each variable. Other distance measures are also available. The city-block or Manhattan distance between two objects is the sum of the absolute differences in values for each variable. The Chebychev distance between two objects is the maximum absolute difference in values for any variable.
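
The three distance measures just defined can be written out directly. The following is a minimal sketch in plain Python; the function names and the two example respondents `p` and `q` are illustrative assumptions, not part of the original slides.

```python
import math

def squared_euclidean(a, b):
    """Sum of the squared differences in values for each variable."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def euclidean(a, b):
    """Square root of the sum of squared differences."""
    return math.sqrt(squared_euclidean(a, b))

def city_block(a, b):
    """City-block (Manhattan) distance: sum of absolute differences."""
    return sum(abs(x - y) for x, y in zip(a, b))

def chebychev(a, b):
    """Chebychev distance: largest absolute difference on any variable."""
    return max(abs(x - y) for x, y in zip(a, b))

# Two hypothetical respondents rated on three variables
p = [6, 4, 7]
q = [2, 3, 1]

print(squared_euclidean(p, q))  # 16 + 1 + 36 = 53
print(euclidean(p, q))          # square root of 53
print(city_block(p, q))         # 4 + 1 + 6 = 11
print(chebychev(p, q))          # max(4, 1, 6) = 6
```

Because the squared Euclidean distance weights large differences more heavily than the city-block distance does, the two measures can rank pairs of objects differently.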

If the variables are measured in vastly different units, the clustering solution will be influenced by the units of measurement. In these cases, before clustering respondents, we must standardize the data by rescaling each variable to have a mean of zero and a standard deviation of unity. It is also desirable to eliminate outliers (cases with atypical values).
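
Standardization as described here amounts to converting each variable to z-scores. A minimal sketch follows, assuming the sample standard deviation (divisor n - 1) is intended; the variables `income` and `visits` are hypothetical.

```python
import statistics

def standardize(column):
    """Rescale one variable to mean 0 and standard deviation 1 (z-scores)."""
    mean = statistics.mean(column)
    sd = statistics.stdev(column)  # assumption: sample standard deviation (n - 1)
    return [(x - mean) / sd for x in column]

# Hypothetical variables measured in very different units
income = [20000, 35000, 50000, 65000, 80000]
visits = [1, 2, 3, 4, 5]

z_income = standardize(income)
z_visits = standardize(visits)

# After standardization both variables are on the same scale,
# so neither dominates a distance computed across them.
print(z_income)
print(z_visits)
```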

Use of different distance measures may lead to different clustering results. Hence, it is advisable to use different measures and compare the results.

Hierarchical clustering is characterized by the development of a hierarchy or tree-like structure. Hierarchical methods can be agglomerative or divisive.

Agglomerative clustering starts with each object in a separate cluster. Clusters are formed by grouping objects into bigger and bigger clusters. This process is continued until all objects are members of a single cluster.

Divisive clustering starts with all the objects grouped in a single cluster. Clusters are divided or split until each object is in a separate cluster.

Agglomerative methods are commonly used in marketing research. They consist of linkage methods, error sums of squares or variance methods, and centroid methods.
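
The agglomerative process can be sketched as a loop that repeatedly merges the two closest clusters and records each merge, which yields a simple agglomeration schedule. This is an illustrative sketch only: it assumes single linkage on squared Euclidean distances, and the five example points and all function names are hypothetical.

```python
def squared_euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def single_linkage(c1, c2, points):
    """Distance between the two closest members of clusters c1 and c2."""
    return min(squared_euclidean(points[i], points[j]) for i in c1 for j in c2)

def agglomerate(points, cluster_distance=single_linkage):
    """Start with one cluster per object and merge until one cluster remains.

    Returns the agglomeration schedule as (cluster, cluster, distance) tuples.
    """
    clusters = [[i] for i in range(len(points))]
    schedule = []
    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = cluster_distance(clusters[a], clusters[b], points)
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        schedule.append((clusters[a], clusters[b], d))
        merged = clusters[a] + clusters[b]
        clusters = [c for k, c in enumerate(clusters) if k not in (a, b)]
        clusters.append(merged)
    return schedule

# Hypothetical objects measured on two variables
points = [(1, 1), (1, 2), (5, 5), (6, 5), (10, 10)]
schedule = agglomerate(points)
for left, right, distance in schedule:
    print(left, right, distance)
```

With n objects the schedule always has n - 1 stages. The distance recorded at each stage is the quantity a dendrogram plots on its scale.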

Fig. 4: Clustering Procedures

  Hierarchical
    Agglomerative
      Linkage Methods: Single Linkage, Complete Linkage, Average Linkage
      Variance Methods: Ward's Method
      Centroid Methods
    Divisive
  Nonhierarchical
    Sequential Threshold
    Parallel Threshold
    Optimizing Partitioning
  Other
    Two-Step

The single linkage method is based on minimum distance, or the nearest neighbor rule. At every stage, the distance between two clusters is the distance between their two closest points (see Figure 5).

The complete linkage method is similar to single linkage, except that it is based on the maximum distance or the furthest neighbor approach. In complete linkage, the distance between two clusters is calculated as the distance between their two furthest points.

The average linkage method works similarly. However, in this method, the distance between two clusters is defined as the average of the distances between all pairs of objects, where one member of the pair is from each of the clusters (Figure 5).
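
The three linkage rules differ only in how they summarize the pairwise distances between the members of two clusters. A minimal sketch, with hypothetical clusters and function names:

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def pair_distances(c1, c2):
    """All distances between one object from c1 and one object from c2."""
    return [euclidean(p, q) for p in c1 for q in c2]

def single_linkage(c1, c2):
    return min(pair_distances(c1, c2))   # nearest neighbor

def complete_linkage(c1, c2):
    return max(pair_distances(c1, c2))   # furthest neighbor

def average_linkage(c1, c2):
    d = pair_distances(c1, c2)
    return sum(d) / len(d)               # mean over all cross-cluster pairs

# Two hypothetical clusters of two objects each
cluster_1 = [(0, 0), (0, 1)]
cluster_2 = [(3, 0), (4, 0)]

print(single_linkage(cluster_1, cluster_2))    # 3.0
print(complete_linkage(cluster_1, cluster_2))  # sqrt(17)
print(average_linkage(cluster_1, cluster_2))
```

For any pair of clusters, the average linkage distance lies between the single and complete linkage distances.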

[Fig. 5: Single Linkage (minimum distance), Complete Linkage (maximum distance), and Average Linkage (average distance), each shown between Cluster 1 and Cluster 2]

The variance methods attempt to generate clusters to minimize the within-cluster variance.

A commonly used variance method is Ward's procedure. For each cluster, the means for all the variables are computed. Then, for each object, the squared Euclidean distance to the cluster means is calculated (Figure 6). These distances are summed for all the objects. At each stage, the two clusters whose merger produces the smallest increase in the overall within-cluster sum of squared distances are combined.
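
One stage of Ward's procedure can be sketched as follows: compute the error sum of squares of each cluster, then pick the pair whose merger increases the total the least. The three example clusters and the helper names are assumptions made for illustration.

```python
def centroid(cluster):
    """Mean of each variable over the objects in the cluster."""
    n = len(cluster)
    return [sum(col) / n for col in zip(*cluster)]

def error_sum_of_squares(cluster):
    """Sum of squared Euclidean distances from each object to the cluster means."""
    c = centroid(cluster)
    return sum(sum((x - m) ** 2 for x, m in zip(p, c)) for p in cluster)

def ward_increase(c1, c2):
    """Increase in the overall within-cluster sum of squares if c1 and c2 merge."""
    return (error_sum_of_squares(c1 + c2)
            - error_sum_of_squares(c1)
            - error_sum_of_squares(c2))

def best_ward_merge(clusters):
    """Return (increase, index_a, index_b) for the cheapest merge."""
    return min(
        (ward_increase(clusters[a], clusters[b]), a, b)
        for a in range(len(clusters))
        for b in range(a + 1, len(clusters))
    )

# Hypothetical clusters at some stage of the procedure
clusters = [
    [(1, 1), (1, 3)],
    [(2, 2)],
    [(8, 8), (9, 8)],
]
increase, a, b = best_ward_merge(clusters)
print(a, b, increase)  # clusters 0 and 1 merge, increase of about 0.667
```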

In the centroid methods, the distance between two clusters is the distance between their centroids (means for all the variables), as shown in Figure 6. Every time objects are grouped, a new centroid is computed.

Of the hierarchical methods, average linkage and Ward's method have been shown to perform better than the other procedures.

[Fig. 6: Ward's Procedure and Centroid Method]

The nonhierarchical clustering methods are frequently referred to as k-means clustering. These methods include sequential threshold, parallel threshold, and optimizing partitioning.

In the sequential threshold method, a cluster center is selected and all objects within a prespecified threshold value from the center are grouped together. Then a new cluster center or seed is selected, and the process is repeated for the unclustered points. Once an object is clustered with a seed, it is no longer considered for clustering with subsequent seeds.
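
The sequential threshold method can be sketched in a few lines. The slides do not say how each seed is chosen, so this sketch assumes the first object still unclustered becomes the next seed; the points and the threshold value are hypothetical.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def sequential_threshold(points, threshold):
    """Cluster objects one seed at a time; returns lists of object indices."""
    unclustered = list(range(len(points)))
    clusters = []
    while unclustered:
        seed = unclustered[0]  # assumption: first remaining object is the seed
        members = [i for i in unclustered
                   if euclidean(points[i], points[seed]) <= threshold]
        clusters.append(members)
        # Objects grouped with this seed are not considered for later seeds.
        unclustered = [i for i in unclustered if i not in members]
    return clusters

# Hypothetical objects measured on two variables
points = [(1, 1), (1.5, 1), (5, 5), (5, 6), (9, 1)]
clusters = sequential_threshold(points, threshold=2.0)
print(clusters)  # [[0, 1], [2, 3], [4]]
```

The result depends on both the threshold and the order in which seeds are taken, which is one reason the order of cases can matter in nonhierarchical clustering.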

The parallel threshold method operates similarly, except that several cluster centers are selected simultaneously and objects within the threshold level are grouped with the nearest center.

The optimizing partitioning method differs from the two threshold procedures in that objects can later be reassigned to clusters to optimize an overall criterion, such as average within-cluster distance for a given number of clusters.
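
The reassignment idea behind optimizing partitioning can be illustrated with the familiar k-means iteration: assign every object to its nearest center, recompute the centers, and repeat until no object changes cluster. This is a sketch of that one common variant, not necessarily the exact algorithm any particular package uses; the points and starting seeds are hypothetical.

```python
def squared_euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def centroid(cluster):
    n = len(cluster)
    return [sum(col) / n for col in zip(*cluster)]

def optimize_partition(points, seeds, max_iter=100):
    """Reassign objects to the nearest center until assignments stop changing."""
    centers = [list(s) for s in seeds]  # copy so the caller's seeds are untouched
    assignment = None
    for _ in range(max_iter):
        new_assignment = [
            min(range(len(centers)), key=lambda k: squared_euclidean(p, centers[k]))
            for p in points
        ]
        if new_assignment == assignment:
            break
        assignment = new_assignment
        for k in range(len(centers)):
            members = [p for p, a in zip(points, assignment) if a == k]
            if members:  # keep the old center if a cluster becomes empty
                centers[k] = centroid(members)
    return assignment, centers

# Hypothetical objects, with two deliberately poor seeds from the same group
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
assignment, centers = optimize_partition(points, seeds=[(1, 1), (2, 1)])
print(assignment)  # [0, 0, 0, 1, 1, 1]
print(centers)
```

In the first pass the object (2, 1) is grouped with the distant objects because it is a seed itself. It is reassigned in the second pass, which is exactly the flexibility the threshold methods lack.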

It has been suggested that the hierarchical and nonhierarchical methods be used in tandem. First, an initial clustering solution is obtained using a hierarchical procedure, such as average linkage or Ward's. The number of clusters and cluster centroids so obtained are used as inputs to the optimizing partitioning method.

Choice of a clustering method and choice of a distance measure are interrelated. For example, squared Euclidean distances should be used with Ward's and the centroid methods. Several nonhierarchical procedures also use squared Euclidean distances.

Theoretical, conceptual, or practical considerations may suggest a certain number of clusters.

In hierarchical clustering, the distances at which clusters are combined can be used as criteria. This information can be obtained from the agglomeration schedule or from the dendrogram.

In nonhierarchical clustering, the ratio of total within-group variance to between-group variance can be plotted against the number of clusters. The point at which an elbow or a sharp bend occurs indicates an appropriate number of clusters.
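
The quantity plotted for the elbow criterion can be computed from any given partition. The sketch below uses sums of squares as a stand-in for the within-group and between-group variances, which is an assumption; the three groups of points and the two partitions compared are hypothetical.

```python
def centroid(points):
    n = len(points)
    return [sum(col) / n for col in zip(*points)]

def sum_of_squares(points, center):
    """Sum of squared Euclidean distances from each point to a center."""
    return sum(sum((x - m) ** 2 for x, m in zip(p, center)) for p in points)

def within_between_ratio(clusters):
    """Within-group sum of squares divided by between-group sum of squares.

    Needs at least two clusters; with a single cluster the between-group
    term is zero and the ratio is undefined.
    """
    everyone = [p for c in clusters for p in c]
    grand = centroid(everyone)
    within = sum(sum_of_squares(c, centroid(c)) for c in clusters)
    between = sum(len(c) * sum_of_squares([centroid(c)], grand) for c in clusters)
    return within / between

# Three hypothetical, well-separated groups of objects
g1 = [(1, 1), (1, 2), (2, 1)]
g2 = [(8, 8), (9, 8), (8, 9)]
g3 = [(1, 8), (2, 9), (1, 9)]

ratio_k2 = within_between_ratio([g1 + g3, g2])  # two-cluster solution
ratio_k3 = within_between_ratio([g1, g2, g3])   # three-cluster solution
print(ratio_k2, ratio_k3)
```

Plotting such ratios for successive numbers of clusters gives the curve on which the elbow is sought. Here the ratio falls sharply from two clusters to three because the data contain three distinct groups.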

The relative sizes of the clusters should be meaningful.

Interpreting and profiling clusters involves examining the cluster centroids. The centroids enable us to describe each cluster by assigning it a name or label.

It is often helpful to profile the clusters in terms of variables that were not used for clustering. These may include demographic, psychographic, product usage, media usage, or other variables.

1. Perform cluster analysis on the same data using different distance measures. Compare the results across measures to determine the stability of the solutions.

2. Use different methods of clustering and compare the results.

3. Split the data randomly into halves. Perform clustering separately on each half. Compare cluster centroids across the two subsamples.

4. Delete variables randomly. Perform clustering based on the reduced set of variables. Compare the results with those obtained by clustering based on the entire set of variables.

5. In nonhierarchical clustering, the solution may depend on the order of cases in the data set. Make multiple runs using different orders of cases until the solution stabilizes.

X1: Shopping is fun-filled

X2: Shopping upsets my budget

X3: I combine shopping with eating out

X4: I try to get best buys when shopping

X5: I don't care about shopping

X6: You can save a lot of money by comparing prices.

Case No.  V1  V2  V3  V4  V5  V6
   1       6   4   7   3   2   3
   2       2   3   1   4   5   4
   3       7   2   6   4   1   3
   4       4   6   4   5   3   6
   5       1   3   2   2   6   4
   6       6   4   6   3   3   4
   7       5   3   6   3   3   4
   8       7   3   7   4   1   4
   9       2   4   3   3   6   3
  10       3   5   3   6   4   6
  11       1   3   2   3   5   3
  12       5   4   5   4   2   4
  13       2   2   1   5   4   4
  14       4   6   4   6   4   7
  15       6   5   4   2   1   4
  16       3   5   4   6   4   7
  17       4   4   7   2   2   5
  18       3   7   2   6   4   3
  19       4   6   3   7   2   7
  20       2   3   2   4   7   2
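
For readers working outside SPSS, the 20 cases above can be entered directly and turned into a lower-triangle matrix of squared Euclidean distances. This is a sketch only; the names `ratings` and `lower_triangle` are assumptions, and the ratings are copied from the table.

```python
# Ratings copied from the table above: case number -> [V1, V2, V3, V4, V5, V6]
ratings = {
    1: [6, 4, 7, 3, 2, 3],   2: [2, 3, 1, 4, 5, 4],
    3: [7, 2, 6, 4, 1, 3],   4: [4, 6, 4, 5, 3, 6],
    5: [1, 3, 2, 2, 6, 4],   6: [6, 4, 6, 3, 3, 4],
    7: [5, 3, 6, 3, 3, 4],   8: [7, 3, 7, 4, 1, 4],
    9: [2, 4, 3, 3, 6, 3],  10: [3, 5, 3, 6, 4, 6],
    11: [1, 3, 2, 3, 5, 3], 12: [5, 4, 5, 4, 2, 4],
    13: [2, 2, 1, 5, 4, 4], 14: [4, 6, 4, 6, 4, 7],
    15: [6, 5, 4, 2, 1, 4], 16: [3, 5, 4, 6, 4, 7],
    17: [4, 4, 7, 2, 2, 5], 18: [3, 7, 2, 6, 4, 3],
    19: [4, 6, 3, 7, 2, 7], 20: [2, 3, 2, 4, 7, 2],
}

def squared_euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Lower triangle only: one entry per pair (i, j) with j < i
lower_triangle = {
    (i, j): squared_euclidean(ratings[i], ratings[j])
    for i in ratings for j in ratings if j < i
}

print(len(lower_triangle))     # 190 pairs for 20 cases
print(lower_triangle[(2, 1)])  # cases 1 and 2: 16 + 1 + 36 + 1 + 9 + 1 = 64
print(lower_triangle[(3, 1)])  # cases 1 and 3: 1 + 4 + 1 + 1 + 1 + 0 = 8
```

Cases 1 and 3 are far closer to each other than cases 1 and 2, which is the kind of pattern a clustering procedure then exploits.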

To select these procedures using SPSS for Windows, click:

Analyze>Classify>Hierarchical Cluster

Analyze>Classify>K-Means Cluster

1. Select ANALYZE from the SPSS menu bar.

2. Click CLASSIFY and then HIERARCHICAL CLUSTER.

3. Move Fun [v1], Bad for Budget [v2], Eating Out [v3], Best Buys [v4], Don't Care [v5], and Compare Prices [v6] into the VARIABLES box.

4. In the CLUSTER box, check CASES (default option). In the DISPLAY box, check STATISTICS and PLOTS (default options).

5. Click on STATISTICS. In the pop-up window, check AGGLOMERATION SCHEDULE. In the CLUSTER MEMBERSHIP box, check NONE (default option). Click CONTINUE.

6. Click on PLOTS. In the pop-up window, check DENDROGRAM. In the ICICLE box, check ALL CLUSTERS (default). In the ORIENTATION box, check VERTICAL. Click CONTINUE.

7. Click on METHOD. For CLUSTER METHOD, select BETWEEN GROUPS LINKAGE (default option). In the MEASURE box, check INTERVAL and select SQUARED EUCLIDEAN DISTANCE. Click CONTINUE.

8. Click OK.

1. Select ANALYZE from the SPSS menu bar.

2. Click CLASSIFY and then K-MEANS CLUSTER.

3. Move Fun [v1], Bad for Budget [v2], Eating Out [v3], Best Buys [v4], Don't Care [v5], and Compare Prices [v6] into the VARIABLES box.

4. For NUMBER OF CLUSTERS, select 3.

5. Click on OPTIONS. In the pop-up window, in the STATISTICS box, check INITIAL CLUSTER CENTERS and ANOVA TABLE. Click CONTINUE.

6. Click on SAVE. Check CLUSTER MEMBERSHIP. Click CONTINUE.

7. Click OK.

Vous aimerez peut-être aussi

- An Introduction To Factor Analysis: Philip HylandDocument34 pagesAn Introduction To Factor Analysis: Philip HylandAtiqah NizamPas encore d'évaluation

- Introduction To Factor Analysis (Compatibility Mode) PDFDocument20 pagesIntroduction To Factor Analysis (Compatibility Mode) PDFchaitukmrPas encore d'évaluation

- Cluster Training PDF (Compatibility Mode)Document21 pagesCluster Training PDF (Compatibility Mode)Sarbani DasguptsPas encore d'évaluation

- Analytics CompendiumDocument41 pagesAnalytics Compendiumshubham markadPas encore d'évaluation

- Case Tuscan LifestylesDocument10 pagesCase Tuscan LifestyleskowillPas encore d'évaluation

- Logistic RegressionDocument71 pagesLogistic Regressionanil_kumar31847540% (1)

- Factor and Cluster AnalysisDocument14 pagesFactor and Cluster Analysisbodhraj91Pas encore d'évaluation

- Cluster AnalysisDocument38 pagesCluster AnalysisShiva KumarPas encore d'évaluation

- Malhotra 19Document37 pagesMalhotra 19Sunia AhsanPas encore d'évaluation

- Cluster AnalysisDocument12 pagesCluster AnalysisUsman AliPas encore d'évaluation

- Factor AnalysisDocument46 pagesFactor AnalysisSurbhi KambojPas encore d'évaluation

- (Autonomous and Affiliated To Bharathiar University & Recognized by The UGCDocument35 pages(Autonomous and Affiliated To Bharathiar University & Recognized by The UGCapindhratex100% (2)

- Digital Analytics Maturation ModelDocument3 pagesDigital Analytics Maturation ModelJason LuisPas encore d'évaluation

- Factor Analysis (Optional Session)Document23 pagesFactor Analysis (Optional Session)jaya1117sarkar100% (2)

- Dimensionality ReductionDocument48 pagesDimensionality ReductionnitinPas encore d'évaluation

- Correlation and Regression: Explaining Association and CausationDocument23 pagesCorrelation and Regression: Explaining Association and CausationParth Rajesh ShethPas encore d'évaluation

- Logistic Regression AnalysisDocument16 pagesLogistic Regression AnalysisPIE TUTORSPas encore d'évaluation

- Accenture Consulting, SC&OMDocument2 pagesAccenture Consulting, SC&OMashishshukla1989Pas encore d'évaluation

- Area: Operations Tem 1: Post-Graduate Diploma in Management (PGDM)Document6 pagesArea: Operations Tem 1: Post-Graduate Diploma in Management (PGDM)rakeshPas encore d'évaluation

- Business Analytics - Science of Data Driven Decision Making (Live Online Programme)Document3 pagesBusiness Analytics - Science of Data Driven Decision Making (Live Online Programme)KRITIKA NIGAMPas encore d'évaluation

- Business Research Method: Factor AnalysisDocument52 pagesBusiness Research Method: Factor Analysissubrays100% (1)

- Conjoint AnalysisDocument26 pagesConjoint AnalysisRock100% (1)

- AirtelDocument68 pagesAirtelamitdutta87Pas encore d'évaluation

- Computer AssignmentDocument6 pagesComputer AssignmentSudheender Srinivasan0% (1)

- Machine Learning: Bilal KhanDocument20 pagesMachine Learning: Bilal KhanOsama Inayat100% (1)

- Moderating Effect of The Relationship Between Private Label Share and Store Loyalty PDFDocument15 pagesModerating Effect of The Relationship Between Private Label Share and Store Loyalty PDFKhushbooPas encore d'évaluation

- STA780 Wk6 Factor Analysis-SPSSDocument19 pagesSTA780 Wk6 Factor Analysis-SPSSsyaidatul umairahPas encore d'évaluation

- Marketing Research Question BankDocument2 pagesMarketing Research Question BankOnkarnath SinghPas encore d'évaluation

- Assignment 2 Case Study and PresentationDocument2 pagesAssignment 2 Case Study and PresentationRichard MchikhoPas encore d'évaluation

- COMPUTER APPLICATIONS - Excel NotesDocument2 pagesCOMPUTER APPLICATIONS - Excel NotesPamela WilliamsPas encore d'évaluation

- Operations StartegyDocument27 pagesOperations StartegySaloni BishnoiPas encore d'évaluation

- Multi Dimensional Scaling - Angrau - PrashanthDocument41 pagesMulti Dimensional Scaling - Angrau - PrashanthPrashanth Pasunoori100% (1)

- Cluster AnalysisDocument13 pagesCluster AnalysisAtiqah IsmailPas encore d'évaluation

- MKTG475 Conjoint Analysis 2021SDocument79 pagesMKTG475 Conjoint Analysis 2021SnemanjaPas encore d'évaluation

- LDR 531 Final Exam - LDR 531 Final Exam Organizational Leadership Final Exam - UOP StudentsDocument66 pagesLDR 531 Final Exam - LDR 531 Final Exam Organizational Leadership Final Exam - UOP StudentsUOP Students100% (1)

- Le Petit Chef Case MemoDocument7 pagesLe Petit Chef Case MemoashuPas encore d'évaluation

- BADM 572 - Stats Homework Answers 6Document7 pagesBADM 572 - Stats Homework Answers 6nicktimmonsPas encore d'évaluation

- Linear Regression PDFDocument32 pagesLinear Regression PDFAravindPas encore d'évaluation

- Chapter 21 MDS & ConjointDocument11 pagesChapter 21 MDS & ConjointKANIKA GORAYAPas encore d'évaluation

- Airtel ServqualDocument2 pagesAirtel ServqualVashishtha DadhichPas encore d'évaluation

- Management AccountingDocument14 pagesManagement AccountingAmit RajPas encore d'évaluation

- Factor Analysis: © Dr. Maher KhelifaDocument36 pagesFactor Analysis: © Dr. Maher KhelifaIoannis MilasPas encore d'évaluation

- Lufthansa Case PDFDocument41 pagesLufthansa Case PDFJoaw Kmp100% (1)

- Data Science AFADocument15 pagesData Science AFARaviPas encore d'évaluation

- Mergers and AmalgmationsDocument38 pagesMergers and AmalgmationsShweta SawantPas encore d'évaluation

- Conjoint Analysis PDFDocument15 pagesConjoint Analysis PDFSumit Kumar Awkash100% (1)

- Research Insights 2 Infosys LimitedDocument6 pagesResearch Insights 2 Infosys Limited2K20DMBA53 Jatin suriPas encore d'évaluation

- Management of Sales Territories and QuotasDocument7 pagesManagement of Sales Territories and QuotasRavimohan RajmohanPas encore d'évaluation

- Statistics For Business Analysis: Learning ObjectivesDocument37 pagesStatistics For Business Analysis: Learning ObjectivesShivani PandeyPas encore d'évaluation

- RegressionDocument87 pagesRegressionHardik Sharma100% (1)

- Lecture Notes - Hypothesis TestingDocument17 pagesLecture Notes - Hypothesis TestingSushant SumanPas encore d'évaluation

- Factor Analysis in SPSSDocument9 pagesFactor Analysis in SPSSLoredana IcsulescuPas encore d'évaluation

- Agency CompensationDocument5 pagesAgency CompensationMOHIT GUPTAPas encore d'évaluation

- Syllabus Global Marketing ManagementDocument3 pagesSyllabus Global Marketing ManagementBabu GeorgePas encore d'évaluation

- Exploratory Data Analysis - Komorowski PDFDocument20 pagesExploratory Data Analysis - Komorowski PDFEdinssonRamosPas encore d'évaluation

- Business Statistics AssignmentDocument7 pagesBusiness Statistics AssignmentRahul ChopraPas encore d'évaluation

- Week-9-Part-2 Agglomerative ClusteringDocument40 pagesWeek-9-Part-2 Agglomerative ClusteringMichael ZewdiePas encore d'évaluation

- Cluster AnalysisDocument5 pagesCluster AnalysisGowtham BharatwajPas encore d'évaluation

- Cluster AnalysisDocument9 pagesCluster AnalysisAmol RattanPas encore d'évaluation

- Cluster AnalysisDocument9 pagesCluster AnalysisPriyanka BujugundlaPas encore d'évaluation

- Create A Specimen of A Corporate Web Page. Design Home Page Including About Us, Logo Image, History, Site-Map, Sub-BranchesDocument22 pagesCreate A Specimen of A Corporate Web Page. Design Home Page Including About Us, Logo Image, History, Site-Map, Sub-Branchesakhil107043Pas encore d'évaluation

- Cover PDFDocument1 pageCover PDFakhil107043Pas encore d'évaluation

- Q'aire SolutionDocument22 pagesQ'aire Solutionakhil107043Pas encore d'évaluation

- Demand Estimation & Market Sizing: Study To Understand M-Commerce Market in IndiaDocument37 pagesDemand Estimation & Market Sizing: Study To Understand M-Commerce Market in Indiaakhil107043Pas encore d'évaluation

- Training Session On Questionnaire MakingDocument17 pagesTraining Session On Questionnaire Makingakhil107043Pas encore d'évaluation

- 2Document2 pages2akhil107043Pas encore d'évaluation

- International Financial Markets: South-Western/Thomson Learning © 2006Document30 pagesInternational Financial Markets: South-Western/Thomson Learning © 2006akhil107043Pas encore d'évaluation

- India Ports and Terminals: S.L.GanapathiDocument19 pagesIndia Ports and Terminals: S.L.Ganapathiakhil107043Pas encore d'évaluation

- Praveen Ya (Final)Document3 pagesPraveen Ya (Final)Priyanka VijayvargiyaPas encore d'évaluation

- ATB Business Start-Up GuideDocument19 pagesATB Business Start-Up GuideHilkyahuPas encore d'évaluation

- m4s BrochureDocument8 pagesm4s BrochureAnderson José Carrillo NárvaezPas encore d'évaluation

- Air Compressor Daisy Chain Termination - Rev 0Document1 pageAir Compressor Daisy Chain Termination - Rev 0mounrPas encore d'évaluation

- Adept PovertyDocument21 pagesAdept PovertyKitchie HermosoPas encore d'évaluation

- BCI ICT ResilienceDocument57 pagesBCI ICT ResilienceOana DragusinPas encore d'évaluation

- Free License Keys of Kaspersky Internet Security 2017 Activation Code PDFDocument20 pagesFree License Keys of Kaspersky Internet Security 2017 Activation Code PDFCaesar Catalin Caratasu0% (1)

- Study of Possibilities of Joint Application of Pareto Analysis and Risk Analysis During Corrective ActionsDocument4 pagesStudy of Possibilities of Joint Application of Pareto Analysis and Risk Analysis During Corrective ActionsJoanna Gabrielle Cariño TrillesPas encore d'évaluation

- Chapter 4 - Introduction To WindowsDocument8 pagesChapter 4 - Introduction To WindowsKelvin mwaiPas encore d'évaluation

- Digital Overspeed - Protection System: Short DescriptionDocument8 pagesDigital Overspeed - Protection System: Short DescriptionYohannes S AripinPas encore d'évaluation

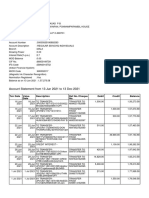

- Account Statement From 13 Jun 2021 To 13 Dec 2021Document10 pagesAccount Statement From 13 Jun 2021 To 13 Dec 2021Syamprasad P BPas encore d'évaluation

- Notebook Power System Introduction TroubleshootingDocument44 pagesNotebook Power System Introduction TroubleshootingDhruv Gonawala89% (9)

- Elb AgDocument117 pagesElb AgniravPas encore d'évaluation

- A Guide To Noise Measurement TerminologyDocument24 pagesA Guide To Noise Measurement TerminologyChhoan NhunPas encore d'évaluation

- NVIDIA System Information 01-08-2011 21-54-14Document1 pageNVIDIA System Information 01-08-2011 21-54-14Saksham BansalPas encore d'évaluation

- Java ProgrammingDocument134 pagesJava ProgrammingArt LookPas encore d'évaluation

- AlfrescoDocument10 pagesAlfrescoanangsaPas encore d'évaluation

- Total Result 194Document50 pagesTotal Result 194S TompulPas encore d'évaluation

- Technology and Tourism by Anil SangwanDocument62 pagesTechnology and Tourism by Anil SangwanAkhil V. MadhuPas encore d'évaluation

- HP Pavilion 14 - x360 Touchscreen 2-In-1 Laptop - 12th Gen Intel Core I5-1235u - 1080p - Windows 11 - CostcoDocument4 pagesHP Pavilion 14 - x360 Touchscreen 2-In-1 Laptop - 12th Gen Intel Core I5-1235u - 1080p - Windows 11 - CostcoTanish JainPas encore d'évaluation

- Computer Integrated ManufacturingDocument2 pagesComputer Integrated ManufacturingDhiren Patel100% (1)

- Design and Analysis of Manually Operated Floor Cleaning MachineDocument4 pagesDesign and Analysis of Manually Operated Floor Cleaning MachineAnonymous L9fB0XUPas encore d'évaluation

- Instep BrochureDocument8 pagesInstep Brochuretariqaftab100% (4)

- CH 21 Hull OFOD9 TH EditionDocument40 pagesCH 21 Hull OFOD9 TH Editionseanwu95Pas encore d'évaluation

- Unit 8 - AWT and Event Handling in JavaDocument60 pagesUnit 8 - AWT and Event Handling in JavaAsmatullah KhanPas encore d'évaluation

- Z Remy SpaanDocument87 pagesZ Remy SpaanAlexandra AdrianaPas encore d'évaluation

- PNP Switching Transistor: FeatureDocument2 pagesPNP Switching Transistor: FeatureismailinesPas encore d'évaluation

- Book PDF Reliability Maintainability and Risk Practical Methods For Engineers PDF Full ChapterDocument18 pagesBook PDF Reliability Maintainability and Risk Practical Methods For Engineers PDF Full Chaptermary.turner669100% (8)

- Full Download Ebook PDF Fundamentals of Modern Manufacturing Materials Processes and Systems 6th Edition PDFDocument42 pagesFull Download Ebook PDF Fundamentals of Modern Manufacturing Materials Processes and Systems 6th Edition PDFruth.white442100% (37)

- 012-NetNumen U31 R22 Northbound Interface User Guide (SNMP Interface)Document61 pages012-NetNumen U31 R22 Northbound Interface User Guide (SNMP Interface)buts101100% (5)