Slides by John Loucks, St. Edward’s University

© 2009 Thomson South-Western. All Rights Reserved Slide 1

Chapter 4

Introduction to Probability

Experiments, Counting Rules, and Assigning Probabilities

Events and Their Probability

Some Basic Relationships of Probability

Conditional Probability

Bayes’ Theorem


Probability as a Numerical Measure of the Likelihood of Occurrence

[Figure: probability scale running from 0 to 1, with the likelihood of occurrence increasing from left to right]

Probability near 0: The event is very unlikely to occur.

Probability of .5: The occurrence of the event is just as likely as it is unlikely.

Probability near 1: The event is almost certain to occur.


An Experiment and Its Sample Space

An experiment is any process that generates

well-defined outcomes.

The sample space for an experiment is the set of

all experimental outcomes.

An experimental outcome is also called a sample

point.


Example: Bradley Investments

Bradley has invested in two stocks, Markley Oil and

Collins Mining. Bradley has determined that the

possible outcomes of these investments three months

from now are as follows.

Investment Gain or Loss in 3 Months (in $000)

Markley Oil    Collins Mining
 10              8
  5             -2
  0
-20


A Counting Rule for

Multiple-Step Experiments

If an experiment consists of a sequence of k steps

in which there are n1 possible results for the first step,

n2 possible results for the second step, and so on,

then the total number of experimental outcomes is

given by (n1)(n2) . . . (nk).

A helpful graphical representation of a multiple-step

experiment is a tree diagram.


A Counting Rule for

Multiple-Step Experiments

Bradley Investments can be viewed as a two-step

experiment. It involves two stocks, each with a set of

experimental outcomes.

Markley Oil: n1 = 4

Collins Mining: n2 = 2

Total Number of

Experimental Outcomes: n1n2 = (4)(2) = 8
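The count above can be checked by listing the outcomes directly. The following is a minimal sketch in Python; the variable names are illustrative assumptions, and the values come from the Bradley Investments table.

```python
from itertools import product

# Possible results (in $000) for each step, from the Bradley Investments example.
markley_oil = [10, 5, 0, -20]   # step 1: n1 = 4 possible results
collins_mining = [8, -2]        # step 2: n2 = 2 possible results

# Each experimental outcome is one (Markley Oil, Collins Mining) pair.
outcomes = list(product(markley_oil, collins_mining))

# The counting rule predicts n1 * n2 experimental outcomes.
predicted = len(markley_oil) * len(collins_mining)

print(len(outcomes), predicted)
for outcome in outcomes:
    print(outcome)
```

Enumerating the pairs this way mirrors what a tree diagram shows: one branch per first-step result, each followed by one branch per second-step result.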


Tree Diagram

Markley Oil    Collins Mining    Experimental
(Stage 1)      (Stage 2)         Outcomes
Gain 10        Gain 8            (10, 8)     Gain $18,000
Gain 10        Lose 2            (10, -2)    Gain $8,000
Gain 5         Gain 8            (5, 8)      Gain $13,000
Gain 5         Lose 2            (5, -2)     Gain $3,000
Even           Gain 8            (0, 8)      Gain $8,000
Even           Lose 2            (0, -2)     Lose $2,000
Lose 20        Gain 8            (-20, 8)    Lose $12,000
Lose 20        Lose 2            (-20, -2)   Lose $22,000


Counting Rule for Combinations

A second useful counting rule enables us to count the

number of experimental outcomes when n objects are to

be selected from a set of N objects.

Number of Combinations of N Objects Taken n at a Time

$$C_n^N = \binom{N}{n} = \frac{N!}{n!\,(N - n)!}$$

where: N! = N(N - 1)(N - 2) . . . (2)(1)

n! = n(n - 1)(n - 2) . . . (2)(1)

0! = 1
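As a rough illustration of the combinations rule, the sketch below computes the count from factorials and cross-checks it against Python's built-in `math.comb`. The function name `combinations_count` and the choice of N = 5, n = 2 are assumptions made for the example, not values from the slides.

```python
from math import comb, factorial

def combinations_count(N: int, n: int) -> int:
    """Number of ways to select n objects from N when order does not matter."""
    # N! / (n! (N - n)!), using integer division because the result is exact.
    return factorial(N) // (factorial(n) * factorial(N - n))

# Hypothetical illustration: select 2 objects from a set of 5.
print(combinations_count(5, 2))
print(comb(5, 2))  # standard-library equivalent
```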


Counting Rule for Permutations

A third useful counting rule enables us to count the

number of experimental outcomes when n objects are to

be selected from a set of N objects, where the order of

selection is important.

Number of Permutations of N Objects Taken n at a Time

$$P_n^N = n!\binom{N}{n} = \frac{N!}{(N - n)!}$$

where: N! = N(N - 1)(N - 2) . . . (2)(1)

n! = n(n - 1)(n - 2) . . . (2)(1)

0! = 1
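A small sketch of the permutations rule follows. It assumes a helper named `permutations_count` and the illustrative values N = 5, n = 2 (neither is from the slides), and it checks that the count equals n! times the number of combinations.

```python
from math import comb, factorial, perm

def permutations_count(N: int, n: int) -> int:
    """Number of ways to select n objects from N when order matters."""
    # N! / (N - n)!
    return factorial(N) // factorial(N - n)

# Hypothetical illustration: select 2 objects from a set of 5, order important.
ordered = permutations_count(5, 2)
unordered = comb(5, 2)

print(ordered)                       # ordered selections
print(factorial(2) * unordered)      # n! times the number of combinations
print(perm(5, 2))                    # standard-library equivalent
```

Each unordered selection of n objects can be arranged in n! orders, which is why the permutation count is always at least as large as the combination count.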


Assigning Probabilities

Classical Method

Assigning probabilities based on the assumption

of equally likely outcomes

Relative Frequency Method

Assigning probabilities based on experimentation

or historical data

Subjective Method

Assigning probabilities based on judgment


Classical Method

If an experiment has n possible outcomes, this method

would assign a probability of 1/n to each outcome.

Example

Experiment: Rolling a die

Sample Space: S = {1, 2, 3, 4, 5, 6}

Probabilities: Each sample point has a

1/6 chance of occurring
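The die example can be written out as a short sketch. It uses exact fractions so the 1/n assignment is visible; the variable names are assumptions for illustration.

```python
from fractions import Fraction

# Sample space for rolling a die.
sample_space = [1, 2, 3, 4, 5, 6]

# Classical method: each of the n outcomes gets probability 1/n.
n = len(sample_space)
probabilities = {point: Fraction(1, n) for point in sample_space}

print(probabilities[1])              # probability of any single sample point
print(sum(probabilities.values()))   # the assignments sum to 1
```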


Relative Frequency Method

Example: Lucas Tool Rental

Lucas Tool Rental would like to assign probabilities

to the number of car polishers it rents each day. Office

records show the following frequencies of daily rentals

for the last 40 days.

Number of Polishers Rented    Number of Days
0                              4
1                              6
2                             18
3                             10
4                              2


Relative Frequency Method

Each probability assignment is given by dividing the

frequency (number of days) by the total frequency (total

number of days).

Number of Polishers Rented    Number of Days    Probability
0                              4                 .10
1                              6                 .15
2                             18                 .45
3                             10                 .25
4                              2                 .05
Total                         40                1.00

(For example, the probability of 0 rentals is 4/40 = .10.)
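The relative frequency calculation for Lucas Tool Rental can be sketched as follows. The dictionary layout and names are assumptions; the frequencies are the ones from the office records.

```python
# Number of days (out of the last 40) on which each rental count was observed.
days_by_rentals = {0: 4, 1: 6, 2: 18, 3: 10, 4: 2}

total_days = sum(days_by_rentals.values())

# Relative frequency method: frequency divided by total frequency.
probabilities = {
    rentals: days / total_days for rentals, days in days_by_rentals.items()
}

for rentals, probability in probabilities.items():
    print(f"{rentals} polishers: {probability:.2f}")
```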


Subjective Method

When economic conditions and a company’s

circumstances change rapidly it might be

inappropriate to assign probabilities based solely on

historical data.

We can use any data available as well as our

experience and intuition, but ultimately a probability

value should express our degree of belief that the

experimental outcome will occur.

The best probability estimates often are obtained by

combining the estimates from the classical or relative

frequency approach with the subjective estimate.


Subjective Method

Applying the subjective method, an analyst made

the following probability assignments.

Exper. Outcome    Net Gain or Loss    Probability
(10, 8)           $18,000 Gain        .20
(10, -2)          $8,000 Gain         .08
(5, 8)            $13,000 Gain        .16
(5, -2)           $3,000 Gain         .26
(0, 8)            $8,000 Gain         .10
(0, -2)           $2,000 Loss         .12
(-20, 8)          $12,000 Loss        .02
(-20, -2)         $22,000 Loss        .06


Events and Their Probabilities

An event is a collection of sample points.

The probability of any event is equal to the sum of

the probabilities of the sample points in the event.

If we can identify all the sample points of an

experiment and assign a probability to each, we

can compute the probability of an event.


Events and Their Probabilities

Event M = Markley Oil Profitable

M = {(10, 8), (10, -2), (5, 8), (5, -2)}

P(M) = P(10, 8) + P(10, -2) + P(5, 8) + P(5, -2)

= .20 + .08 + .16 + .26

= .70


Events and Their Probabilities

Event C = Collins Mining Profitable

C = {(10, 8), (5, 8), (0, 8), (-20, 8)}

P(C) = P(10, 8) + P(5, 8) + P(0, 8) + P(-20, 8)

= .20 + .16 + .10 + .02

= .48
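Summing sample point probabilities to get P(M) and P(C) can be sketched in a few lines. The dictionary `p` holds the analyst's subjective assignments for the Bradley Investments example; the helper name `event_probability` is an assumption for illustration.

```python
# Subjective probabilities for each (Markley Oil, Collins Mining) sample point.
p = {
    (10, 8): 0.20, (10, -2): 0.08,
    (5, 8): 0.16, (5, -2): 0.26,
    (0, 8): 0.10, (0, -2): 0.12,
    (-20, 8): 0.02, (-20, -2): 0.06,
}

def event_probability(event):
    """Probability of an event = sum of the probabilities of its sample points."""
    return sum(p[point] for point in event)

# Event M: Markley Oil profitable; event C: Collins Mining profitable.
M = {point for point in p if point[0] > 0}
C = {point for point in p if point[1] > 0}

print(round(event_probability(M), 2))
print(round(event_probability(C), 2))
```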


Some Basic Relationships of Probability

There are some basic probability relationships that

can be used to compute the probability of an event

without knowledge of all the sample point probabilities.

Complement of an Event

Union of Two Events

Intersection of Two Events

Mutually Exclusive Events


Complement of an Event

The complement of event A is defined to be the event

consisting of all sample points that are not in A.

The complement of A is denoted by A^c.

[Venn diagram: event A and its complement A^c within sample space S]


Union of Two Events

The union of events A and B is the event containing

all sample points that are in A or B or both.

The union of events A and B is denoted by A ∪ B.

[Venn diagram: events A and B within sample space S]


Union of Two Events

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∪ C = Markley Oil Profitable or Collins Mining Profitable (or both)

M ∪ C = {(10, 8), (10, -2), (5, 8), (5, -2), (0, 8), (-20, 8)}

P(M ∪ C) = P(10, 8) + P(10, -2) + P(5, 8) + P(5, -2) + P(0, 8) + P(-20, 8)

= .20 + .08 + .16 + .26 + .10 + .02

= .82


Intersection of Two Events

The intersection of events A and B is the set of all

sample points that are in both A and B.

The intersection of events A and B is denoted by A ∩ B.

[Venn diagram: events A and B within sample space S, with the intersection of A and B marked]


Intersection of Two Events

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∩ C = Markley Oil Profitable and Collins Mining Profitable

M ∩ C = {(10, 8), (5, 8)}

P(M ∩ C) = P(10, 8) + P(5, 8)

= .20 + .16

= .36


Addition Law

The addition law provides a way to compute the

probability of event A, or B, or both A and B occurring.

The law is written as:

P(A ∪ B) = P(A) + P(B) - P(A ∩ B)


Addition Law

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∪ C = Markley Oil Profitable or Collins Mining Profitable (or both)

We know: P(M) = .70, P(C) = .48, P(M ∩ C) = .36

Thus: P(M ∪ C) = P(M) + P(C) - P(M ∩ C)

= .70 + .48 - .36

= .82

(This result is the same as that obtained earlier

using the definition of the probability of an event.)
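The agreement between the two routes to P(M ∪ C) can be checked with Python sets, where `|` is union and `&` is intersection. This is a sketch under the same subjective probability assignments used in the Bradley Investments example; the names `p` and `prob` are illustrative assumptions.

```python
from math import isclose

# Subjective probabilities for each (Markley Oil, Collins Mining) sample point.
p = {
    (10, 8): 0.20, (10, -2): 0.08,
    (5, 8): 0.16, (5, -2): 0.26,
    (0, 8): 0.10, (0, -2): 0.12,
    (-20, 8): 0.02, (-20, -2): 0.06,
}

def prob(event):
    """Sum the probabilities of the sample points in an event."""
    return sum(p[point] for point in event)

M = {point for point in p if point[0] > 0}   # Markley Oil profitable
C = {point for point in p if point[1] > 0}   # Collins Mining profitable

# Route 1: sum over the sample points in the union.
direct = prob(M | C)

# Route 2: addition law, subtracting the intersection counted twice.
by_addition_law = prob(M) + prob(C) - prob(M & C)

print(round(direct, 2), round(by_addition_law, 2))
print(isclose(direct, by_addition_law))
```

The subtraction matters because the points (10, 8) and (5, 8) belong to both M and C and would otherwise be counted twice.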


Mutually Exclusive Events

Two events are said to be mutually exclusive if the

events have no sample points in common.

Two events are mutually exclusive if, when one event

occurs, the other cannot occur.

[Venn diagram: events A and B within sample space S]


Mutually Exclusive Events

If events A and B are mutually exclusive, P(A ∩ B) = 0.

The addition law for mutually exclusive events is:

P(A ∪ B) = P(A) + P(B)

There is no need to include “- P(A ∩ B)”.


Conditional Probability

The probability of an event given that another event

has occurred is called a conditional probability.

The conditional probability of A given B is denoted

by P(A|B).

A conditional probability is computed as follows :

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)}$$


Conditional Probability

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

P(C| M ) = Collins Mining Profitable

given Markley Oil Profitable

We know: P(M ∩ C) = .36, P(M) = .70

Thus:

$$P(C \mid M) = \frac{P(C \cap M)}{P(M)} = \frac{.36}{.70} = .5143$$
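The conditional probability calculation can be sketched as a small function. The name `conditional_probability` and the guard against a zero denominator are assumptions added for the example.

```python
def conditional_probability(p_joint: float, p_given: float) -> float:
    """P(A|B) = P(A and B) / P(B), defined only when P(B) > 0."""
    if p_given <= 0:
        raise ValueError("the conditioning event must have positive probability")
    return p_joint / p_given

# Bradley Investments: P(M and C) = .36 and P(M) = .70.
p_c_given_m = conditional_probability(0.36, 0.70)

print(round(p_c_given_m, 4))
```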


Multiplication Law

The multiplication law provides a way to compute the

probability of the intersection of two events.

The law is written as:

P(A ∩ B) = P(B)P(A|B)


Multiplication Law

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∩ C = Markley Oil Profitable and Collins Mining Profitable

We know: P(M) = .70, P(C|M) = .5143

Thus: P(M ∩ C) = P(M)P(C|M)

= (.70)(.5143)

= .36

(This result is the same as that obtained earlier

using the definition of the probability of an event.)
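A minimal sketch of the multiplication law applied to the Bradley Investments figures is shown below. The function name `joint_probability` is an assumption; the inputs are P(M) and P(C|M) from the example.

```python
def joint_probability(p_given: float, p_conditional: float) -> float:
    """Multiplication law: P(A and B) = P(B) * P(A|B)."""
    return p_given * p_conditional

# P(M and C) = P(M) * P(C|M)
p_m_and_c = joint_probability(0.70, 0.5143)

# .5143 is itself rounded, so the product is rounded back to two decimals.
print(round(p_m_and_c, 2))
```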


Independent Events

If the probability of event A is not changed by the

existence of event B, we would say that events A

and B are independent.

Two events A and B are independent if:

P(A|B) = P(A) or P(B|A) = P(B)


Multiplication Law

for Independent Events

The multiplication law also can be used as a test to see

if two events are independent.

The law is written as:

P(A ∩ B) = P(A)P(B)


Multiplication Law

for Independent Events

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

Are events M and C independent?

Does P(M ∩ C) = P(M)P(C) ?

We know: P(M ∩ C) = .36, P(M) = .70, P(C) = .48

But: P(M)P(C) = (.70)(.48) = .34, not .36

Hence: M and C are not independent.
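The independence test can be sketched as a comparison of the joint probability with the product of the two individual probabilities. The function name `are_independent` and the tolerance value are assumptions for illustration; a tolerance is used because floating-point products are rarely exactly equal.

```python
from math import isclose

def are_independent(p_joint: float, p_a: float, p_b: float,
                    tol: float = 1e-9) -> bool:
    """Events are independent when P(A and B) equals P(A) * P(B)."""
    return isclose(p_joint, p_a * p_b, abs_tol=tol)

# Bradley Investments: P(M and C) = .36, P(M) = .70, P(C) = .48.
product_of_marginals = 0.70 * 0.48
independent = are_independent(0.36, 0.70, 0.48)

print(round(product_of_marginals, 2))
print(independent)
```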


Bayes’ Theorem

Often we begin probability analysis with initial or

prior probabilities.

Then, from a sample, special report, or a product

test we obtain some additional information.

Given this information, we calculate revised or

posterior probabilities.

Bayes’ theorem provides the means for revising the

prior probabilities.

Prior Probabilities -> New Information -> Application of Bayes’ Theorem -> Posterior Probabilities


Bayes’ Theorem

Example: L. S. Clothiers

A proposed shopping center will provide strong

competition for downtown businesses like L. S.

Clothiers. If the shopping center is built, the owner of

L. S. Clothiers feels it would be best to relocate to the

center.

The shopping center cannot be built unless a zoning

change is approved by the town council. The planning

board must first make a recommendation, for or against

the zoning change, to the council.


Bayes’ Theorem

Prior Probabilities

Let:

A1 = town council approves the zoning change

A2 = town council disapproves the change

Using subjective judgment:

P(A1) = .7, P(A2) = .3


Bayes’ Theorem

New Information

The planning board has recommended against the

zoning change. Let B denote the event of a negative

recommendation by the planning board.

Given that B has occurred, should L. S. Clothiers

revise the probabilities that the town council will

approve or disapprove the zoning change?


Bayes’ Theorem

Conditional Probabilities

Past history with the planning board and the

town council indicates the following:

P(B|A1) = .2 P(B|A2) = .9

Hence: P(B^c|A1) = .8 P(B^c|A2) = .1


Bayes’ Theorem

Tree Diagram

Town Council    Planning Board       Experimental Outcomes
P(A1) = .7      P(B|A1) = .2         P(A1 ∩ B) = .14
P(A1) = .7      P(B^c|A1) = .8       P(A1 ∩ B^c) = .56
P(A2) = .3      P(B|A2) = .9         P(A2 ∩ B) = .27
P(A2) = .3      P(B^c|A2) = .1       P(A2 ∩ B^c) = .03
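The four branch-end probabilities of the tree can be reproduced by multiplying along each path. The sketch below assumes dictionary names of its own choosing and uses the label "Bc" for the complement of B; the numbers are the priors and conditional probabilities from the L. S. Clothiers example.

```python
from math import isclose

priors = {"A1": 0.7, "A2": 0.3}          # P(A1), P(A2)
p_b_given = {"A1": 0.2, "A2": 0.9}       # P(B|A1), P(B|A2)

# Multiply along each branch: prior times conditional probability.
joint = {}
for event, prior in priors.items():
    joint[(event, "B")] = prior * p_b_given[event]
    joint[(event, "Bc")] = prior * (1 - p_b_given[event])

for branch, probability in joint.items():
    print(branch, round(probability, 2))

# The four experimental outcomes cover the whole sample space.
print(isclose(sum(joint.values()), 1.0))
```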


Bayes’ Theorem

To find the posterior probability that event Ai will

occur given that event B has occurred, we apply

Bayes’ theorem.

$$P(A_i \mid B) = \frac{P(A_i)P(B \mid A_i)}{P(A_1)P(B \mid A_1) + P(A_2)P(B \mid A_2) + \cdots + P(A_n)P(B \mid A_n)}$$

Bayes’ theorem is applicable when the events for

which we want to compute posterior probabilities

are mutually exclusive and their union is the entire

sample space.


Bayes’ Theorem

Posterior Probabilities

Given the planning board’s recommendation not

to approve the zoning change, we revise the prior

probabilities as follows:

$$P(A_1 \mid B) = \frac{P(A_1)P(B \mid A_1)}{P(A_1)P(B \mid A_1) + P(A_2)P(B \mid A_2)} = \frac{(.7)(.2)}{(.7)(.2) + (.3)(.9)} = .34$$
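Bayes’ theorem for any number of mutually exclusive, collectively exhaustive events can be sketched as one short function. The name `bayes_posterior` and its list-based interface are assumptions for illustration; the inputs below are the L. S. Clothiers priors and conditional probabilities.

```python
def bayes_posterior(priors, likelihoods):
    """Return P(Ai|B) for each event Ai.

    priors[i] is P(Ai) and likelihoods[i] is P(B|Ai). The events Ai are
    assumed to be mutually exclusive with a union equal to the sample space.
    """
    joints = [prior * likelihood for prior, likelihood in zip(priors, likelihoods)]
    p_b = sum(joints)  # denominator of Bayes' theorem
    if p_b == 0:
        raise ValueError("P(B) is zero, so the posteriors are undefined")
    return [joint / p_b for joint in joints]

# A1 = council approves, A2 = council disapproves, B = negative recommendation.
posteriors = bayes_posterior([0.7, 0.3], [0.2, 0.9])

print([round(value, 4) for value in posteriors])
```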


Bayes’ Theorem

Conclusion

The planning board’s recommendation is good news for L. S. Clothiers, because the shopping center would compete with the store. The posterior probability of the town council approving the zoning change is .34, compared to a prior probability of .70.


Tabular Approach

Step 1

Prepare the following three columns:

Column 1 - The mutually exclusive events for which

posterior probabilities are desired.

Column 2 - The prior probabilities for the events.

Column 3 - The conditional probabilities of the new

information given each event.


Tabular Approach

(1)       (2)              (3)              (4)    (5)
          Prior            Conditional
Events    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)
A1        .7               .2
A2        .3               .9
          1.0


Tabular Approach

Step 2

Column 4

Compute the joint probabilities for each event and

the new information B by using the multiplication

law.

Multiply the prior probabilities in column 2 by the corresponding conditional probabilities in column 3. That is, P(Ai ∩ B) = P(Ai) P(B|Ai).


Tabular Approach

(1)       (2)              (3)              (4)              (5)
          Prior            Conditional      Joint
Events    Probabilities    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)          P(Ai ∩ B)
A1        .7               .2               .14
A2        .3               .9               .27
          1.0

(For A1, the joint probability is .7 x .2 = .14.)


Tabular Approach

Step 2 (continued)

We see that there is a .14 probability of the town

council approving the zoning change and a negative

recommendation by the planning board.

There is a .27 probability of the town council

disapproving the zoning change and a negative

recommendation by the planning board.


Tabular Approach

Step 3

Column 4

Sum the joint probabilities. The sum is the

probability of the new information, P(B). The sum

.14 + .27 shows an overall probability of .41 of a

negative recommendation by the planning board.


Tabular Approach

(1)       (2)              (3)              (4)              (5)
          Prior            Conditional      Joint
Events    Probabilities    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)          P(Ai ∩ B)
A1        .7               .2               .14
A2        .3               .9               .27
          1.0                               P(B) = .41


Tabular Approach

Step 4

Column 5

Compute the posterior probabilities using the basic

relationship of conditional probability.

$$P(A_i \mid B) = \frac{P(A_i \cap B)}{P(B)}$$

The joint probabilities P(Ai ∩ B) are in column 4 and the probability P(B) is the sum of column 4.


Tabular Approach

(1)       (2)              (3)              (4)              (5)
          Prior            Conditional      Joint            Posterior
Events    Probabilities    Probabilities    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)          P(Ai ∩ B)        P(Ai|B)
A1        .7               .2               .14              .3415
A2        .3               .9               .27              .6585
          1.0                               P(B) = .41       1.0000

(For A1, the posterior probability is .14/.41 = .3415.)
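The four steps of the tabular approach can be followed column by column in a short sketch. The row layout, variable names, and print formatting are assumptions made for illustration; the inputs are columns 1 to 3 of the table.

```python
# Step 1: columns 1-3 (event, prior probability, conditional probability).
rows = [
    {"event": "A1", "prior": 0.7, "conditional": 0.2},
    {"event": "A2", "prior": 0.3, "conditional": 0.9},
]

# Step 2: column 4, joint probability = prior * conditional.
for row in rows:
    row["joint"] = row["prior"] * row["conditional"]

# Step 3: P(B) is the sum of column 4.
p_b = sum(row["joint"] for row in rows)

# Step 4: column 5, posterior probability = joint / P(B).
for row in rows:
    row["posterior"] = row["joint"] / p_b

print("Event  Prior  Cond.  Joint  Posterior")
for row in rows:
    print(f"{row['event']:<6} {row['prior']:<6.1f} {row['conditional']:<6.1f} "
          f"{row['joint']:<6.2f} {row['posterior']:.4f}")
print(f"P(B) = {p_b:.2f}")
```

Keeping the intermediate columns in each row makes it easy to compare the computed values against the table one column at a time.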


End of Chapter 4

© 2009 Thomson South-Western. All Rights Reserved Slide 55

Vous aimerez peut-être aussi

- Nat ReviewerDocument4 pagesNat Reviewerericmilamattim88% (48)

- Hackerrank TestDocument3 pagesHackerrank Testswapnil0% (7)

- Sales Transactions Salesperson Sales AmountDocument6 pagesSales Transactions Salesperson Sales AmountRahul SinghPas encore d'évaluation

- John Loucks: 1 Slide © 2009 Thomson South-Western. All Rights ReservedDocument56 pagesJohn Loucks: 1 Slide © 2009 Thomson South-Western. All Rights Reservedauro auroPas encore d'évaluation

- Chapter 4Document57 pagesChapter 4Omar ALdeebPas encore d'évaluation

- 4 - Introduction To ProbabilityDocument55 pages4 - Introduction To ProbabilitySaurabh KhuranaPas encore d'évaluation

- BS Session 3-4Document56 pagesBS Session 3-4SRINIVASPas encore d'évaluation

- CH 04Document51 pagesCH 04Aitesam UllahPas encore d'évaluation

- CH 04Document51 pagesCH 04Quỳnh Anh Nguyễn TháiPas encore d'évaluation

- CHP 4Document49 pagesCHP 4Muhammad BilalPas encore d'évaluation

- Session - 1 - Probability - Probability DistributionDocument25 pagesSession - 1 - Probability - Probability DistributionSrishti TyagiPas encore d'évaluation

- Introduction To ProbabilityDocument50 pagesIntroduction To ProbabilityKukreja JitinPas encore d'évaluation

- Probability and Probability DistributionDocument100 pagesProbability and Probability DistributionVinay AroraPas encore d'évaluation

- Chap 4Document48 pagesChap 4Hoàng Kim ThanhPas encore d'évaluation

- ProbabilityDocument62 pagesProbabilityMohit BholaPas encore d'évaluation

- Week 5a - ProbabilityDocument45 pagesWeek 5a - Probability_vanitykPas encore d'évaluation

- QM Session 4Document44 pagesQM Session 4harherron123Pas encore d'évaluation

- Decision Making in Operations ManagementDocument52 pagesDecision Making in Operations ManagementTin Keiiy0% (1)

- BUsiness Statistics 7Document26 pagesBUsiness Statistics 7soumiya kamakshiPas encore d'évaluation

- 37 2Document20 pages37 2Mayar ZoPas encore d'évaluation

- John Loucks: 1 Slide © 2008 Thomson South-Western. All Rights ReservedDocument57 pagesJohn Loucks: 1 Slide © 2008 Thomson South-Western. All Rights ReservedAmar RaoPas encore d'évaluation

- John Loucks: 1 Slide © 2008 Thomson South-Western. All Rights ReservedDocument67 pagesJohn Loucks: 1 Slide © 2008 Thomson South-Western. All Rights Reservedsaurav.martPas encore d'évaluation

- The Binomial DistributionDocument19 pagesThe Binomial DistributionK Kunal RajPas encore d'évaluation

- 2) Lecture - Predictability - Fall - 2022Document25 pages2) Lecture - Predictability - Fall - 2022DunsScotoPas encore d'évaluation

- Multiple RegressionDocument57 pagesMultiple RegressionChristian Alfred VillenaPas encore d'évaluation

- Central Limit Theorem For The Realized Volatility Based On Tick Time Sampling - SlidesDocument21 pagesCentral Limit Theorem For The Realized Volatility Based On Tick Time Sampling - SlidesTraderCat SolarisPas encore d'évaluation

- Chapter 06Document42 pagesChapter 06neltyPas encore d'évaluation

- Chapter Six: ProbabilityDocument58 pagesChapter Six: Probabilitymahesh karajolPas encore d'évaluation

- CHAPTER 15: Decision Theory: Business Statistics in Practice, Third Canadian EditionDocument5 pagesCHAPTER 15: Decision Theory: Business Statistics in Practice, Third Canadian Editionamul65Pas encore d'évaluation

- 10 Quantitative Risk Assessment (Cost Benefit and Risk)Document40 pages10 Quantitative Risk Assessment (Cost Benefit and Risk)theresia windiPas encore d'évaluation

- CH 032Document57 pagesCH 032Gandhi MardiPas encore d'évaluation

- Inferential StatisticsDocument8 pagesInferential StatisticsDhruv JainPas encore d'évaluation

- John Loucks: 1 Slide © 2008 Thomson South-Western. All Rights ReservedDocument55 pagesJohn Loucks: 1 Slide © 2008 Thomson South-Western. All Rights ReservedMaxPas encore d'évaluation

- Chapter 7 - Part 1Document22 pagesChapter 7 - Part 1zerin.emuPas encore d'évaluation

- CH 12 SimulationDocument49 pagesCH 12 SimulationscribdnewidPas encore d'évaluation

- CH 02Document59 pagesCH 02sergiolombricoPas encore d'évaluation

- Sample CIVE70052 Solutions Stochastic Water Resources Management - HWRMDocument5 pagesSample CIVE70052 Solutions Stochastic Water Resources Management - HWRMOlyna IakovenkoPas encore d'évaluation

- Random Number GenerationDocument42 pagesRandom Number GenerationNikhil AggarwalPas encore d'évaluation

- Quality Management: Tools and TechniquesDocument41 pagesQuality Management: Tools and TechniquesNusaPas encore d'évaluation

- Basic ProbabilityDocument59 pagesBasic ProbabilityAiro MirandaPas encore d'évaluation

- Lightning Protection 3 PDF FreeDocument5 pagesLightning Protection 3 PDF FreeKim Sang HuỳnhPas encore d'évaluation

- 5/13/2012 Prof. P. K. Dash 1Document37 pages5/13/2012 Prof. P. K. Dash 1Richa AnandPas encore d'évaluation

- Financial Applications of Random MatrixDocument18 pagesFinancial Applications of Random Matrixxi yanPas encore d'évaluation

- Aba3691 2015 06 NorDocument5 pagesAba3691 2015 06 NorPinias ShefikaPas encore d'évaluation

- Managerial Statistics: Session 05Document19 pagesManagerial Statistics: Session 05Praveen DwivediPas encore d'évaluation

- Linear Programming NotesDocument9 pagesLinear Programming NotesMohit aswalPas encore d'évaluation

- CH 18Document42 pagesCH 18Saadat Ullah KhanPas encore d'évaluation

- DGD 9Document24 pagesDGD 9Sophia SaidPas encore d'évaluation

- Data Mining 2020Document2 pagesData Mining 2020waleedPas encore d'évaluation

- Chapter 5Document23 pagesChapter 5Doug BezerediPas encore d'évaluation

- Chap 003Document24 pagesChap 003Beste KarıkPas encore d'évaluation

- Problem Formulation (LP Section1)Document34 pagesProblem Formulation (LP Section1)Swastik MohapatraPas encore d'évaluation

- Oleh Dr. Drs. Yohanes Suhari, MMSIDocument22 pagesOleh Dr. Drs. Yohanes Suhari, MMSIHardy Gulasemut PhongPas encore d'évaluation

- A Probability and Statistics RefresherDocument33 pagesA Probability and Statistics RefresherjoshtrompPas encore d'évaluation

- İst 211 - W3 - 1Document33 pagesİst 211 - W3 - 1hikmetdenizyavasPas encore d'évaluation

- Transportation, Assignment and Tra Nsshipment ProblemsDocument72 pagesTransportation, Assignment and Tra Nsshipment ProblemsSalman NamLasPas encore d'évaluation

- Tutorial 5 Sem 1 2021-22Document6 pagesTutorial 5 Sem 1 2021-22Pap Zhong HuaPas encore d'évaluation

- QMB9 15Document54 pagesQMB9 15Sarankumar Reddy DuvvuruPas encore d'évaluation

- Chapter 3 Introduction To Linear Programming: Resources Among Competing Activities in The Best Possible (Optimal) WayDocument13 pagesChapter 3 Introduction To Linear Programming: Resources Among Competing Activities in The Best Possible (Optimal) Way李萬仁Pas encore d'évaluation

- Determination of Rate Equation - 2 QPDocument12 pagesDetermination of Rate Equation - 2 QPSalman ZaidiPas encore d'évaluation

- The Application of The Percentage Change Calculation in The Context of Inflation in Mathematical LiteracyDocument11 pagesThe Application of The Percentage Change Calculation in The Context of Inflation in Mathematical LiteracyChelsea RosePas encore d'évaluation

- Industry4 0anditsimpactDocument11 pagesIndustry4 0anditsimpactChelsea RosePas encore d'évaluation

- 1.0 Background of StudyDocument3 pages1.0 Background of StudyChelsea RosePas encore d'évaluation

- Math and You Chapter 4 PDFDocument50 pagesMath and You Chapter 4 PDFChelsea RosePas encore d'évaluation

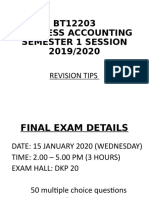

- BT12203 Revision TipsDocument10 pagesBT12203 Revision TipsChelsea RosePas encore d'évaluation

- AdafdfgdgDocument15 pagesAdafdfgdgChelsea RosePas encore d'évaluation

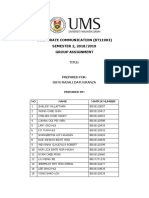

- International Economics-Group Assignment - EditedDocument14 pagesInternational Economics-Group Assignment - EditedChelsea RosePas encore d'évaluation

- Is Climate Change RealDocument1 pageIs Climate Change RealChelsea RosePas encore d'évaluation

- Review of Other Countries' Toilet and The Comparison of The Behavior of Malaysian and Foreigner in ToiletDocument2 pagesReview of Other Countries' Toilet and The Comparison of The Behavior of Malaysian and Foreigner in ToiletChelsea RosePas encore d'évaluation

- English For Research Purposes (Ub00502) SEMESTER 2, 2018/2019 Group Assignment Section 4Document21 pagesEnglish For Research Purposes (Ub00502) SEMESTER 2, 2018/2019 Group Assignment Section 4Chelsea RosePas encore d'évaluation

- English For Research Purposes (Ub00502) SEMESTER 2, 2018/2019 Group Assignment Section 4Document21 pagesEnglish For Research Purposes (Ub00502) SEMESTER 2, 2018/2019 Group Assignment Section 4Chelsea RosePas encore d'évaluation

- CSR ReportDocument13 pagesCSR ReportChelsea RosePas encore d'évaluation

- NotesDocument15 pagesNotesChelsea RosePas encore d'évaluation

- Department of Statistics Malaysia: EMBARGO: Only To Be Published or Disseminated at 1200 Hours, 29 June 2015Document5 pagesDepartment of Statistics Malaysia: EMBARGO: Only To Be Published or Disseminated at 1200 Hours, 29 June 2015Chelsea RosePas encore d'évaluation

- His Strength Is PerfectDocument9 pagesHis Strength Is PerfectChelsea RosePas encore d'évaluation

- Macro ReferencesDocument1 pageMacro ReferencesChelsea RosePas encore d'évaluation

- Department of Statistics Malaysia: EMBARGO: Only To Be Published or Disseminated at 1200 Hours, Friday, 28 April 2017Document4 pagesDepartment of Statistics Malaysia: EMBARGO: Only To Be Published or Disseminated at 1200 Hours, Friday, 28 April 2017Chelsea RosePas encore d'évaluation

- 14.1 Differentials 14.1 Differentials: (1 of 4) (2 of 4)Document1 page14.1 Differentials 14.1 Differentials: (1 of 4) (2 of 4)Chelsea RosePas encore d'évaluation

- Department of Statistics Malaysia: EMBARGO: Only To Be Published or Disseminated at 1200 Hours, Friday, 27 April 2018Document4 pagesDepartment of Statistics Malaysia: EMBARGO: Only To Be Published or Disseminated at 1200 Hours, Friday, 27 April 2018Chelsea RosePas encore d'évaluation

- 2020-21 G12 Mock AA-HL Calculator Practice Questions ANSWERSDocument23 pages2020-21 G12 Mock AA-HL Calculator Practice Questions ANSWERS0010048Pas encore d'évaluation

- Eval Exam 2Document3 pagesEval Exam 2Krisia MartinezPas encore d'évaluation

- Region IX, Zamboanga Peninsula: Interdisciplinary Contextualization (Icon) and Inquiry-Based Lesson Plan in MathDocument7 pagesRegion IX, Zamboanga Peninsula: Interdisciplinary Contextualization (Icon) and Inquiry-Based Lesson Plan in MathRebecca CastroPas encore d'évaluation

- C++ Module Chapter 3Document11 pagesC++ Module Chapter 3Bacha TarikuPas encore d'évaluation

- LR 1 Error AnalysisDocument17 pagesLR 1 Error AnalysisMuhammad MursaleenPas encore d'évaluation

- Spotlight On Teachers: James LewisDocument8 pagesSpotlight On Teachers: James Lewissamira said mazrukPas encore d'évaluation

- Numerical Solution of The Two-Dimensional Unsteady Dam BreakDocument19 pagesNumerical Solution of The Two-Dimensional Unsteady Dam BreakHindreen MohammedPas encore d'évaluation

- Translation and RotationDocument16 pagesTranslation and RotationgbonetePas encore d'évaluation

- Group Performance Task1-Math 8 Illustrating Triangle InequalitiesDocument2 pagesGroup Performance Task1-Math 8 Illustrating Triangle Inequalitiesromina javierPas encore d'évaluation

- Designing Rich Numeracy Tasks: The University of QueenslandDocument9 pagesDesigning Rich Numeracy Tasks: The University of QueenslanddannyPas encore d'évaluation

- PdeDocument1 784 pagesPdemengchheang0% (1)

- Shell ProgramsDocument6 pagesShell ProgramsRithika M NagendiranPas encore d'évaluation

- May18 Question Paper Engineering Mathematics 2 SPPUDocument3 pagesMay18 Question Paper Engineering Mathematics 2 SPPUSkye EdmnetPas encore d'évaluation

- Week 6 - Important Random Variables and Their DistributionsDocument24 pagesWeek 6 - Important Random Variables and Their DistributionsHaris GhafoorPas encore d'évaluation

- 小五組 Grade 5: 時限: 分鐘 Time allowed: minutesDocument5 pages小五組 Grade 5: 時限: 分鐘 Time allowed: minutestoga lubis100% (1)

- MST121 Course GuideDocument12 pagesMST121 Course Guidejunk6789Pas encore d'évaluation

- Numerical Analysis Measuring Errors: Lecture # 2Document29 pagesNumerical Analysis Measuring Errors: Lecture # 2Sajid ShahidPas encore d'évaluation

- Simple & Compound Interest, Growth & Decay AQA GCSE Maths Questions & Answers 2022 (Hard) Save My ExamsDocument1 pageSimple & Compound Interest, Growth & Decay AQA GCSE Maths Questions & Answers 2022 (Hard) Save My Examsjishnu.rajwaniPas encore d'évaluation

- Discrete Random Variables and Their Probability DistributionsDocument137 pagesDiscrete Random Variables and Their Probability DistributionsZaid Jabbar JuttPas encore d'évaluation

- Study Notes-Theory of ComputationDocument47 pagesStudy Notes-Theory of ComputationSwarnavo MondalPas encore d'évaluation

- Lattice TheoryDocument36 pagesLattice TheoryShravya S MallyaPas encore d'évaluation

- Mathematics CompetitionDocument4 pagesMathematics Competitionsegun adebayoPas encore d'évaluation

- Computerweek 2016Document12 pagesComputerweek 2016api-320497288Pas encore d'évaluation

- Module 3: Sequence Components and Fault Analysis: Sequence ComponentsDocument11 pagesModule 3: Sequence Components and Fault Analysis: Sequence Componentssunny1725Pas encore d'évaluation

- Computer - New - Automata Theory Lecture 6 Equivalence and Reduction of Finite State MachinesDocument35 pagesComputer - New - Automata Theory Lecture 6 Equivalence and Reduction of Finite State MachinesBolarinwa OwuogbaPas encore d'évaluation

- Maths Specimen Paper 1 Mark Scheme 2014 2017Document12 pagesMaths Specimen Paper 1 Mark Scheme 2014 2017Kevin Aarasinghe0% (1)

- Sol HW4Document3 pagesSol HW4Lelouch V. BritaniaPas encore d'évaluation

- Simple Linear Regression Part 1Document63 pagesSimple Linear Regression Part 1_vanitykPas encore d'évaluation